Method Article

A Balanced Test of Comprehension Versus Production Practice using Artificial Language Learning

Not Published

Summary

The goal of this protocol is to compare comprehension-based and production-based language training in a computer-based experiment that balances inherent task differences between comprehension and production.

Abstract

Research and theories in the field of second language acquisition have long held that language comprehension is a stronger learning experience than language production, especially for learning the grammar of a second language. In contrast, psychology research shows that, at least at the single word level, the opposite is true: language production training, due to the memory processes involved, leads to better learning of words in a second language. The inherent differences between language production and comprehension were not well-balanced in prior research, potentially leading to these conflicting results. Thus, the present study’s protocol includes language comprehension and production training tasks that are balanced for listening experience, task-relevant choices, and attention. In the active production task, participants see a picture and are asked to describe it out loud. In the active comprehension task, participants see a picture and hear a phrase. They make a match/mismatch judgment on whether the phrase describes the picture or not. In both conditions, participants hear the correct description of the picture after the task. This full protocol includes computer-based language training in which participants gradually learn an artificial language, building up from single words to full sentences. Training alternates the active production or comprehension tasks with passive exposure to familiarize participants with the language. After training, participants’ learning is assessed using several tests that tap into both vocabulary and grammar learning. Versions of this protocol have been used for learning both artificial and natural languages and have consistently shown that participants in the production condition learn the language better than participants in the comprehension condition. 
Extensions of this protocol could be used for comparing the effects of comprehension versus production training on different language phenomena in different languages of interest.

Introduction

Language learning inevitably involves practice in both comprehension (i.e., listening) and production (i.e., speaking), skills which require different amounts of attention and rely on different memory processes. Focusing on either comprehension or production practice may yield different results in language learning because of the differences in task demands. Research in the field of second language acquisition strongly suggests that to learn the grammar of a second language, comprehension practice is more useful than production practice [1,2]. However, memory research suggests that production practice can boost learning compared to comprehension practice, at least at the single word level. Production practice provides an additional presentation of the material, because the speaker can hear their own pronunciation [3]. Because production practice demands more attention, it leads to greater depth of processing [4]. Production involves making task-relevant choices, because a speaker must choose what to say, and making task-relevant choices increases learning [5]. Finally, comprehension only involves recognition, whereas production relies on recall, which is a stronger learning experience [6].

Thus, the literature paints a mixed picture of the merits of comprehension versus production training [7]. This may be due to two gaps in the literature that are addressed in the method presented here. First, whereas second language acquisition literature has focused on grammar learning, psychology literature has focused on single word learning. Second, most previous studies did not seek to balance production and comprehension training: while production and comprehension are innately different, some of the task demands can be balanced in order to focus on inherent processing differences.

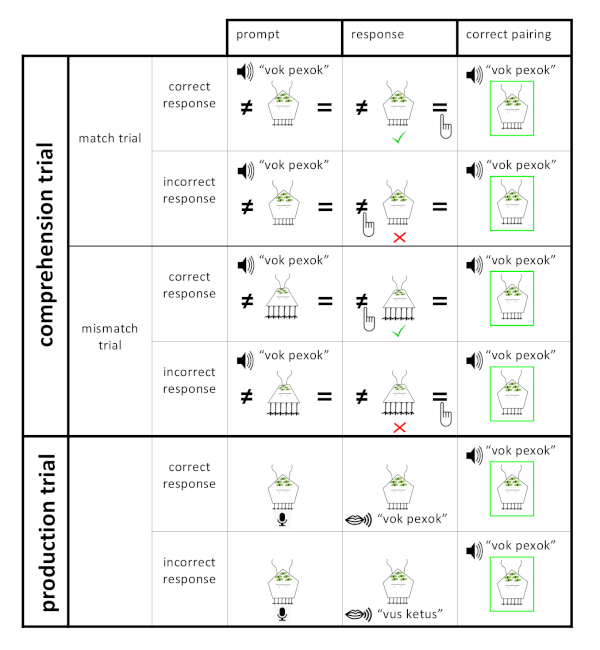

The method presented here reduces some of the differences between production and comprehension demands to allow for a more direct comparison. The core of the method is to have participants in the comprehension and production conditions practice with the same language materials in different ways. An active training trial gives participants in both conditions practice with the same target image and the target phrase describing it. Each active training trial consists of three parts: the first is a prompt that contains either the target phrase or the target image; the second is the active part, in which the participant responds to the prompt; the third presents the participant with the correct pairing of the target image and the target phrase describing it. The third part with the correct pairing is identical for participants in both conditions. Production participants start with the target image and are asked to describe it out loud in the language they are learning. Comprehension participants start with the target phrase and see an image on the screen that may or may not match the target phrase. Their active task is to make a match/mismatch judgment. Both sets of participants are passively exposed to similar language material before starting the active task, so that they have the same input to draw on for their active task. For both sets of participants, the active response to the prompt (speaking or making a match/mismatch judgment) is followed by a correct pairing of the image and the phrase that describes it.

In the two tasks contrasted here, the amount of listening experience is more balanced than in previous comparisons between comprehension and production training: comprehension participants hear the target phrase, which may or may not correctly describe the image shown on that trial, while production participants hear their own speech, which also may or may not correctly describe the target image. Both sets of participants then hear and see the target phrase and target image in the final part of the trial to ensure they can learn the language properly. Attention demands are also balanced between the two conditions: both tasks require an overt response to a picture.

This careful balancing of listening experience, task demands, and attention is not generally done in second language acquisition studies comparing production and comprehension training. The comprehension tasks used in that literature [7] are similar to the comprehension training task and the comprehension tests employed after learning: error indication, multiple choice, and matching a picture with input. The crucial difference between this method and most second language acquisition studies comparing production and comprehension training lies with the production task employed. A meta-analysis indicates that in second language acquisition research, a wide range of production tasks is included in what is considered production-based instruction (i.e., anything from mechanical grammar drills to free production) without reference to vastly different task demands in these types of production [7]. This method, while balancing task demands, captures a key difference between speaking and listening in real life situations: the strict recall of information required for production, while comprehension only requires recognition.

This method could be used for comparing the effects of comprehension versus production training on different language phenomena in languages of interest. It has been successfully used to study grammatical agreement learning in an artificial language [8] and German gender agreement in noun phrases [9]. Because the materials of Hopman and MacDonald are publicly available at https://osf.io/74kqe, the protocol presented in this paper follows their implementation in PsychoPy [10], but the basic contrast between the active production and comprehension trials presented here could be implemented for other languages, other grammatical phenomena, and using different experimental software.

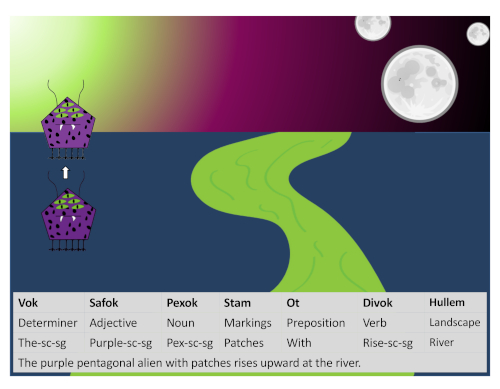

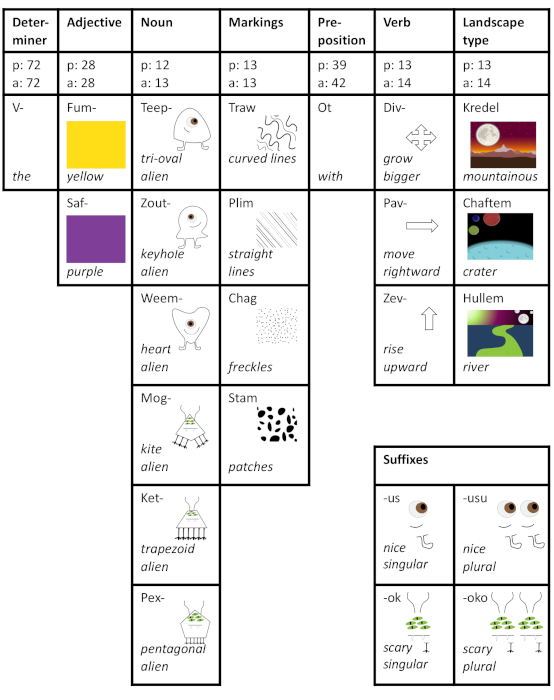

In the experiment reported here, participants learn an artificial language that describes a visual world of various aliens moving around on several landscapes. A full sentence in this language consists of 7 words of different word types that always occur in a fixed word order (Figure 1). The full language consists of 18 content words and two function words (Figure 2), and the five content word categories each have 2–6 different words, leading to 432 different possible full sentences. Four words in each full sentence (i.e., determiner, color adjective, alien noun, verb) take the same suffix. There are two different types of aliens: scary-looking aliens, characterized by a multitude of eyes and legs, sharp teeth, and angular shapes, and kind-looking aliens, characterized by a single big eye, two legs, a friendly smile, and rounded shapes. Note that for counterbalancing purposes, the experiment program randomly assigns visual meanings to words within each category. One consistent mapping is used in the figures and demonstrations throughout this article, but another participant may learn, for example, that ‘saf’ means yellow and ‘fum’ means purple.
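The sentence count above can be checked with a few lines of Python. This is only an illustrative sketch: the sizes for aliens (6), colors (2), and patterns (4) are read off descriptions elsewhere in this article, while the split of three verbs and three landscapes is an inference from the stated totals, not a figure quoted in the text.

```python
from math import prod

# Number of words per content word category.
# Aliens, colors, and patterns follow descriptions in the article;
# verbs = 3 and landscapes = 3 are INFERRED so that the totals match.
CONTENT_CATEGORY_SIZES = {
    "alien noun": 6,
    "color adjective": 2,
    "pattern": 4,
    "verb": 3,        # assumption (inferred)
    "landscape": 3,   # assumption (inferred)
}

# Total vocabulary of content words across all categories.
n_content_words = sum(CONTENT_CATEGORY_SIZES.values())

# One word is drawn from each category per sentence, so the number of
# distinct full sentences is the product of the category sizes.
n_sentences = prod(CONTENT_CATEGORY_SIZES.values())

print(n_content_words, n_sentences)
```

Under these assumed category sizes, the totals come out to 18 content words and 432 sentences, matching the numbers given above.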

Figure 1: Example sentence from the alien language. This is an example of a full sentence in the alien language describing a video of the alien rising upwards. Below the sentence are the word types of all of the seven words in the sentence, a word-by-word translation into English and the full English translation as a sentence. The suffix ‘ok’ on four of the words in the sentence means this is an alien from the scary-looking (-sc) category, and that it is singular (-sg). Note that participants never see the language written out; they only hear it. This figure is adapted from Hopman and MacDonald [8].

Figure 2: Overview of the full alien language. The full alien language has seven different word categories, with 1–6 words per category. The second row indicates, for each word category, how often each word from that category is practiced during passive (p) and active (a) training. For each of the 18 content words, the paired visual meaning is illustrated. For all 20 words, an English translation is given. Four of the 7 word categories (i.e., determiner, color adjective, alien noun, and verb) take a suffix, which is indicated by a ‘-’ at the end of those words. In the bottom right corner, the suffixes, which express both plurality and alien type, are illustrated. This figure is adapted from the Supplementary Materials of Hopman and MacDonald [8].

Participants can learn several grammatical regularities of interest in this language. First, there is the fixed word order. Second, the four (identical) suffixes that occur in a full sentence encode meaning in two different ways (see inset in Figure 2). The suffix encodes plurality, denoting whether 1 or 2 aliens are present in the visual scene: short suffixes ‘ok’ and ‘us’ indicate singular; longer suffixes ‘oko’ and ‘usu’ indicate plural. The suffix also encodes alien type, with ‘ok’ and ‘oko’ indicating that the alien in the image is scary-looking, and ‘us’ and ‘usu’ that it is kind-looking. Third, the exposure throughout the training paradigm is set up so that scary-looking aliens usually (on 83% of trials) occur with spotted patterns (freckles or patches) and only rarely (17% of trials) occur with striped patterns (curved or straight lines). The opposite is true for the kind-looking aliens; they usually occur with striped patterns and rarely with spotted patterns. This is also a language regularity because it creates a higher transition probability between, for example, the suffixes for scary-looking aliens and the words for spotted patterns. The experiment is set up so that there are no other co-occurrence regularities (e.g., each alien occurs equally often in each of the two colors throughout both training and testing).
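The suffix system described above amounts to a small lookup table. The sketch below is illustrative only and is not taken from the published experiment code; the function names are hypothetical, and the stem ‘saf’ is used simply because it is mentioned earlier as one of the words of the language.

```python
# Suffix for each combination of alien type and number, as described in the text.
SUFFIXES = {
    ("scary", "singular"): "ok",
    ("scary", "plural"): "oko",
    ("kind", "singular"): "us",
    ("kind", "plural"): "usu",
}


def suffix_for(alien_type, n_aliens):
    """Return the suffix for a scene with 1 or 2 aliens of the given type."""
    number = "singular" if n_aliens == 1 else "plural"
    return SUFFIXES[(alien_type, number)]


def inflect(stem, alien_type, n_aliens):
    """Attach the agreement suffix to a suffix-taking word stem."""
    return stem + suffix_for(alien_type, n_aliens)


def decode_suffix(word):
    """Recover (alien type, number) from an inflected word, or None.

    Longer suffixes are checked first so that a plural form is never
    mistaken for a singular one.
    """
    by_length = sorted(SUFFIXES.items(), key=lambda item: -len(item[1]))
    for meaning, suffix in by_length:
        if word.endswith(suffix):
            return meaning
    return None


print(inflect("saf", "scary", 1))
print(decode_suffix("safusu"))
```

Because the same suffix appears on the determiner, color adjective, alien noun, and verb, calling `inflect` with the same alien type and number on each of those four stems reproduces the agreement pattern of a full sentence.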

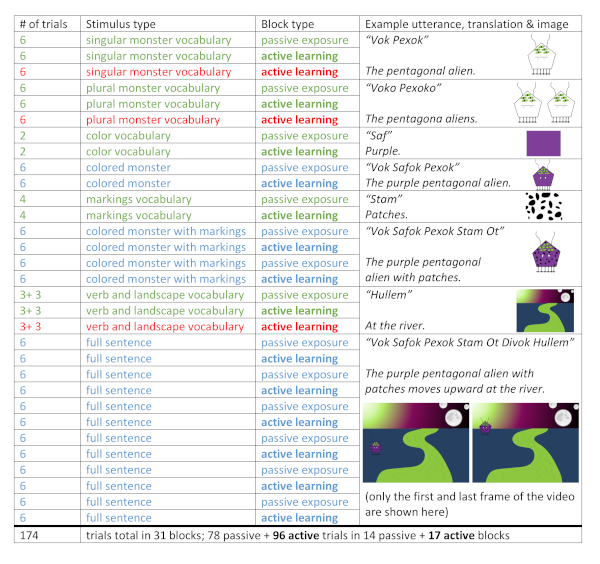

During training, participants alternate between blocks of passive exposure trials and active training trials. During passive exposure trials, participants can learn about the language by simply watching images and listening to the language. During active training blocks, participants learn the language by actively practicing it in either active production or active comprehension training trials, depending on the condition they are in. The very first block of passive trials shows each of the 6 aliens once. Then, participants get an active block in which they practice with those same 6 black and white line drawings of the aliens. Gradually, new vocabulary is introduced, and the phrases get longer and the images more complicated (Figure 3). Throughout, each passive block is followed by an active block to practice with the same types of phrases and images, and production and comprehension participants learn the language in the exact same order with the exact same training structure; the correct target phrase and image pairing on active training trials is identical. Thus, the only difference between the two conditions is that production participants practice speaking the language on their active trials, whereas comprehension participants practice understanding the language on their active trials. After training, participants in both conditions get a set of identical comprehension tests that tap into their understanding of the vocabulary and the different grammatical regularities present in the alien language.

Figure 3: Structure of the language training. Every line in this figure represents a block of training trials, with the number of trials in the block as well as the type of training (i.e., active training or passive exposure) noted. For example, the very first block of six trials shows each of the 6 different aliens once. The green lines indicate blocks that introduce new vocabulary. The number of trials in these blocks was determined by the number of different stimuli in that vocabulary category. For example, the color vocabulary block had two trials because there were two color words. The blue lines indicate blocks in which participants practiced combining multiple aspects of the language. The number of trials in these blocks was always six because there was one trial per alien to make sure that participants got equal amounts of practice with all of the different aliens. The red lines indicate an extra active learning block that was added after pilot training to help participants learn the words whenever six new vocabulary words were introduced in one passive learning block (e.g., aliens singular and plural, verbs and landscapes). This figure is adapted from the Supplementary Materials of Hopman and MacDonald [8].

Protocol

The following procedures were approved by the University of Wisconsin-Madison Social and Behavioral Science Institutional Review Board, and informed consent was obtained from each participant.

1. Materials

- On the computer that will be used for the experiment, download the experiment files by going to https://osf.io/74kqe/files/ in any browser.

- At the top of the list, click on the parent folder called ‘OSF Storage (United States)’.

- Click on the ‘Download as zip’ button near the top of the screen to download the entire experiment.

- Unpack all of the experiment materials by unzipping the downloaded .zip file.

- Unpack ‘slides.zip’. The folder ‘slides’ should now be populated with 11,520 image files ending in ‘.png’. If, when unzipping, Windows creates a subfolder of ‘slides’ named ‘slides’, copy all the image files from ‘slides/slides’ into the main ‘slides’ folder.

- Open ‘foldermaker.py’ in PsychoPy (version 1.83.04) and click ‘Run’. The folder ‘data’ should now be populated with subfolders ‘s1’ through ‘s399’, each of which should have subfolders called ‘errortrials’ and ‘recordings’.

- Open ‘testgen.py’ in PsychoPy and click ‘Run’. Open ‘data/s1’ to check that it (and all other data subfolders) now has a file called ‘fulltriallist.txt’.

- Open ‘soundgen.py’ and click ‘Run’. The folder ‘sounds/combined’ should now be populated with 2,057 sound files ending in ‘.wav’.

- Prepare the experimental computer for participants.

- Plug headphones into the computer.

- Connect an external microphone to the computer.

- Write ‘=’ and ‘≠’ on the sticky part of a post-it and use scissors to cut out the two symbols to the size of a keyboard key. Place the =-sticker on the ‘L’ key of the keyboard and the ≠-sticker on the ‘F’ key of the keyboard.
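The file counts and folder names mentioned in the setup steps above can be verified automatically rather than by eye. The following is a minimal sketch, not part of the published materials; the function name `check_setup` is hypothetical, and it assumes that ‘slides’, ‘sounds/combined’, and ‘data’ sit directly inside the unzipped experiment folder.

```python
from pathlib import Path


def check_setup(root, n_png=11520, n_wav=2057, subjects=range(1, 400)):
    """Return a list of problems found; an empty list means all checks passed.

    The default counts and folder names come from the protocol text.
    The layout relative to `root` is an assumption of this sketch.
    """
    root = Path(root)
    problems = []

    # Image files produced by unpacking 'slides.zip'.
    found_png = len(list((root / "slides").glob("*.png")))
    if found_png != n_png:
        problems.append(f"slides: expected {n_png} .png files, found {found_png}")

    # Sound files produced by running 'soundgen.py'.
    found_wav = len(list((root / "sounds" / "combined").glob("*.wav")))
    if found_wav != n_wav:
        problems.append(f"sounds/combined: expected {n_wav} .wav files, found {found_wav}")

    # Per-subject folders from 'foldermaker.py' and trial lists from 'testgen.py'.
    for number in subjects:
        folder = root / "data" / f"s{number}"
        for sub in ("errortrials", "recordings"):
            if not (folder / sub).is_dir():
                problems.append(f"missing folder: {folder / sub}")
        if not (folder / "fulltriallist.txt").is_file():
            problems.append(f"missing file: {folder / 'fulltriallist.txt'}")

    return problems
```

Calling `check_setup(".")` from inside the experiment folder and printing the returned list gives a quick report of anything that still needs to be generated before running participants.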

2. Artificial language learning experiment

- Test whether the experiment works by running members of the research team through both conditions.

- Open ‘experiment.py’ in PsychoPy and click ‘Run’. In the first pop-up, enter any subject number bigger than 1 to use for pilot participants. For example, in this test, 2 was used for the Comprehension Condition pilot participants and 3 for the Production Condition pilot participants.

NOTE: The number 1 is reserved for programming the experiment, and so will not run the full experiment. Only use this subject number if the code of the experiment will be changed.

- In the second pop-up, enter the condition number. Entering ‘1’ will run the Comprehension Training version of the experiment; entering ‘2’ will run the Production Condition of the experiment.

NOTE: Steps 2.4–2.7 explaining the language training are also illustrated in the accompanying file ‘Demo1training.pptx’. Details (e.g., how long each image appears on the screen) are described in Supplementary File 1. While the protocol provided is detailed enough to understand the procedure and the results, it is best to review the supplemental materials for a more in-depth understanding of the method to run or adapt this protocol.

- Perform a passive exposure trial by having participants in both conditions start learning the artificial language with the names of the 6 different aliens in 6 passive exposure trials (one per alien), where the participant is instructed to “listen to the language and watch the pictures on the screen”.

- Perform an active Comprehension trial (i.e., Comprehension Condition only). Prompt: Have the participant see an image on the screen and after 0.5 s the audio file with the target phrase is played. Response: Instruct the participant to “indicate by pressing the button whether the audio and the picture match or not” (i.e., ‘=’ for match and ‘≠’ for mismatch). The subject will then see a red cross if the response was incorrect and a green checkmark if it was correct. See Figure 4 for an illustration of active comprehension trials with examples of correct and incorrect responses for both match and mismatch trials.

- Perform the Active Production trial (i.e., Production Condition only). Prompt: Have the participant see the target image on the screen with a microphone icon below it. Response: Instruct the participant to “describe the picture out loud in the alien language” and press ‘enter’ to indicate that they are done speaking and save the microphone recording. See Figure 4 for an illustration of active production trials with examples of a correct and incorrect response.

- Perform correct pairing (identical for both conditions). In both conditions, have the participants receive the correct pairing right after responding on their own and instruct them to “pay attention to the correct pairing”. Have participants see the target image and hear the target phrase that correctly describes it. Make it clear that a green square around the image indicates a correct pairing, to ensure that participants in both conditions learn from the correct pairing irrespective of their own performance in the active task.

- Refer to Figure 3 and Supplementary File 1 for details about how participants learn the artificial language by alternating the passive and active training tasks described here to progress from single word to full sentence learning.

NOTE: Steps 2.9 and 2.10, which describe the different types of comprehension tests, are also illustrated in the accompanying file ‘Demo2testing.pptx’. Different types of trials (e.g., word order errors, grammatical agreement errors, correct sentences) are described in more detail in Supplementary File 1.

Figure 4: Active training trials. All active training trials consisted of three parts: a prompt for the participant, the participant’s response, and finally a correct pairing of the target image and the target phrase describing it. Note that the final part of the active learning trial, the correct pairing, was identical for all types of active learning trials. There were two types of active comprehension trials: trials in which the initial image matched the target phrase (match trials) and trials in which the initial image did not match the target phrase (mismatch trials). For each trial type, examples of a correct response and an incorrect response by the participant are shown.

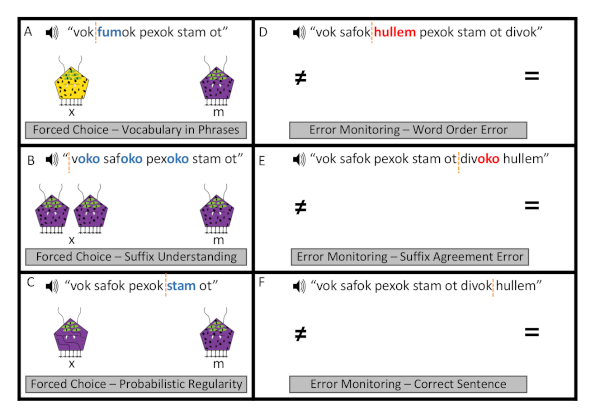

- In a Forced Choice Test trial, have the participant see two different images of the same type on the left and right sides of the screen, with ‘X’ on the screen below the left picture and ‘M’ on the screen below the right picture. The participant will hear an auditory description that matches one of the two pictures. Instruct them to “indicate by pressing either ‘M’ or ‘X’ which of the two pictures you think matches the description”. The buttons can be pressed before the end of the audio, and the trial ends immediately upon pressing the button.

NOTE: See Figure 5A–C for examples of three different types of forced choice test trials. There is a single word vocabulary test consisting of 18 items used as a prescreen, followed by the main forced choice test of 66 trials, which mixes the three trial types illustrated in Figure 5A–C.

- In Error Monitoring Test trials (i.e., a type of grammaticality judgment), have the participant hear a sentence and instruct them to “indicate whether the phrase is grammatical or not by pressing the appropriate button (‘=’ for grammatical, ‘≠’ for ungrammatical) as fast and accurately as possible”. Sentence presentation ends immediately on pressing the button. There are 124 error monitoring test trials in total, of three different types, all of which are intermixed and played in a random order.

NOTE: See Figure 5D–F for illustrations of all different types of error monitoring test trials.

Figure 5: Example test trials. Example forced choice test and error monitoring test trials of different types. In forced choice test trials (A–C), the participants heard a phrase and saw two images of the same type on the screen. By pressing a button they indicated which of the two images they believed was described by the auditory phrase. For each example forced choice trial, the critical word that allowed a participant to decide between the two pictures is printed in blue bold face. In error monitoring test trials (D–F) participants heard a full sentence and indicated by pressing a button whether or not they believed it was a grammatical sentence in the alien language. In the example trials with errors (D,E), the errors are printed in red bold face. In trials with grammatically correct sentences (e.g., F), participants did not know the correct answer until the final word of the sentence. For all different trial types, reaction time was counted at the start of the critical word that allowed the correct response, and this point in the phrase is indicated with an orange dotted line. Note that participants in this experiment never saw the language written out; they only heard these phrases. This figure is adapted from Hopman and MacDonald [8].

- After running the two pilot participants, check that the data log saved and open a log in a spreadsheet program. For example, check that the data for participant 2 is in the folder ‘s2’ and is called ‘log2.txt’. To open a .txt file in Excel, for example, right-click on File Name|Open With|Excel.

- Scroll to the bottom and check that there is a separate line on the log file for each trial. Specifically, check that at the bottom of the file column 3 (trialnr) lists ‘382’ for the final Error Monitoring trial.

- Check that below the final trial, the log lists how many test trials of each type the participant got correct. For example, check that there is a number between 0 and 18 listed below ‘total nr of correct vocabulary test trials was’.
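The check on the final trial number could also be scripted. The sketch below is hypothetical and rests on assumptions that the protocol does not confirm: that the log is tab-delimited, and that summary lines below the trials do not have a plain integer in the third column. Only the position of the trial number (column 3) and the expected final value (382) come from the steps above.

```python
def last_trial_number(lines, delimiter="\t", trialnr_col=2):
    """Return the last integer found in the trial-number column, or None.

    `trialnr_col` is zero-based, so 2 means the third column.
    The tab delimiter is an ASSUMPTION about the log format.
    """
    last = None
    for line in lines:
        fields = line.rstrip("\n").split(delimiter)
        # Header and summary rows are skipped because their third field
        # is missing or is not a plain integer.
        if len(fields) > trialnr_col and fields[trialnr_col].strip().isdigit():
            last = int(fields[trialnr_col])
    return last


# Synthetic example rows (made up for illustration, not real data).
example = [
    "subject\tcondition\ttrialnr\tcorrect\n",
    "2\t1\t381\t1\n",
    "2\t1\t382\t0\n",
    "total nr of correct vocabulary test trials was\t17\n",
]
print(last_trial_number(example))
```

For a real log, the lines could be read with `open("data/s2/log2.txt").readlines()` and the result compared against 382; if the delimiter differs, pass it via the `delimiter` argument.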

3. Running the experiment

- Recruit participants. In this study, 125 native English speakers were recruited from a psychology extra credit research pool. Based on post-hoc power simulations (see Results), it is recommended to test at least 70 participants per condition, and more if an interaction between learning condition and within-subject predictors (e.g., item type) is of interest.

- Randomly assign participants to either the comprehension or the production condition, making sure to assign approximately the same number of participants to each condition.

- Greet the participant and have them read and sign a consent form for the study.

- Instruct the participant to leave all items, including their phone, with the experimenter outside of the soundproof room with the experimental computer, headphones, and microphone.

- Instruct the participant.

- Tell them to put on the headphones and keep them on during the entire task.

- Tell them that they will be learning a language and that all of the specific instructions will appear on the computer screen.

- Point out which keys they will be using (i.e., the keys with ‘=’ and ‘≠’ stickers, ‘enter’ key, ‘M’ and ‘X’ keys) and that this information will also appear on the screen.

- If the participant is in the Production condition, set out the microphone and tell them to speak into it when prompted.

- Start the experiment by opening ‘experiment.py’ in PsychoPy and clicking ‘Run’. Then, enter the appropriate subject number in the first pop-up and the condition number in the second pop-up. Then, tell the participant that they will be able to go through the experiment in a self-guided manner, as described in part 2 of this protocol.

- When the participant is done with the experiment, thank them for participating and answer any questions they might have about the study.
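For the random assignment step in this section, one simple way to keep the two groups the same size is to build a balanced list of condition codes and shuffle it. This is a sketch under stated assumptions, not the authors' procedure: the function name and the choice of starting subject number are hypothetical, while the condition codes (1 for comprehension, 2 for production) follow the second pop-up described in part 2.

```python
import random

COMPREHENSION = 1  # condition code entered in the second pop-up
PRODUCTION = 2


def assign_conditions(subject_numbers, seed=None):
    """Map each subject number to a condition, with group sizes differing by at most 1."""
    rng = random.Random(seed)
    subjects = list(subject_numbers)
    # Alternate the two codes to get a balanced list, then shuffle the order.
    conditions = [COMPREHENSION if i % 2 == 0 else PRODUCTION
                  for i in range(len(subjects))]
    rng.shuffle(conditions)
    return dict(zip(subjects, conditions))


# Example: 140 participants numbered from 4 upward (1 is reserved and
# 2 and 3 were used for pilots in this protocol).
assignment = assign_conditions(range(4, 144), seed=2024)
counts = {code: list(assignment.values()).count(code)
          for code in (COMPREHENSION, PRODUCTION)}
print(counts)
```

Fixing the seed makes the assignment list reproducible, so it can be generated once before data collection and followed in order as participants arrive.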

4. Data processing

- Trim the dataset according to prespecified criteria. Record how much data are removed and why.

- If any participants behaved oddly or did not complete the experiment, remove their data. In this study, data from three participants who did not complete the experiment were removed.

- If any participants did not meet a prespecified criterion, remove their data. For example, based on pilot testing, the present study set a criterion of at least 15 out of 18 correct responses on the single word vocabulary test.

- Remove all trials where participants gave an incorrect response for reaction time analyses, because standard practice is to only analyze trials where participants give the correct response. Also remove outlier trials, defined in this study as trials in which a participant was slower than their own mean reaction time + three standard deviations. Finally, remove any trials with negative reaction time (if a more precise reaction time is calculated as in step 8.3 of Supplementary File 1). In this study, this left 78% of all test trial data to be used in the reaction time analysis.
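The trimming rules in this section can be expressed compactly in code. The sketch below is illustrative rather than the authors' analysis script (their analyses were run in R); the trial representation and function names are hypothetical. One point is an explicit assumption: the text does not say which trials enter the mean and standard deviation, so here they are computed over a participant's correct trials.

```python
from statistics import mean, stdev


def passes_vocab_criterion(n_correct, minimum=15):
    """Participant-level criterion: at least 15 of 18 vocabulary items correct."""
    return n_correct >= minimum


def trim_rt_trials(trials):
    """Trim one participant's test trials for the reaction time analysis.

    `trials` is a list of dicts with keys 'correct' (bool) and 'rt' (seconds).
    Steps: keep correct trials, drop trials slower than mean + 3 SD,
    and drop trials with a negative reaction time.
    ASSUMPTION: mean and SD are computed over the correct trials.
    """
    correct = [t for t in trials if t["correct"]]
    if len(correct) < 2:
        # Too few trials to estimate a standard deviation.
        return [t for t in correct if t["rt"] >= 0]
    rts = [t["rt"] for t in correct]
    cutoff = mean(rts) + 3 * stdev(rts)
    return [t for t in correct if 0 <= t["rt"] <= cutoff]


# Synthetic example (made-up values, not real data).
example_trials = (
    [{"correct": True, "rt": 1.0} for _ in range(20)]
    + [{"correct": True, "rt": 10.0}]    # slow outlier
    + [{"correct": False, "rt": 1.0}]    # incorrect response
    + [{"correct": True, "rt": -0.2}]    # negative reaction time
)
kept = trim_rt_trials(example_trials)
print(len(kept), "of", len(example_trials), "trials kept")
```

Recording `len(kept)` against the original trial count for every participant gives the proportion of data retained, which should be reported alongside the reasons for removal.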

Results

Average scores on the single word vocabulary test did not differ between the Production and Comprehension conditions (t(120) < 1). Data from 18 participants (8 comprehension, 10 production) who did not meet the vocabulary test criterion were removed. All further analyses reported here include data from the remaining 52 comprehension and 52 production participants. The results did not change when the data from these 18 participants were included.

Participants trained in the Production condition re...

Discussion

A procedure for studying the role of comprehension versus production practice in learning a novel language is presented. As reported earlier by Hopman and MacDonald [8], production-focused training led to better learning of an artificial language than comprehension-focused training. In follow-up research, there is accumulating evidence that production participants outperform comprehension participants in both comprehension and production accuracy...

Disclosures

The authors have nothing to disclose.

Acknowledgements

EWMH and MCM created this method and conducted the original experiment. ML wrote the first draft of this paper under supervision of EWMH. EWMH rewrote the paper based on editor and reviewer suggestions. All authors provided feedback and edits on all submitted versions of the manuscript.

Materials

| Name | Company | Catalog Number | Comments |
| --- | --- | --- | --- |
| Browser | - | - | Use for downloading the experiment onto the computer. |
| Desktop Computer | - | - | Use for presenting the experiment and for analyzing data. |
| Experimental software | PsychoPy | - | PsychoPy version 1.83.04 is used for running the experiment; it is available on GitHub. |
| Headphones | LyxPro | - | Use for playing auditory stimuli to participants. Specifically, our lab currently uses HAS-10 over-ear open back studio headphones. |
| Microphone | Blue | - | Use for recording production participants' training trials. Specifically, our lab uses Snowball microphones. |
| Software to open spreadsheets | Microsoft Excel | - | Use for a quick view of data logs. |
| Soundproof experiment room | - | - | Use for running participants. |
| Statistical analysis software | R | - | Use for analyzing accuracy and reaction time data. |
| Stickers | Post-it | - | Use for marking keyboard keys used in the experiment. |

References

1. Krashen, S. D., Terrell, T. The natural approach. (1983).

2. Krashen, S. D. Explorations in language acquisition and use. (2003).

3. MacLeod, C. M., Bodner, G. E. The production effect in memory. Current Directions in Psychological Science. 26 (4), 390-395 (2017).

4. Craik, F. I. M., Tulving, E. Depth of processing and the retention of words in episodic memory. Journal of Experimental Psychology: General. 104 (3), 268-294 (1975).

5. Carter, M. J., Ste-Marie, D. M. Not all choices are created equal: Task-relevant choices enhance motor learning compared to task-irrelevant choices. Psychonomic Bulletin & Review. 24 (6), 1879-1888 (2017).

6. Karpicke, J. D., Roediger, H. L. The critical importance of retrieval for learning. Science. 319 (5865), 966-968 (2008).

7. Shintani, N., Li, S., Ellis, R. Comprehension-based versus production-based grammar instruction: A meta-analysis of comparative studies. Language Learning. 63 (2), 296-329 (2013).

8. Hopman, E. W., MacDonald, M. C. Production practice during language learning improves comprehension. Psychological Science. 29 (6), 961-971 (2018).

9. Keppenne, V., Hopman, E. W. M., Jackson, C. N. Production training benefits comprehension of grammatical gender in L2 German. Talk presented at the International Symposium of Bilingualism. (2019).

10. Peirce, J. W. PsychoPy-Psychophysics software in Python. Journal of Neuroscience Methods. 162 (1-2), 8-13 (2007).

11. Barr, D. J., Levy, R., Scheepers, C., Tily, H. J. Random effects structure for confirmatory hypothesis testing: Keep it maximal. Journal of Memory and Language. 68 (3), 255-279 (2013).

12. Molenberghs, G., Verbeke, G. Likelihood ratio, score, and Wald tests in a constrained parameter space. American Statistician. 61 (1), 22-27 (2007).

13. Luke, S. G. Evaluating significance in linear mixed-effects models in R. Behavior Research Methods. 49 (4), 1494-1502 (2017).

14. Westfall, J., Kenny, D. A., Judd, C. M. Statistical power and optimal design in experiments in which samples of participants respond to samples of stimuli. Journal of Experimental Psychology: General. 143 (5), 2020-2045 (2014).

15. Brysbaert, M., Stevens, M. Power analysis and effect size in mixed effects models: a tutorial. Journal of Cognition. 1 (1), 1-20 (2018).

16. Cohen, J. Statistical power analysis for the behavioral sciences, 2nd edition. (1988).

17. Shintani, N., Ellis, R. The incidental acquisition of English plural -s by Japanese children in comprehension-based and production-based lessons: a process-product study. Studies in Second Language Acquisition. 32 (4), 607-637 (2010).

18. Hopman, E. W. M., Zettersten, M. Immediate feedback is critical for learning from your own productions. Poster presented at Psycholinguistics in Flanders. (2018).

19. de Leeuw, J. R. jsPsych: A JavaScript library for creating behavioral experiments in a web browser. Behavior Research Methods. 47 (1), 1-12 (2015).

Copyright © 2025 MyJoVE Corporation. All rights reserved