
Method Article

Applying Incongruent Visual-Tactile Stimuli during Object Transfer with Vibro-Tactile Feedback


Summary

We present a protocol to apply incongruent visual-tactile stimuli during an object transfer task. Specifically, during block transfers, performed while the hand is hidden, a virtual presentation of the block shows random occurrences of false block drops. The protocol also describes adding vibrotactile feedback while performing the motor task.

Abstract

The application of incongruent sensory signals that involve disrupted tactile feedback is rarely explored, particularly in the presence of vibrotactile feedback (VTF). This protocol aims to test the effect of VTF on the response to incongruent visual-tactile stimuli. The tactile feedback is acquired by grasping a block and moving it across a partition. The visual feedback is a real-time virtual presentation of the moving block, acquired using a motion capture system. The congruent feedback is the reliable presentation of the movement of the block, so that the subject feels that the block is grasped and sees it move along the path of the hand. The incongruent feedback appears when the displayed movement of the block deviates from the actual movement path, so that the block seems to drop from the hand while it is actually still held by the subject, thereby contradicting the tactile feedback. Twenty subjects (age 30.2 ± 16.3 years) repeated 16 block transfers while their hand was hidden. The transfers were repeated with and without VTF (a total of 32 block transfers). Incongruent stimuli were presented randomly twice within the 16 repetitions in each condition (with and without VTF). Each subject was asked to rate the difficulty level of performing the task with and without the VTF. There were no statistically significant differences in the length of the hand paths and durations between transfers recorded with congruent and incongruent visual-tactile signals, with or without the VTF. The perceived difficulty level of performing the task with the VTF significantly correlated with the normalized path length of the block with VTF (r = 0.675, p = 0.002). This setup is used to quantify the additive or reductive value of VTF during motor function that involves incongruent visual-tactile stimuli. Possible applications are prosthetics design, smart sport-wear, or any other garments that incorporate VTF.

Introduction

Illusions are exploitations of the limitations of our senses, as we mistakenly perceive information that deviates from objective reality. Our perceptual inference is based on our experience in interpreting sensory data and on our brain’s calculation of the most reliable estimate of reality in the presence of ambiguous sensory input1.

A sub-category in the research of illusions is one that combines incongruent sensory signals. The illusion that results from incongruent sensory signals originates from the constant multisensory integration performed by our brain. While there are numerous studies concerning incongruence in visual-auditory signals, incongruence in other sensory pairs is less frequently reported. This difference in the number of reports might be attributed to the relative simplicity of designing a setup that incorporates visual-auditory incongruence. Nevertheless, studies of other sensory pairs offer useful insights. For example, the effect of incongruent visual-haptic signals on visual sensitivity2 was studied using a system where the visual and haptic stimuli were matched in spatial frequency, while the haptic and visual orientations were either identical (congruent) or orthogonal (incongruent). In another study, the effect of incongruent visual-tactile motion stimuli on the perceived visual direction of motion was investigated using a visual-tactile cross-modal integration stimulator with a lighted panel that presents visual stimuli and a tactile stimulator that presents tactile motion stimuli with arbitrary motion direction, speed, and indentation depth in the skin3. It was suggested that we internally represent both the statistical distribution of the task and our sensory uncertainty, combining them in a manner consistent with a performance-optimizing Bayesian process4.

Virtual reality has made it easy to manipulate the visual feedback presented to a subject. Several studies used multisensory virtual reality to misalign visual and somatosensory information. For example, virtual reality was recently used to induce embodiment in a child’s body, with or without activation of a child-like voice distortion5. In another example, the visual presentation of the walking distance during self-motion was extended and was therefore incongruent with the travel distance felt by body-based cues6. A similar virtual reality setup was designed for a cycling activity7. None of the aforementioned studies, however, combined an interference to one of the senses with the incongruent signal. We chose the tactile sense to receive such a disturbance.

Our tactile sensory system provides direct evidence as to whether an object is being grasped. We therefore expect that when direct visual feedback is distorted or unavailable, the role of the tactile sensory system in object manipulation tasks will be prominent. However, what would happen if the tactile sensory channel was also disturbed? This is a possible outcome of using vibrotactile feedback (VTF) for sensory augmentation, as it captures the attention of the individual8. Today, augmented feedback of different modalities is used as an external tool, meant to enhance our internal sensory feedback and improve performance during motor learning, in sport and in rehabilitation settings9.

The study of incongruent visual-tactile stimuli may enhance our understanding of how sensory input is perceived. In particular, quantifying the additive or reductive value of VTF during motor function that involves incongruent visual-tactile stimuli can assist in future prosthetics design, smart sport-wear, or any other garments that incorporate VTF. Since amputees are deprived of tactile stimuli at the distal aspect of their residuum, their daily usage of VTF embedded in the prosthesis (to convey knowledge of grasping, for example) might influence how they perceive visual feedback. Understanding the mechanism of perception under these conditions will allow engineers to refine VTF modalities to reduce any negative effect on VTF users.

We aimed to test the effect of VTF on the response to incongruent visual-tactile stimuli. In the presented setup, the tactile feedback is acquired by grasping a block and moving it across a partition; the visual feedback is a real-time virtual presentation of the moving block and the partition (acquired using a motion capture system). Since the subject is prevented from seeing the actual hand movement, the only visual feedback is the virtual one. The congruent feedback is the reliable presentation of the movement of the block, so that the subject feels that the block is grasped and sees it move along the path of the hand. The incongruent feedback appears when the displayed movement of the block deviates from the actual movement path, so that the block seems to drop from the hand while it is actually still held by the subject, thereby contradicting the tactile feedback. Three hypotheses were tested: when moving an object from one place to another using virtual visual feedback, (i) the path and duration of the object’s transfer motion will increase when incongruent visual-tactile stimuli are presented, (ii) this change will increase when incongruent visual-tactile stimuli are presented and VTF is activated on the moving arm, and (iii) a positive correlation will be found between the perceived difficulty level of performing the task with the VTF activated and the path and duration of the object’s transfer motion. The first hypothesis originates from the aforementioned literature reporting that various modalities of incongruent feedback affect our responses. The second hypothesis relates to the previous finding that VTF captures the attention of the individual. For the third hypothesis, we assumed that subjects who were more disturbed by the VTF would trust the virtual visual feedback more than their tactile sense.
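The difference between congruent and incongruent feedback can be illustrated with a minimal sketch. The source does not describe how the visualization plug-in generates the false drop, so everything here is an assumption made for illustration: the function name `displayed_path`, the free-fall model, and the `floor_z` parameter are hypothetical. Only the 100 Hz frame rate comes from the protocol.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres

GRAVITY = 9.81         # m/s^2, used by the assumed free-fall model
FRAME_RATE_HZ = 100.0  # capture rate stated in the protocol


def displayed_path(actual: List[Point],
                   drop_frame: Optional[int],
                   floor_z: float = 0.0) -> List[Point]:
    """Return the block positions shown on screen.

    drop_frame=None gives congruent feedback (screen mirrors the real block).
    Otherwise the virtual block detaches at drop_frame and falls straight
    down (hypothetical model), while the real block keeps moving.
    """
    shown: List[Point] = []
    for i, (x, y, z) in enumerate(actual):
        if drop_frame is None or i < drop_frame:
            shown.append((x, y, z))  # congruent: show the measured position
        else:
            x0, y0, z0 = actual[drop_frame]
            t = (i - drop_frame) / FRAME_RATE_HZ
            fall_z = max(floor_z, z0 - 0.5 * GRAVITY * t * t)
            shown.append((x0, y0, fall_z))  # incongruent: virtual drop
    return shown
```

With `drop_frame=None` the displayed and measured paths are identical; with an integer `drop_frame` they diverge from that frame onward, which is the contradiction between vision and touch that the protocol relies on.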


Protocol

The following protocol follows the guidelines of the human research ethics committee of the university. See the Table of Materials for reference to the commercial products.

NOTE: After receiving approval of the university ethics committee, 20 healthy individuals (7 males and 13 females, mean and standard deviation [SD] of age 30.2 ± 16.3 years) were recruited. Each subject read and signed an informed consent form pretrial. Inclusion criteria were right-handed individuals aged 18 or above. Exclusion criteria were any neurological or orthopaedic impairment affecting the upper extremities or uncorrected sight impairment. The subjects were naïve to the occurrences of incongruent visual-tactile feedback.

1. Pre-trial preparation

- Use the wooden box from the box and blocks test10. The dimensions of the box are 53.7 cm x 26.9 cm x 8.5 cm, with a 15.2 cm high partition in the middle. Place a soft sponge layer on both sides of the partition. Place six passive reflective markers on the aspect opposite to the screen, at the four corners and on both ends of the partition (Figure 1a).

- Use a 3D-printer to manufacture a cube with the dimensions of 2.5 cm x 2.5 cm x 2.5 cm, attached to a base with the dimensions of 4.5 cm x 4.5 cm x 1 cm. Before printing, cut each corner of the base to create a square of size 1 cm x 1 cm at each corner (Figure 1a). Attach passive reflective markers on the four corners of the base.

- Place a large screen approximately 1.5 m in front of a table, so that a subject, standing behind the table, is approximately 2 m from the screen. Place the box on the table, 10 cm from the edge opposite to the screen.

- Use a 6-camera motion capture system, activated at 100 Hz, with a plug-in to visualize the partition and the movement of the block in real-time (Figure 1). Calibrate the motion capture system, according to the guidelines of the manufacturer, so that the block and partition of the box are recognized as rigid bodies.

NOTE: Proper calibration of the motion capture system and usage of small markers that are firmly attached to the block and partition are required to maintain the illusion.

2. Placing the vibrotactile feedback system on the subject

NOTE: The VTF system described herein was previously published11,12,13,14.

- Instruct the subject to remove any wristwatch, bracelets, and rings. Attach the VTF system controller to the forearm of the subject (Figure 2, left image).

- Attach two thin and flexible force sensors to the palmar aspect of the thumb and index fingers over a thin spongy layer (Figure 2, right image).

- Place a cuff on the skin of the upper arm of the subject (Figure 2, left image) and use the fastener to close the cuff comfortably. The cuff contains three vibrotactile actuators, activated via an open-source electronic prototyping platform at a frequency of 233 Hz in a linear relation to the force measured by the force sensors. The force sensors and vibrotactile actuators are connected to the open-source electronic prototyping platform via shielded electric wires.
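The linear relation between measured force and vibration can be sketched as follows. This is not the published controller firmware: the full-scale force of 4.45 N (roughly the 1 lb rating listed for the force sensors), the 8-bit drive range, and the function name `vibration_level` are assumptions made only for illustration. The sketch also assumes that it is the drive intensity, rather than the 233 Hz frequency, that scales with force.

```python
MAX_FORCE_N = 4.45  # assumed full-scale force, about 1 lb
PWM_MAX = 255       # assumed 8-bit actuator drive range


def vibration_level(force_n: float) -> int:
    """Map a measured grip force to an actuator drive level, linearly.

    Forces outside [0, MAX_FORCE_N] are clamped so the output always
    stays within the valid drive range.
    """
    clamped = min(max(force_n, 0.0), MAX_FORCE_N)
    return round(PWM_MAX * clamped / MAX_FORCE_N)
```

Under these assumptions a lighter grasp produces a weaker vibration, which is consistent with the training step in which the subject tries to minimize the applied force.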

3. VTF activation

- Press the button to activate the battery attached to the controller (Figure 2, left image).

- Ask the subject to lightly press together the two fingers instrumented with the force sensors (i.e., the thumb and index finger). The subject should report a sense of vibration in the area under the cuff.

- Instruct the subject to train for 10 min in grasping the block as lightly as possible, using only the two instrumented fingers. Ask the subject to lift the block, move it, and place it back on the table several times, attempting to apply a minimal amount of force on the block. Encourage the subject to attempt to reduce the applied force, even if the block is dropped during grasping.

4. Positioning and preparing the subject

- Instruct the subject to stand close to the table (up to 10 cm from it), where the box and partition are placed.

- Place a divider at the edge of the table near the subject and above the box, so that the subject is unable to see the box but can easily see the screen in front of him or her (Figure 1a). For the divider, use a hard non-reflective material, preferably wood, fixed on four height-adjustable legs to accommodate subjects of different heights.

- Instruct the subject to place the earphones on his or her head.

- Place the block in the middle of the right compartment of the box and guide the hand of the subject to it.

5. Commencing trial

NOTE: The described trial is repeated twice, with and without the VTF (a cross-over design is recommended to verify that there is no learning effect). To perform the trial without the VTF, turn off the battery attached to the controller (Figure 2).

- Activate the software controlling the cameras of the motion capture system.

- In the control panel of the visual feedback software (Figure 1b), select with/without VTF, type the code of the subject, click Run, Connect, Open and Start.

- Instruct the subject to perform 16 repetitions of transferring the block with the hand instrumented with the force sensors while viewing the movement of the virtual block on the screen (Figure 1b). After each transfer, move the block back across the partition to its starting location.

- After the subject has completed 16 repetitions, click Stop.

- Ask the subject to rate the difficulty level of performing the 16 block transfers, once for the condition with the VTF and once for the condition without it, according to the following scale: '0' (not difficult at all), '1' (slightly difficult), '2' (moderately difficult), '3' (very difficult), and '4' (extremely difficult).
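The trial structure described above (16 transfers per condition, two of them incongruent and placed at random) can be sketched as a schedule generator. The function name `make_schedule`, the seeding, and the representation of a trial as a boolean are illustrative assumptions; the source does not describe how the visual feedback software selects the incongruent transfers.

```python
import random
from typing import List, Optional

# Difficulty scale used for the post-trial rating, as listed in the protocol.
DIFFICULTY_SCALE = {
    0: "not difficult at all",
    1: "slightly difficult",
    2: "moderately difficult",
    3: "very difficult",
    4: "extremely difficult",
}


def make_schedule(n_transfers: int = 16,
                  n_incongruent: int = 2,
                  seed: Optional[int] = None) -> List[bool]:
    """Return one flag per transfer; True marks an incongruent transfer."""
    rng = random.Random(seed)  # seed is optional, for reproducible schedules
    incongruent = set(rng.sample(range(n_transfers), n_incongruent))
    return [i in incongruent for i in range(n_transfers)]
```

Calling `make_schedule()` once per condition (with and without VTF) gives two independent schedules, each with 14 congruent and 2 incongruent transfers.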

6. Post analysis

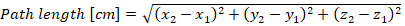

- Use the 3D coordinate data of the block to compute the path of the block and its transfer time. Mark the onset and offset time of each transfer manually as when the block is at the height of the rims of the right (onset) and then left (offset) sides of the box. Calculate the path length of each transfer according to the following equation:

$$\text{Path length} = \sum_{i=1}^{n-1} \sqrt{(x_{i+1}-x_i)^2 + (y_{i+1}-y_i)^2 + (z_{i+1}-z_i)^2} \qquad (1)$$

where $(x_i, y_i, z_i)$ and $(x_{i+1}, y_{i+1}, z_{i+1})$ are the 3D coordinates of the block at two subsequent time points, and $n$ is the number of samples between onset and offset.

- For both conditions, with and without VTF, average the path length and transfer time once for the two transfers with incongruent visual-tactile signals and once for the 14 transfers with the congruent visual-tactile signals.

- Normalize the path and time during block transfer in the presence of incongruent visual-tactile signals by the path and time during block transfer in the presence of congruent visual-tactile signals. Perform the normalization separately for the two conditions (with and without VTF).

- Perform a within-subject repeated-measures ANOVA with two factors: VTF (with and without) and incongruent visual-tactile feedback (with and without).

- If the repeated-measures ANOVA in the previous step shows no statistically significant differences, use Bayesian repeated-measures ANOVAs with two factors15.

- Use Spearman’s correlation test to relate the perceived difficulty level of performing the task with the VTF activated to the normalized path and duration of the motion.

- Set the statistical significance to p < .05.
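The kinematic part of the post analysis can be sketched in a few functions. This is an illustrative sketch, not the authors' analysis code: the function names are hypothetical, the path length is taken as the sum of Euclidean distances between subsequent samples, normalization is assumed to mean dividing the incongruent mean by the congruent mean, and the 100 Hz sample rate comes from the capture settings in the protocol. The Spearman coefficient is computed from average ranks; no p-value is calculated and constant inputs are not handled, so a statistics package remains the appropriate tool for the actual tests.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
SAMPLE_RATE_HZ = 100.0  # motion capture rate stated in the protocol


def path_length(points: Sequence[Point]) -> float:
    """Sum of Euclidean distances between subsequent 3D samples."""
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))


def transfer_time(onset_frame: int, offset_frame: int) -> float:
    """Duration in seconds between manually marked onset and offset frames."""
    return (offset_frame - onset_frame) / SAMPLE_RATE_HZ


def mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def normalized(incongruent: Sequence[float],
               congruent: Sequence[float]) -> float:
    """Mean over incongruent transfers divided by mean over congruent ones."""
    return mean(incongruent) / mean(congruent)


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of the average ranks of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)
```

For each subject and condition, `normalized` would be applied once to the path lengths and once to the transfer times; `spearman_rho` would then take the difficulty ratings and the normalized values across subjects.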


Results

We used the described technique to test the three hypotheses that when moving an object from one place to another using virtual visual feedback: (i) the path and duration of the object’s transfer motion will increase when incongruent visual-tactile stimuli is presented; (ii) this change will increase when incongruent visual-tactile stimuli is presented and VTF is activated on the moving arm; and (iii) a positive correlation will be found between the perceived difficulty level of performing the task with the VTF act...


Discussion

In this study, a protocol that quantifies the effect of adding VTF on the object transfer kinematics in the presence of incongruent visual-tactile stimuli was presented. To the best of our knowledge, this is the only protocol available to test the effect of VTF on the response to incongruent visual-tactile stimuli. The several critical steps involved in the application of incongruent visual-tactile stimuli during object transfer with VTF include the following: attaching the VTF system to the subject, activating the VTF, ...


Disclosures

The authors have nothing to disclose.

Acknowledgements

This study was not funded.


Materials

| Name | Company | Catalog Number | Comments |
| --- | --- | --- | --- |
| 3D printer | Makerbot | https://www.makerbot.com/ | |
| Box and Blocks test | Sammons Preston | https://www.performancehealth.com/box-and-blocks-test | |
| Flexiforce sensors (1lb) | Tekscan Inc. | https://www.tekscan.com/force-sensors | |
| JASP | JASP Team | https://jasp-stats.org/ | |
| Labview | National Instruments | http://www.ni.com/en-us/shop/labview/labview-details.html | |
| Micro Arduino | Arduino LLC | https://store.arduino.cc/arduino-micro | |
| Motion capture system | Qualisys | https://www.qualisys.com | |
| Shaftless vibration motor | Pololu | https://www.pololu.com/product/1638 | |
| SPSS | IBM | https://www.ibm.com/analytics/spss-statistics-software | |

References

- Aggelopoulos, N. C. Perceptual inference. Neuroscience and Biobehavioral Reviews. 55, 375-392 (2015).

- van der Groen, O., van der Burg, E., Lunghi, C., Alais, D. Touch influences visual perception with a tight orientation-tuning. PloS One. 8 (11), e79558 (2013).

- Pei, Y. C., et al. Cross-modal sensory integration of visual-tactile motion information: instrument design and human psychophysics. Sensors (Basel, Switzerland). 13 (6), 7212-7223 (2013).

- Kording, K. P., Wolpert, D. M. Bayesian integration in sensorimotor learning. Nature. 427 (6971), 244-247 (2004).

- Tajadura-Jimenez, A., Banakou, D., Bianchi-Berthouze, N., Slater, M. Embodiment in a Child-Like Talking Virtual Body Influences Object Size Perception, Self-Identification, and Subsequent Real Speaking. Scientific Reports. 7 (1), (2017).

- Campos, J. L., Butler, J. S., Bulthoff, H. H. Multisensory integration in the estimation of walked distances. Experimental Brain Research. 218 (4), 551-565 (2012).

- Sun, H. J., Campos, J. L., Chan, G. S. Multisensory integration in the estimation of relative path length. Experimental Brain Research. 154 (2), 246-254 (2004).

- Parmentier, F. B., Ljungberg, J. K., Elsley, J. V., Lindkvist, M. A behavioral study of distraction by vibrotactile novelty. Journal of Experimental Psychology, Human Perception, and Performance. 37 (4), 1134-1139 (2011).

- Sigrist, R., Rauter, G., Riener, R., Wolf, P. Augmented visual, auditory, haptic, and multimodal feedback in motor learning: a review. Psychonomic Bulletin & Review. 20 (1), 21-53 (2013).

- Hebert, J. S., Lewicke, J., Williams, T. R., Vette, A. H. Normative data for modified Box and Blocks test measuring upper-limb function via motion capture. Journal of Rehabilitation Research and Development. 51 (6), 918-932 (2014).

- Raveh, E., Portnoy, S., Friedman, J. Adding vibrotactile feedback to a myoelectric-controlled hand improves performance when online visual feedback is disturbed. Human Movement Science. 58, 32-40 (2018).

- Raveh, E., Friedman, J., Portnoy, S. Evaluation of the effects of adding vibrotactile feedback to myoelectric prosthesis users on performance and visual attention in a dual-task paradigm. Clinical Rehabilitation. 32 (10), 1308-1316 (2018).

- Raveh, E., Portnoy, S., Friedman, J. Myoelectric Prosthesis Users Improve Performance Time and Accuracy Using Vibrotactile Feedback When Visual Feedback Is Disturbed. Archives of Physical Medicine and Rehabilitation. , (2018).

- Raveh, E., Friedman, J., Portnoy, S. Visuomotor behaviors and performance in a dual-task paradigm with and without vibrotactile feedback when using a myoelectric controlled hand. Assistive Technology: The Official Journal of RESNA. , 1-7 (2017).

- Dienes, Z. Using Bayes to get the most out of non-significant results. Frontiers in Psychology. 5, 781 (2014).

- Shams, L. Early Integration and Bayesian Causal Inference in Multisensory Perception. The Neural Bases of Multisensory Processes. Murray, M. M., Wallace, M. T. , Taylor & Francis Group, LLC. Boca Raton (FL). (2012).

- D'Amour, S., Pritchett, L. M., Harris, L. R. Bodily illusions disrupt tactile sensations. Journal of Experimental Psychology, Human Perception, and Performance. 41 (1), 42-49 (2015).

- Tidoni, E., Fusco, G., Leonardis, D., Frisoli, A., Bergamasco, M., Aglioti, S. M. Illusory movements induced by tendon vibration in right- and left-handed people. Experimental Brain Research. 233 (2), 375-383 (2015).

- Fuentes, C. T., Gomi, H., Haggard, P. Temporal features of human tendon vibration illusions. The European Journal of Neuroscience. 36 (12), 3709-3717 (2012).

- de Vignemont, F., Ehrsson, H. H., Haggard, P. Bodily illusions modulate tactile perception. Current Biology. 15 (14), 1286-1290 (2005).

- Marotta, A., Tinazzi, M., Cavedini, C., Zampini, M., Fiorio, M. Individual Differences in the Rubber Hand Illusion Are Related to Sensory Suggestibility. PloS One. 11 (12), e0168489 (2016).

- Stevenson, R. A., Zemtsov, R. K., Wallace, M. T. Individual differences in the multisensory temporal binding window predict susceptibility to audiovisual illusions. Journal of Experimental Psychology, Human Perception, and Performance. 38 (6), 1517-1529 (2012).

- Maravita, A., Spence, C., Driver, J. Multisensory integration and the body schema: close to hand and within reach. Current Biology. 13 (13), R531-R539 (2003).

- Carey, D. P. Multisensory integration: attending to seen and felt hands. Current Biology. 10 (23), R863-R865 (2000).

- Tsakiris, M., Haggard, P. The rubber hand illusion revisited: visuotactile integration and self-attribution. Journal of Experimental Psychology, Human Perception, and Performance. 31 (1), 80-91 (2005).



Copyright © 2025 MyJoVE Corporation. All rights reserved