
Method Article

End-to-End Deep Neural Network for Salient Object Detection in Complex Environments


Summary

This protocol describes a new end-to-end salient object detection algorithm. It uses deep neural networks to improve the accuracy of salient object detection in intricate environmental contexts.

Abstract

Salient object detection has emerged as a thriving area of interest in computer vision. However, prevailing algorithms show reduced accuracy when detecting salient objects in intricate, multifaceted environments. To address this pressing concern, this article presents an end-to-end deep neural network for detecting salient objects in complex environments. The network comprises two interconnected components, namely a pixel-level multiscale fully convolutional network and a deep encoder-decoder network. It integrates contextual semantics to produce visual contrast across multiscale feature maps, while using deep and shallow image features to improve the accuracy of object boundary identification. Integrating a fully connected conditional random field (CRF) model further improves the spatial coherence and contour delineation of the saliency maps. The proposed algorithm is extensively evaluated against 10 contemporary algorithms on the SOD and ECSSD databases. The evaluation results show that the proposed algorithm outperforms the other approaches in precision and accuracy, establishing its effectiveness for salient object detection in complex environments.

Introduction

Salient object detection mimics human visual attention by quickly identifying key image regions and suppressing background information. The technique is widely used as a preprocessing step in tasks such as image cropping1, semantic segmentation2, and image editing3. It simplifies tasks such as background replacement and foreground extraction, improving editing efficiency and precision, and it aids semantic segmentation by improving target localization. Its potential to improve computational efficiency and save memory underscores its significant research and application prospects.

Over the years, salient object detection has evolved from early traditional algorithms to deep learning algorithms. The goal of these advances has been to narrow the gap between salient object detection and human visual mechanisms, which has led to the adoption of deep convolutional network models for the task. Borji et al.4 summarized and generalized most classical traditional algorithms, which rely on low-level image features. Despite some improvements in detection accuracy, the dependence on manual experience and cognition still makes salient object detection in complex environments challenging.

Convolutional neural networks (CNNs) are prevalent in salient object detection. Here, deep CNNs update their weights through autonomous learning. CNNs extract contextual semantics from images through cascaded convolutional and pooling layers, which allows higher layers to learn complex image features with greater discriminative and representational power for detecting salient objects in diverse environments.

In 2016, fully convolutional networks5 gained significant traction as a popular approach for salient object detection, and researchers began performing salient object detection at the pixel level on this basis. Many models are built on existing networks (e.g., VGG166, ResNet7) with the aim of improving image representation and strengthening edge detection.

Liu et al.8 used a pretrained neural network as the framework to compute the image globally and then refined object boundaries with a hierarchical network. Combining the two networks forms the final deep saliency network, which was achieved by repeatedly feeding the previously obtained saliency map into the network as prior knowledge. Zhang et al.9 effectively fused semantic and spatial image information using deep networks with bidirectional information transfer, from shallow to deep layers and from deep to shallow layers, respectively. Wu et al.10 proposed salient object detection with a mutual learning model, which uses foreground and edge information within a CNN to facilitate detection. Li et al.11 used the "hole algorithm" of neural networks to address the fixed receptive fields of different layers in deep neural networks for salient object detection. However, their method relies on superpixel segmentation to obtain object edges, which considerably increases computational effort and time. Ren et al.12 devised a multiscale encoder-decoder network for salient object detection and used CNNs to combine deep and shallow features effectively. Although this approach resolves blurred object boundaries, multiscale information fusion inevitably increases computational demands.

A literature review13 summarizes saliency detection from traditional methods to deep learning methods and clearly traces the evolution of salient object detection from its origins to the deep learning era. Various well-performing RGB-D-based salient object detection models have been proposed in the literature14. These reviews survey and classify the various types of salient object detection algorithms and describe their application scenarios, the databases used, and the evaluation metrics. They also provide qualitative and quantitative analyses of the proposed algorithms on the suggested databases and evaluation metrics.

All of the algorithms above have achieved notable results on public databases, providing a foundation for salient object detection in complex environments. Although there have been numerous research results in this field both nationally and internationally, some issues remain. (1) Traditional non-deep-learning algorithms tend to have low accuracy because they depend on hand-crafted features such as color, texture, and frequency, which are easily influenced by experience and subjective perception. As a result, their salient object detection accuracy is reduced, and they struggle to handle intricate scenes in complex environments. (2) Beyond this reliance on hand-crafted features, region-level detection can be computationally expensive, often ignores spatial coherence, and tends to detect object contours poorly. These problems must be addressed to improve salient object detection accuracy. (3) Salient object detection in complex environments challenges most algorithms. Increasingly complex detection environments bring variable backgrounds (similar background and foreground colors, complex background textures, etc.) and many uncertainties, such as inconsistent object sizes and unclear boundaries between foreground and background.

Most current algorithms show low accuracy when detecting salient objects in complex environments with similar background and foreground colors, complex background textures, and blurred edges. Although current deep-learning-based salient object detection algorithms are more accurate than traditional methods, the low-level image features they use still cannot characterize semantic features effectively, leaving room to improve their performance.

In summary, this study proposes an end-to-end deep neural network for salient object detection, aiming to improve detection accuracy in complex environments, enhance target edges, and better characterize semantic features. The contributions of this paper are as follows. (1) The first network uses VGG16 as its backbone and modifies its five pooling layers using the "hole" algorithm11. This pixel-level multiscale fully convolutional network learns image features at different spatial scales, addressing the static receptive fields of the layers of deep neural networks and improving detection accuracy in significant focus regions of the scene. (2) Following recent efforts to improve salient object detection accuracy with deeper networks, the second network uses VGG16 to extract deep features in the encoder and shallow features in the decoder. This effectively improves the accuracy of object contour detection and enriches semantic information, particularly in complex environments with variable backgrounds, inconsistent object sizes, and indistinct foreground-background boundaries. (3) A fully connected conditional random field (CRF) model is integrated to increase the spatial coherence and contour precision of the saliency maps. The effectiveness of this approach was evaluated on the SOD and ECSSD datasets with complex backgrounds and was found to be statistically significant.

Related work

Fu et al.15 proposed a joint approach that uses RGB and deep learning for salient object detection. Lai et al.16 introduced a weakly supervised model for salient object detection that learns saliency from annotations, mainly scribble labels, to save annotation time. Although these algorithms fuse two complementary networks for salient object detection, they lack an in-depth investigation of saliency detection in complex scenarios. Wang et al.17 designed an iterative two-way fusion of neural network features, both bottom-up and top-down, progressively optimizing the results of the previous iteration until convergence. Zhang et al.18 effectively fused semantic and spatial image information using deep networks with bidirectional information transfer, from shallow to deep layers and from deep to shallow layers, respectively. Wu et al.19 proposed salient object detection with a mutual learning model, which uses foreground and edge information within a CNN to facilitate detection. These deep-neural-network-based salient object detection models have achieved remarkable performance on publicly available datasets, enabling salient object detection in complex natural scenes. Nevertheless, designing even more advanced models remains an important goal in this research field and is the main motivation for this study.

Overall framework

The proposed model, shown schematically in Figure 1, is derived mainly from the VGG16 architecture and incorporates both a pixel-level multiscale fully convolutional network (DCL) and a deep encoder-decoder network (DEDN). The model removes all final pooling and fully connected layers of VGG16 and accepts an input image of size W × H. The input image is first processed by the DCL, which extracts deep features, while shallow features are obtained from the DEDN. The combined features are then passed to a fully connected conditional random field (CRF) model, which increases the spatial coherence and contour accuracy of the resulting saliency maps.

To assess its effectiveness, the model was tested and validated on the SOD20 and ECSSD21 datasets, which contain intricate backgrounds. After the input image passes through the DCL, multiscale feature maps with various receptive fields are obtained, and contextual semantics are combined to produce a W × H saliency map with cross-scale consistency. The DCL uses a pair of convolutional layers with 7 × 7 kernels to replace the final pooling layer of the original VGG16 network, which better preserves spatial information in the feature maps. Similarly, the deep encoder-decoder network (DEDN) uses convolutional layers with 3 × 3 kernels in the decoders and a single convolutional layer after the last decoding module. Using both deep and shallow image features, it generates a saliency map with spatial size W × H, addressing the problem of indistinct object boundaries. The study thus combines the DCL and DEDN models into a unified network for salient object detection. The weights of the two deep networks are learned through training, and the resulting saliency maps are fused and then refined with a fully connected CRF. The main goal of this refinement is to improve spatial coherence and contour localization.

Pixel-level multiscale fully convolutional network

The original VGG16 architecture has five pooling layers, each with a stride of 2. Each pooling layer halves the spatial size of the feature maps, while the following convolutional layers increase the number of channels, capturing more contextual information. The DCL model is inspired by the literature13 and improves on the VGG16 framework. This article uses a pixel-level DCL model11 built into the VGG16 deep convolutional network, as shown in Figure 2. Each of the first four max-pooling layers is connected to three kernels: the first is 3 × 3 × 128, the second is 1 × 1 × 128, and the third is 1 × 1 × 1. To give the four resulting feature maps a uniform size, each equal to one-eighth of the original image, the strides of the first kernel attached to these four pooling layers are set to 4, 2, 1, and 1, respectively.
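The stride arithmetic above can be checked with a few lines of Python. This is a sketch under an assumption the text does not state explicitly: pool1 to pool3 keep their stride of 2, while pool4 uses stride 1 (a common DCL-style modification), so pool4 stays at 1/8 resolution. The input size of 320 pixels is a hypothetical value chosen to be divisible by 8.

```python
def size_after(size: int, strides: list[int]) -> int:
    """Spatial size after a chain of strided layers (size assumed divisible)."""
    for s in strides:
        size //= s
    return size


INPUT_SIZE = 320               # hypothetical input height or width
POOL_STRIDES = [2, 2, 2, 1]    # assumed strides of pool1..pool4 after modification
KERNEL_STRIDES = [4, 2, 1, 1]  # strides of the first 3x3x128 kernel, from the text

branch_sizes = []
for k, kernel_stride in enumerate(KERNEL_STRIDES, start=1):
    pooled = size_after(INPUT_SIZE, POOL_STRIDES[:k])   # output size of pool k
    branch = size_after(pooled, [kernel_stride])        # after the strided 3x3x128 kernel
    branch_sizes.append(branch)
    print(f"pool{k}: {pooled} px -> branch output after stride {kernel_stride}: {branch} px")
```

Under these assumptions every branch ends at 40 = 320 / 8 pixels, which is what allows the four maps to be concatenated channel-wise.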

To preserve the original receptive field in the different kernels, the "hole algorithm" proposed in the literature11 enlarges the kernel by inserting zeros, keeping the kernel weights intact. The four feature maps are connected to the first kernel with different strides, so the feature maps produced in the final stage have identical sizes. Together they form a multiscale feature set obtained at distinct scales, each corresponding to a different receptive-field size. The feature maps from the four intermediate layers are concatenated with the final feature map of VGG16, yielding a 5-channel output. This output then passes through a 1 × 1 × 1 kernel with a sigmoid activation, producing the saliency map at one-eighth of the original resolution. Finally, the map is upsampled with bilinear interpolation so that the resulting saliency map has the same resolution as the input image.
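The two operations in this paragraph, zero-insertion ("hole" or à trous dilation) of a kernel and the 1 × 1 × 1 sigmoid fusion of the five-channel stack followed by ×8 bilinear upsampling, can be sketched in NumPy. This is an illustration, not the authors' code: the feature maps and fusion weights are random stand-ins, the upsampler uses the align-corners convention, and all function names are hypothetical.

```python
import numpy as np


def dilate_kernel(kernel: np.ndarray, rate: int) -> np.ndarray:
    """Insert (rate - 1) zeros between kernel taps (the "hole" / a trous trick)."""
    k = kernel.shape[0]
    size = (k - 1) * rate + 1
    dilated = np.zeros((size, size), dtype=kernel.dtype)
    dilated[::rate, ::rate] = kernel
    return dilated


def fuse_multiscale(stack: np.ndarray, weights: np.ndarray, bias: float) -> np.ndarray:
    """1x1x1 convolution over a (5, h, w) stack, then a sigmoid."""
    logits = np.tensordot(weights, stack, axes=(0, 0)) + bias
    return 1.0 / (1.0 + np.exp(-logits))


def bilinear_upsample(x: np.ndarray, factor: int) -> np.ndarray:
    """Bilinear interpolation of a 2-D map by an integer factor (align-corners style)."""
    h, w = x.shape
    ys = np.linspace(0, h - 1, h * factor)
    xs = np.linspace(0, w - 1, w * factor)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[:, None]
    wx = (xs - x0)[None, :]
    top = x[np.ix_(y0, x0)] * (1 - wx) + x[np.ix_(y0, x1)] * wx
    bottom = x[np.ix_(y1, x0)] * (1 - wx) + x[np.ix_(y1, x1)] * wx
    return top * (1 - wy) + bottom * wy


rng = np.random.default_rng(0)
H, W = 64, 48                                        # hypothetical input size
stack = rng.standard_normal((5, H // 8, W // 8))     # 4 multiscale maps + final VGG16 map
weights = rng.standard_normal(5)                     # stand-in for the learned 1x1x1 kernel
low_res = fuse_multiscale(stack, weights, bias=0.0)  # saliency at 1/8 resolution
saliency = bilinear_upsample(low_res, 8)             # back to H x W
print(dilate_kernel(np.ones((3, 3)), rate=2).shape, low_res.shape, saliency.shape)
```

The dilated 3 × 3 kernel grows to 5 × 5 but still has only nine non-zero taps, which is how the receptive field is enlarged without adding parameters.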

Deep encoder-decoder network

Similarly, VGG16 is used as the backbone network. VGG16 has shallow feature maps with few channels but high resolution, and deep feature maps with many channels but low resolution. Pooling layers and downsampling speed up computation in the deep network at the cost of lower feature-map resolution. To address this, following the analysis in the literature14, the encoder network replaces the fully connected layers after the last pooling layer of the original VGG16 with two convolutional layers with 7 × 7 kernels (larger kernels enlarge the receptive field). Each of these convolutions is followed by batch normalization (BN) and a rectified linear unit (ReLU). As a result, the encoder's output feature map better preserves spatial image information.
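The parenthetical claim that larger kernels enlarge the receptive field can be quantified with the standard receptive-field recurrence r ← r + (k - 1)·j, j ← j·s. The sketch below assumes the two 7 × 7 layers are appended after VGG16's pool5 with stride 1, and compares them with a hypothetical pair of 3 × 3 layers. Batch normalization and ReLU do not change the receptive field, so they are omitted.

```python
def receptive_field(layers: list[tuple[int, int]]) -> int:
    """Receptive field (in input pixels) of a stack of (kernel_size, stride) layers."""
    rf, jump = 1, 1
    for kernel, stride in layers:
        rf += (kernel - 1) * jump  # each layer widens the field by (k - 1) input-space steps
        jump *= stride             # spacing between adjacent outputs, in input pixels
    return rf


CONV, POOL = (3, 1), (2, 2)
# VGG16 convolutional blocks (2, 2, 3, 3, 3 convs), each followed by 2x2 max pooling.
VGG16_TO_POOL5 = []
for n_convs in (2, 2, 3, 3, 3):
    VGG16_TO_POOL5 += [CONV] * n_convs + [POOL]

rf_pool5 = receptive_field(VGG16_TO_POOL5)
rf_two_3x3 = receptive_field(VGG16_TO_POOL5 + [(3, 1), (3, 1)])
rf_two_7x7 = receptive_field(VGG16_TO_POOL5 + [(7, 1), (7, 1)])
print(rf_pool5, rf_two_3x3, rf_two_7x7)  # 212 340 596
```

Under these assumptions, each 7 × 7 layer adds 192 pixels of context, compared with 64 for a 3 × 3 layer, because adjacent taps at this depth are 32 input pixels apart.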

Although the encoder strengthens high-level image semantics for the global localization of salient objects, it does not effectively solve the blurring of salient-object contours. To address this, deep features are fused with shallow features, inspired by work on edge detection12, which yields the deep encoder-decoder network (DEDN) shown in Figure 3. The encoder comprises three kernels connected to the first four max-pooling layers, while the decoder progressively increases feature-map resolution using the maximum values recorded by the max-pooling layers.
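One common reading of "using the maximum values recorded by the max-pooling layers" is SegNet-style unpooling: the encoder stores the position of each maximum, and the decoder writes values back to those positions. The NumPy sketch below illustrates this for a single 2 × 2, stride-2 pooling step. It is an assumption about the mechanism, not the authors' implementation, and the function names are hypothetical.

```python
import numpy as np


def max_pool_with_indices(x: np.ndarray):
    """2x2, stride-2 max pooling that also records which cell held each maximum."""
    h, w = x.shape
    blocks = x.reshape(h // 2, 2, w // 2, 2).transpose(0, 2, 1, 3).reshape(h // 2, w // 2, 4)
    return blocks.max(axis=-1), blocks.argmax(axis=-1)


def max_unpool(pooled: np.ndarray, idx: np.ndarray) -> np.ndarray:
    """Write each pooled value back to its recorded position; all other cells are zero."""
    h2, w2 = pooled.shape
    out = np.zeros((h2, w2, 4), dtype=pooled.dtype)
    np.put_along_axis(out, idx[..., None], pooled[..., None], axis=-1)
    return out.reshape(h2, w2, 2, 2).transpose(0, 2, 1, 3).reshape(h2 * 2, w2 * 2)


x = np.array([[1, 5, 2, 0],
              [3, 4, 8, 1],
              [0, 2, 7, 6],
              [9, 1, 3, 4]], dtype=float)
pooled, idx = max_pool_with_indices(x)   # encoder side: values + positions
restored = max_unpool(pooled, idx)       # decoder side: sparse, full-resolution map
print(pooled)
print(restored)
```

Because maxima return to their original locations, the upsampled map keeps sharp spatial detail that plain nearest-neighbor upsampling would blur; in a decoder, the subsequent 3 × 3 convolutions then fill in the zero cells.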

In this approach, each decoding stage uses a convolutional layer with a 3 × 3 kernel, followed by a batch normalization layer and a rectified linear unit. After the final decoding module, a single-channel convolutional layer produces a saliency map of spatial size W × H. The saliency map is generated by the encoder-decoder model through the complementary fusion of deep and shallow information. This not only localizes the salient object accurately and enlarges the receptive field, but also preserves detailed image information and sharpens the boundary of the salient object.

Integration mechanism

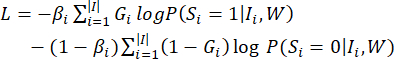

The encoder comprises three kernels associated with the first four max-pooling layers of VGG16. The decoder, in turn, is designed to progressively increase the resolution of the feature maps produced by the upsampling layers, using the maximum values obtained from the corresponding pooling layers. The decoder then applies a convolutional layer with a 3 × 3 kernel, a batch normalization layer, and a rectified linear unit, followed by a single-channel convolutional layer that generates a W × H saliency map. The weights of the two deep networks are learned through alternating training cycles: the parameters of the first network were kept fixed while those of the second network were trained for a total of fifty cycles. During this process, the weights of the saliency maps (S1 and S2) used for fusion are updated by stochastic gradient descent. The loss function11 is:

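$$L(W) = -\beta_i \sum_{j \in I_+} \log P(G_j = 1 \mid I, W) - (1 - \beta_i) \sum_{j \in I_-} \log P(G_j = 0 \mid I, W) \tag{1}$$

$$\beta_i = \frac{|I|_-}{|I|}, \qquad 1 - \beta_i = \frac{|I|_+}{|I|}$$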

Here, $G$ denotes the manually labeled ground truth and $W$ denotes the complete set of network parameters. The weight $\beta_i$ is a balancing factor that compensates for the proportion of salient versus non-salient pixels in the loss computation.

The image $I$ is characterized by three quantities, $|I|$, $|I|_-$, and $|I|_+$, which denote the total number of pixels, the number of non-salient pixels, and the number of salient pixels, respectively.

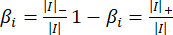
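A NumPy sketch of a class-balanced binary cross-entropy of this kind is shown below. It assumes the common convention $\beta_i = |I|_- / |I|$, so the rarer salient pixels receive the larger weight. The function name is hypothetical, and `pred` stands in for the network's saliency probabilities $P(G_j = 1 \mid I, W)$.

```python
import numpy as np


def balanced_bce(pred: np.ndarray, gt: np.ndarray, eps: float = 1e-7) -> float:
    """Class-balanced binary cross-entropy over one image.

    pred: predicted saliency probabilities in [0, 1] (stand-in for P(G_j = 1 | I, W)).
    gt:   binary ground truth G (1 = salient, 0 = non-salient).
    """
    pred = np.clip(pred, eps, 1 - eps)            # avoid log(0)
    salient = gt == 1
    beta = np.count_nonzero(~salient) / gt.size   # |I|_- / |I|
    loss_salient = -beta * np.log(pred[salient]).sum()
    loss_background = -(1 - beta) * np.log(1 - pred[~salient]).sum()
    return float(loss_salient + loss_background)


gt = np.array([[1, 0], [0, 0]])
uniform = np.full((2, 2), 0.5)
print(balanced_bce(uniform, gt))           # 1.5 * ln 2, about 1.0397
print(balanced_bce(gt.astype(float), gt))  # close to 0 for a perfect prediction
```

With one salient pixel out of four, the single salient term is weighted by 0.75 and each of the three background terms by 0.25, so both classes contribute equally to the total.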

Because the saliency maps produced by the two networks above ignore the consistency of neighboring pixels, a fully connected, pixel-level CRF saliency-refinement model15 is used to improve spatial coherence. The energy function11, which solves the binary pixel-labeling problem, is as follows:

$$E(L) = -\sum_i \log P(l_i) + \sum_{i,j} \theta_{i,j}(l_i, l_j) \tag{2}$$

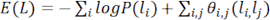

where $L$ denotes the binary labels (salient or non-salient) assigned to all pixels. $P(l_i)$ is the probability that pixel $x_i$ is assigned label $l_i$, i.e., the likelihood that $x_i$ is salient. Initially, $P(1) = S_i$ and $P(0) = 1 - S_i$, where $S_i$ is the saliency value at pixel $x_i$ in the fused saliency map $S$. $\theta_{i,j}(l_i, l_j)$ is the pairwise potential, defined as follows:

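$$\theta_{i,j}(l_i, l_j) = \mu(l_i, l_j)\left[\omega_1 \exp\left(-\frac{\lVert p_i - p_j \rVert^2}{2\sigma_\alpha^2} - \frac{\lVert I_i - I_j \rVert^2}{2\sigma_\beta^2}\right) + \omega_2 \exp\left(-\frac{\lVert p_i - p_j \rVert^2}{2\sigma_\gamma^2}\right)\right] \tag{3}$$

where $p_i$ and $I_i$ are the position and intensity of pixel $x_i$, $\omega_1$ and $\omega_2$ weight the two kernels, and $\sigma_\gamma$ sets the spatial extent of the second kernel.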

Here, $\mu(l_i, l_j) = 1$ if $l_i \neq l_j$, and $\mu(l_i, l_j) = 0$ otherwise. The potential $\theta_{i,j}$ uses two kernels. The first depends on both pixel position $p$ and pixel intensity $I$, so nearby pixels with similar colors take comparable saliency values; the parameters $\sigma_\alpha$ and $\sigma_\beta$ control how strongly spatial proximity and color similarity, respectively, influence the result. The second kernel aims to remove small isolated regions. The energy is minimized with high-dimensional filtering, which accelerates mean-field inference for the CRF distribution. The resulting saliency map, denoted $S_{crf}$, shows improved spatial coherence and contours for the detected salient objects.
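The mean-field inference described above can be illustrated with a brute-force NumPy version of a binary fully connected CRF. It uses unary costs $-\log P(l_i)$ from the fused map, the two Gaussian kernels, and a Potts compatibility. This is a sketch only: it stores every pairwise kernel value explicitly (O(N²) memory), so it only suits tiny toy images, whereas the article relies on high-dimensional filtering for efficiency. The kernel weights `w1`, `w2` and the bandwidths are illustrative values chosen for a 10 × 10 toy image, not the authors' settings.

```python
import numpy as np


def dense_crf_binary(saliency, image, n_iters=5, w1=5.0, w2=1.0,
                     sigma_alpha=3.0, sigma_beta=10.0, sigma_gamma=1.0, eps=1e-6):
    """Brute-force mean-field inference for a binary fully connected CRF.

    saliency: (h, w) fused saliency map S with values in [0, 1].
    image:    (h, w) or (h, w, c) intensities used by the appearance kernel.
    Returns the refined probability of the salient label, shape (h, w).
    """
    h, w = saliency.shape
    s = np.clip(saliency.reshape(-1), eps, 1 - eps)
    unary = -np.log(np.stack([1 - s, s], axis=1))      # (N, 2): cost of label 0 / 1

    ys, xs = np.mgrid[0:h, 0:w]
    pos = np.stack([ys.ravel(), xs.ravel()], axis=1).astype(float)
    col = image.reshape(h * w, -1).astype(float)
    d_pos = ((pos[:, None, :] - pos[None, :, :]) ** 2).sum(-1)
    d_col = ((col[:, None, :] - col[None, :, :]) ** 2).sum(-1)

    # Appearance kernel (position + intensity) plus smoothness kernel (position only).
    k = (w1 * np.exp(-d_pos / (2 * sigma_alpha ** 2) - d_col / (2 * sigma_beta ** 2))
         + w2 * np.exp(-d_pos / (2 * sigma_gamma ** 2)))
    np.fill_diagonal(k, 0.0)                             # no self-interaction

    q = np.stack([1 - s, s], axis=1)                     # initialize Q with P(l_i)
    for _ in range(n_iters):
        # Potts model: choosing label l at pixel i costs sum_j k_ij * Q_j(other label).
        penalty = k @ q[:, ::-1]
        logits = -unary - penalty
        logits -= logits.max(axis=1, keepdims=True)      # numerical stability
        q = np.exp(logits)
        q /= q.sum(axis=1, keepdims=True)
    return q[:, 1].reshape(h, w)


# Toy example: a bright 4x4 object plus one isolated false-positive pixel.
image = np.full((10, 10), 20.0)
image[3:7, 3:7] = 200.0
saliency = np.where(image > 100, 0.8, 0.2)
saliency[0, 9] = 0.7                                     # isolated noisy detection
refined = dense_crf_binary(saliency, image)
print(round(float(saliency[0, 9]), 2), "->", round(float(refined[0, 9]), 3))
```

In the toy example, the isolated background pixel is pulled toward the non-salient label by its many same-colored neighbors, while the object pixels reinforce one another. This is the spatial-coherence effect the CRF step is meant to provide.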

Experimental configuration

In this article, a deep network for salient object detection based on VGG16 is built in Python. The proposed model is compared with other methods on the SOD20 and ECSSD21 datasets. The SOD image database is known for its complex, cluttered backgrounds, similar foreground and background colors, and small objects. Each image in this dataset has a manually labeled ground truth for both quantitative and qualitative performance evaluation. The ECSSD dataset, on the other hand, consists mainly of images collected from the Internet, featuring more complex and realistic natural scenes with low contrast between the image background and the salient objects.

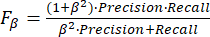

The evaluation metrics used to compare the models in this article are the commonly used precision-recall curve, $F_\beta$, and $E_{MAE}$. To evaluate the predicted saliency map quantitatively, the precision-recall (P-R) curve22 is obtained by varying the threshold from 0 to 255 to binarize the saliency map. $F_\beta$ is a comprehensive metric computed from the precision and recall of the binarized saliency map against the ground-truth map:

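$$F_\beta = \frac{(1 + \beta^2) \times \text{Precision} \times \text{Recall}}{\beta^2 \times \text{Precision} + \text{Recall}} \tag{4}$$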

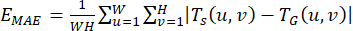

where $\beta$ is the weight parameter that balances precision and recall, with $\beta^2 = 0.3$. $E_{MAE}$ is the mean absolute error between the resulting saliency map and the ground-truth map, defined as:

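$$E_{MAE} = \frac{1}{W \times H} \sum_{u=1}^{W} \sum_{v=1}^{H} \left| T_s(u, v) - T_G(u, v) \right| \tag{5}$$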

Here, $T_s(u, v)$ is the value of the saliency map at pixel $(u, v)$, and $T_G(u, v)$ is the corresponding value of the ground-truth map.
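The three metrics can be sketched in NumPy. The sketch makes several assumptions: the saliency map is stored as 8-bit values, a pixel counts as salient when its value is at least the threshold, both maps are scaled to [0, 1] before computing $E_{MAE}$, and the function names are hypothetical. The toy 2 × 2 example is only meant to make the arithmetic easy to check.

```python
import numpy as np


def pr_curve(saliency_u8: np.ndarray, gt: np.ndarray):
    """Precision and recall for every threshold t in 0..255 (pixel salient if value >= t)."""
    gt = gt.astype(bool)
    n_gt = max(np.count_nonzero(gt), 1)
    precision, recall = [], []
    for t in range(256):
        pred = saliency_u8 >= t
        tp = np.count_nonzero(pred & gt)
        precision.append(tp / max(np.count_nonzero(pred), 1))
        recall.append(tp / n_gt)
    return np.array(precision), np.array(recall)


def f_beta(precision: np.ndarray, recall: np.ndarray, beta2: float = 0.3) -> np.ndarray:
    """F-measure of Eq. (4) with beta^2 = 0.3; defined as 0 where P = R = 0."""
    num = (1 + beta2) * precision * recall
    den = beta2 * precision + recall
    return np.divide(num, den, out=np.zeros_like(num, dtype=float), where=den > 0)


def mae(saliency: np.ndarray, gt: np.ndarray) -> float:
    """E_MAE of Eq. (5); both maps are expected in [0, 1]."""
    return float(np.mean(np.abs(saliency.astype(float) - gt.astype(float))))


sal = np.array([[255, 128], [64, 0]], dtype=np.uint8)
gt = np.array([[1, 1], [0, 0]])
P, R = pr_curve(sal, gt)
F = f_beta(P, R)
print("max F_beta:", F.max(), "MAE:", mae(sal / 255.0, gt))
```

Papers often report the maximum $F_\beta$ over all thresholds. This section does not state whether the article reports the maximum or uses an adaptive threshold.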

Protocol

1. Experimental setup and procedure

- Load the pretrained VGG16 model.

NOTE: The first step is to load the pretrained VGG16 model from the Keras library6.

- To load a pretrained VGG16 model in Python using a popular deep learning library such as PyTorch (see Table of Materials), follow these general steps:

- Import the libraries: import torch and import torchvision.models as models.

- Load the pretrained VGG16 model: vgg16_model = models.vgg16(pretrained=True). In torchvision 0.13 and later, the pretrained argument is deprecated in favor of models.vgg16(weights=models.VGG16_Weights.IMAGENET1K_V1).

- Verify the model by printing its summary: print(vgg16_model).


- Define the DCL and DEDN models.

- DCL algorithm pseudo-code. Input: SOD image dataset; output: trained DCL model.

- Initialize the DCL model with the VGG16 backbone network.

- Preprocess the image dataset D (e.g., resizing, normalization).

- Split the dataset into training and validation sets.

- Define the loss function for training the DCL model (e.g., binary cross-entropy).

- Set the training hyperparameters: learning rate 0.0001, 50 training epochs, batch size 8, and the Adam optimizer.

- Train the DCL model: for each epoch, process every batch in the training set as follows:

- Forward pass: feed the batch images to the DCL model and compute the loss between the predicted saliency maps and the ground-truth maps.

- Backward pass: update the model parameters by gradient descent. At the end of each epoch, compute the validation loss and other evaluation metrics on the validation set.

- Save the trained DCL model.

- Return the trained DCL model.

- DEDN algorithm pseudo-code. Input: image dataset (X), ground-truth saliency maps (Y), number of training iterations (N).

- Build the encoder network on the VGG16 backbone with the modifications shown below.

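NOTE: from tensorflow.keras.layers import Input, Conv2D, MaxPooling2D, UpSampling2D

input_shape = (224, 224, 3)  # example size; height and width must be divisible by 8 (three 2x2 poolings)

encoder_input = Input(shape=input_shape)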

encoder_conv1 = Conv2D(64, (3, 3), activation='relu', padding='same')(encoder_input)

encoder_pool1 = MaxPooling2D((2, 2))(encoder_conv1)

encoder_conv2 = Conv2D(128, (3, 3), activation='relu', padding='same')(encoder_pool1)

encoder_pool2 = MaxPooling2D((2, 2))(encoder_conv2)

encoder_conv3 = Conv2D(256, (3, 3), activation='relu', padding='same')(encoder_pool2)

encoder_pool3 = MaxPooling2D((2, 2))(encoder_conv3)

- Build the decoder network on the VGG16 backbone with the modifications shown below.

NOTE: decoder_conv1 = Conv2D(256, (3, 3), activation='relu', padding='same')(encoder_pool3)

decoder_upsample1 = UpSampling2D((2, 2))(decoder_conv1)

decoder_conv2 = Conv2D(128, (3, 3), activation='relu', padding='same')(decoder_upsample1)

decoder_upsample2 = UpSampling2D((2, 2))(decoder_conv2)

decoder_conv3 = Conv2D(64, (3, 3), activation='relu', padding='same')(decoder_upsample2)

decoder_upsample3 = UpSampling2D((2, 2))(decoder_conv3)

decoder_output = Conv2D(1, (1, 1), activation='sigmoid', padding='same')(decoder_upsample3)  # single-channel saliency map


- Define the DEDN model: model = Model(inputs=encoder_input, outputs=decoder_output), where Model is imported from tensorflow.keras.models.

- Compile the model: model.compile(optimizer='adam', loss='binary_crossentropy').

- Set up the training loop.

NOTE: for iteration in range(N): randomly select a batch of images and ground-truth maps with batch_X, batch_Y = randomly_select_batch(X, Y, batch_size), where randomly_select_batch is a user-defined sampling helper.

- Train the model on the batch: loss = model.train_on_batch(batch_X, batch_Y). Print the loss for monitoring.

- Save the trained model: model.save('dedn_model.h5').


- Combine.

- Combine the outputs of the DCL and DEDN networks and refine the saliency map with a fully connected conditional random field (CRF) model.
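The DEDN training loop in the protocol calls `randomly_select_batch`, which is neither a Keras function nor defined in the protocol. A minimal NumPy version is sketched below, together with a per-channel normalization for the preprocessing step. Both helpers are hypothetical. The ImageNet mean and standard deviation are an assumption that matches the preprocessing commonly used with torchvision's pretrained VGG16.

```python
import numpy as np

IMAGENET_MEAN = np.array([0.485, 0.456, 0.406])  # assumed normalization constants
IMAGENET_STD = np.array([0.229, 0.224, 0.225])


def normalize_images(images_u8: np.ndarray) -> np.ndarray:
    """Scale (n, h, w, 3) uint8 images to [0, 1], then standardize each channel."""
    return (images_u8.astype(np.float32) / 255.0 - IMAGENET_MEAN) / IMAGENET_STD


def randomly_select_batch(X, Y, batch_size, rng=None):
    """Sample batch_size (image, ground-truth) pairs without replacement."""
    rng = np.random.default_rng() if rng is None else rng
    idx = rng.choice(len(X), size=batch_size, replace=False)
    return X[idx], Y[idx]  # same indices keep images and maps paired


# Usage with random stand-in data (8 is the batch size listed in the protocol).
rng = np.random.default_rng(42)
X = normalize_images(rng.integers(0, 256, size=(20, 32, 32, 3)).astype(np.uint8))
Y = (rng.random((20, 32, 32, 1)) > 0.5).astype(np.float32)
batch_X, batch_Y = randomly_select_batch(X, Y, batch_size=8, rng=rng)
print(batch_X.shape, batch_Y.shape)
```

Sampling without replacement within a batch avoids duplicate pairs. Drawing a fresh batch on every iteration matches the `for iteration in range(N)` loop above, rather than iterating over epochs.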

2. Image processing

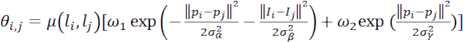

- Click Run Code to display the GUI (Figure 4).

- Click Open Image, select the path, and then select the image to be detected.

- Click Display Image to show the image selected for detection.

- Click Start Detection to run detection on the selected image.

NOTE: The detection result, i.e., the salient object, is displayed alongside the detected image (Figure 5).

- Click Select Save Path to save the salient object detection results.

Results

This study introduces an end-to-end deep neural network comprising two complementary networks: a pixel-level multiscale fully convolutional network and a deep encoder-decoder network. The first network integrates contextual semantics to derive visual contrast from multiscale feature maps, addressing the problem that the receptive field of a deep neural network is fixed at each layer. The second network uses deep and shallow image features to...
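The fixed-receptive-field problem can be made concrete with the standard receptive-field recurrence r_l = r_{l-1} + (k_l - 1) j_{l-1} and j_l = j_{l-1} s_l, where k is the kernel size, s the stride, and j the cumulative stride. The sketch applies it to the standard VGG16 layer sequence (3×3 convolutions with stride 1, 2×2 max pooling with stride 2) on which the encoder is based. It describes plain VGG16, not the authors' modifications, and shows that each stage sees a very different image extent, which is why features from several scales are combined.

```python
# Standard VGG16 feature extractor as (name, kernel size, stride).
VGG16_LAYERS = []
for block, n_convs in enumerate([2, 2, 3, 3, 3], start=1):
    for i in range(1, n_convs + 1):
        VGG16_LAYERS.append((f"conv{block}_{i}", 3, 1))
    VGG16_LAYERS.append((f"pool{block}", 2, 2))


def receptive_fields(layers):
    """Return {layer name: receptive field in input pixels}.

    Uses r_l = r_{l-1} + (k - 1) * j_{l-1} and j_l = j_{l-1} * s,
    starting from a single input pixel (r = 1, j = 1).
    """
    r, j = 1, 1
    out = {}
    for name, k, s in layers:
        r += (k - 1) * j
        j *= s
        out[name] = r
    return out


rf = receptive_fields(VGG16_LAYERS)
for name in ("conv1_2", "conv3_3", "conv4_3", "conv5_3", "pool5"):
    print(name, rf[name])
```

This prints receptive fields of 5, 40, 92, 196, and 212 pixels, so shallow layers capture fine local detail while deep layers respond to large regions.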

Discussion

This article introduces an end-to-end deep neural network specifically designed for salient object detection in complex environments. The network consists of two interconnected components: a pixel-level multiscale fully convolutional network (DCL) and a deep encoder-decoder network (DEDN). These components work together, incorporating contextual semantics to generate visual contrast within multiscale feature maps. In addition, they exploit the ...

Disclosures

The authors have nothing to disclose.

Acknowledgements

This work is supported by the 2024 Key Scientific Research Project Funding Program of Higher Education Institutions in Henan Province (project number: 24A520053). This study is also supported by the construction of characteristic demonstration courses on the integration of specialized and creative education in Henan Province.

Materials

| Name | Company | Catalog Number | Comments |
|---|---|---|---|
| Matlab | MathWorks | Matlab R2016a | MATLAB's programming interface provides development tools for improving code quality and maintainability and for maximizing performance. It provides tools for building applications with custom graphical interfaces and for combining MATLAB-based algorithms with external applications and languages. |
| Processor | Intel | 11th Gen Intel(R) Core(TM) i5-1135G7 @ 2.40GHz | 64-bit processor, Windows 11 |
| Pycharm | JetBrains | PyCharm 3.0 | PyCharm is a Python IDE (Integrated Development Environment). Required Python modules: matplotlib, skimage, torch, os, time, pydensecrf, opencv, glob, PIL, torchvision, numpy, tkinter. |
| PyTorch | | PyTorch 1.4 | PyTorch is an open-source Python machine learning library based on Torch, used for natural language processing and other applications. It can be viewed both as NumPy with GPU support and as a powerful deep neural network library with automatic differentiation. |

References

- Wang, W. G., Shen, J. B., Ling, H. B. A deep network solution for attention and aesthetics aware photo cropping. IEEE Transactions on Pattern Analysis and Machine Intelligence. 41 (7), 1531-1544 (2018).

- Wang, W. G., Sun, G. L., Gool, L. V. Looking beyond single images for weakly supervised semantic segmentation learning. IEEE Transactions on Pattern Analysis and Machine Intelligence. (2022).

- Mei, H. L., et al. Exploring dense context for salient object detection. IEEE Transactions on Circuits and Systems for Video Technology. 32 (3), 1378-1389 (2021).

- Borji, A., Itti, L. State-of-the-art in visual attention modeling. IEEE Transactions on Pattern Analysis and Machine Intelligence. 35 (1), 185-207 (2012).

- Long, J., Shelhamer, E., Darrell, T. Fully convolutional networks for semantic segmentation. , 3431-3440 (2015).

- Simonyan, K., Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556. (2014).

- He, K., Zhang, X., Ren, S., Sun, J. Deep residual learning for image recognition. , 770-778 (2016).

- Liu, N., Han, J. Dhsnet: Deep hierarchical saliency network for salient object detection. , 678-686 (2016).

- Zhang, L., Dai, J., Lu, H., He, Y., Wang, G. A bi-directional message passing model for salient object detection. , 1741-1750 (2018).

- Wu, R., et al. A mutual learning method for salient object detection with intertwined multi-supervision. , 8150-8159 (2019).

- Li, G., Yu, Y. Deep contrast learning for salient object detection. , 478-487 (2019).

- Ren, Q., Hu, R. Multi-scale deep encoder-decoder network for salient object detection. Neurocomputing. 316, 95-104 (2018).

- Wang, W. G., et al. Salient object detection in the deep learning era: An in-depth survey. IEEE Transactions on Pattern Analysis and Machine Intelligence. 44 (6), 3239-3259 (2021).

- Zhou, T., et al. RGB-D salient object detection: A survey. Computational Visual Media. 7, 37-69 (2021).

- Fu, K., et al. Siamese network for RGB-D salient object detection and beyond. IEEE Transactions on Pattern Analysis and Machine Intelligence. 44 (9), 5541-5559 (2021).

- Lai, Q., et al. Weakly supervised visual saliency prediction. IEEE Transactions on Image Processing. 31, 3111-3124 (2022).

- Zhang, L., Dai, J., Lu, H., He, Y., Wang, G. A bi-directional message passing model for salient object detection. , 1741-1750 (2018).

- Wu, R. A mutual learning method for salient object detection with intertwined multi-supervision. , 8150-8159 (2019).

- Wang, W., Shen, J., Dong, X., Borji, A., Yang, R. Inferring salient objects from human fixations. IEEE Transactions on Pattern Analysis and Machine Intelligence. 42 (8), 1913-1927 (2019).

- Movahedi, V., Elder, J. H. Design and perceptual validation of performance measures for salient object segmentation. , 49-56 (2010).

- Shi, J., Yan, Q., Xu, L., Jia, J. Hierarchical image saliency detection on extended CSSD. IEEE Transactions on Pattern Analysis and Machine Intelligence. 38 (4), 717-729 (2015).

- Achanta, R., Hemami, S., Estrada, F., Susstrunk, S. Frequency-tuned salient region detection. , 1597-1604 (2009).

- Yang, C., Zhang, L., Lu, H., Ruan, X., Yang, M. H. Saliency detection via graph-based manifold ranking. , 3166-3173 (2013).

- Wei, Y., et al. Geodesic saliency using background priors. Computer Vision-ECCV 2012. , 29-42 (2012).

- Margolin, R., Tal, A., Zelnik-Manor, L. What makes a patch distinct. , 1139-1146 (2013).

- Perazzi, F., Krähenbühl, P., Pritch, Y., Hornung, A. Saliency filters: Contrast based filtering for salient region detection. , 733-740 (2012).

- Hou, X., Harel, J., Koch, C. Image signature: Highlighting sparse salient regions. IEEE Transactions on Pattern Analysis and Machine Intelligence. 34 (1), 194-201 (2011).

- Jiang, H., et al. Salient object detection: A discriminative regional feature integration approach. , 2083-2090 (2013).

- Li, G., Yu, Y. Visual saliency based on multiscale deep features. , 5455-5463 (2015).

- Lee, G., Tai, Y. W., Kim, J. Deep saliency with encoded low level distance map and high-level features. , 660-668 (2016).

- Liu, N., Han, J. Dhsnet: Deep hierarchical saliency network for salient object detection. , 678-686 (2016).
