
Method Article

Tracking Rats in Operant Conditioning Chambers Using a Versatile Homemade Video Camera and DeepLabCut


Summary

This protocol describes how to build a small and versatile video camera, and how to use videos obtained from it to train a neural network to track the position of an animal inside operant conditioning chambers. This is a valuable complement to standard analyses of data logs obtained from operant conditioning tests.

Abstract

Operant conditioning chambers are used to perform a wide range of behavioral tests in the field of neuroscience. The recorded data is typically based on the triggering of lever and nose-poke sensors present inside the chambers. While this provides a detailed view of when and how animals perform certain responses, it cannot be used to evaluate behaviors that do not trigger any sensors. As such, assessing how animals position themselves and move inside the chamber is rarely possible. To obtain this information, researchers generally have to record and analyze videos. Manufacturers of operant conditioning chambers can typically supply their customers with high-quality camera setups. However, these can be very costly and do not necessarily fit chambers from other manufacturers or other behavioral test setups. The current protocol describes how to build an inexpensive and versatile video camera using hobby electronics components. It further describes how to use the image analysis software package DeepLabCut to track the status of a strong light signal, as well as the position of a rat, in videos gathered from an operant conditioning chamber. The former is a great aid when selecting short segments of interest in videos that cover entire test sessions, and the latter enables analysis of parameters that cannot be obtained from the data logs produced by the operant chambers.

Introduction

In the field of behavioral neuroscience, researchers commonly use operant conditioning chambers to assess a wide range of cognitive and psychiatric features in rodents. While there are several manufacturers of such systems, they typically share certain attributes and have an almost standardized design1,2,3. The chambers are generally square or rectangular, with one wall that can be opened for placing animals inside, and one or two of the remaining walls containing components such as levers, nose-poke openings, reward trays, response wheels and lights of various kinds1,2,3. The lights and sensors present in the chambers are used both to control the test protocol and to track the animals’ behaviors1,2,3,4,5. Typical operant conditioning systems allow for a very detailed analysis of how the animals interact with the different operanda and openings present in the chambers. In general, every occasion on which a sensor is triggered can be recorded by the system, and from these data users can obtain detailed log files describing what the animal did during specific steps of the test4,5. While this provides an extensive representation of an animal’s performance, it can only be used to describe behaviors that directly trigger one or more sensors4,5. As such, how the animal positions itself and moves inside the chamber during different phases of the test is not well described6,7,8,9,10. This is unfortunate, as such information can be valuable for fully understanding the animal’s behavior. For example, it can be used to clarify why certain animals perform poorly on a given test6, to describe the strategies that animals might develop to handle difficult tasks6,7,8,9,10, or to appreciate the true complexity of supposedly simple behaviors11,12. To obtain such detailed information, researchers commonly turn to manual analysis of videos6,7,8,9,10,11.

When recording videos from operant conditioning chambers, the choice of camera is critical. The chambers are commonly located in isolation cubicles, and protocols frequently include steps during which no visible light is shining3,6,7,8,9. Therefore, infrared (IR) illumination in combination with an IR-sensitive camera is necessary, as it allows visibility even in complete darkness. Further, the space available for placing a camera inside the isolation cubicle is often very limited, so small cameras with wide-field-of-view lenses (e.g., fisheye lenses) are strongly preferable9. While manufacturers of operant conditioning systems can often supply high-quality camera setups to their customers, these systems can be expensive and do not necessarily fit chambers from other manufacturers or setups for other behavioral tests. However, a notable benefit of these setups over stand-alone video cameras is that they can often interface directly with the operant conditioning systems13,14. This allows them to be set up to record only specific events rather than full test sessions, which can greatly aid the subsequent analysis.

The current protocol describes how to build an inexpensive and versatile video camera using hobby electronics components. The camera uses a fisheye lens, is sensitive to IR illumination and has a set of IR light-emitting diodes (IR LEDs) attached to it. Moreover, it is built to have a flat and slim profile. Together, these features make it ideal for recording videos from most commercially available operant conditioning chambers as well as other behavioral test setups. The protocol further describes how to process videos obtained with the camera and how to use the software package DeepLabCut15,16 to help extract video sequences of interest and track an animal’s movements within them. This partially circumvents the drawback of using a stand-alone camera rather than the integrated solutions provided by manufacturers of operant conditioning systems, and offers a complement to manual scoring of behaviors.

Efforts have been made to write the protocol in a general format to highlight that the overall process can be adapted to videos from different operant conditioning tests. To illustrate certain key concepts, videos of rats performing the 5-choice serial reaction time test (5CSRTT)17 are used as examples.

Protocol

All procedures that include animal handling have been approved by the Malmö-Lund Ethical committee for animal research.

1. Building the video camera

NOTE: A list of the components needed for building the camera is provided in the Table of Materials. Also refer to Figure 1, Figure 2, Figure 3, Figure 4, Figure 5.

- 1.1. Attach the magnetic metal ring (supplied with the fisheye lens package) around the opening of the camera stand (Figure 2A). This will allow the fisheye lens to be placed in front of the camera.

- 1.2. Attach the camera module to the camera stand (Figure 2B). This gives the camera module some stability and offers some protection to its electronic circuits.

- 1.3. Open the camera ports on the camera module and microcomputer (Figure 1) by gently pulling on the edges of their plastic clips (Figure 2C).

- 1.4. Place the ribbon cable in the camera ports so that the silver connectors face the circuit boards (Figure 2C). Lock the cable in place by pushing in the plastic clips of the camera ports.

- 1.5. Place the microcomputer in the plastic case and insert the listed micro SD card (Figure 2D).

NOTE: The micro SD card functions as the microcomputer’s hard drive and contains a full operating system. The listed micro SD card comes with an installation manager preinstalled (New Out Of Box Software, NOOBS). Alternatively, an image of the latest version of the microcomputer’s operating system (Raspbian or Raspberry Pi OS) can be written to a generic micro SD card; for help with this, refer to official web resources18. A class 10 micro SD card with 32 GB of storage is preferable, as larger SD cards might not be fully compatible with the listed microcomputer.

- 1.6. Connect a monitor, keyboard and mouse to the microcomputer, and then connect its power supply.

- 1.7. Follow the steps prompted by the installation guide to perform a full installation of the microcomputer’s operating system (Raspbian or Raspberry Pi OS). When the microcomputer has booted, ensure that it is connected to the internet through either an Ethernet cable or Wi-Fi.

- 1.8. Update the microcomputer’s preinstalled software packages as follows.

- 1.8.1. Open a terminal window (Figure 3A).

- 1.8.2. Type “sudo apt-get update” (excluding quotation marks) and press Enter (Figure 3B). Wait for the process to finish.

- 1.8.3. Type “sudo apt full-upgrade” (excluding quotation marks) and press Enter. Respond to any prompts (e.g., press Y to confirm) and wait for the process to finish.

- 1.9. From the Start menu, select Preferences > Raspberry Pi Configuration (Figure 3C). In the window that opens, go to the Interfaces tab and enable Camera and I2C. This is required for the microcomputer to work with the camera and IR LED modules.

- 1.10. Rename Supplementary File 1 to “Pi_video_camera_Clemensson_2019.py”. Copy it onto a USB memory stick, and from there into the microcomputer’s /home/pi folder (Figure 3D). This file is a Python script that enables video recordings to be made with the button switches attached in step 1.13.

- 1.11. Edit the microcomputer’s rc.local file as outlined below. This makes the computer start the script copied in step 1.10 and switch on the IR LEDs attached in step 1.13 when it boots.

CAUTION: This auto-start feature does not work reliably with microcomputer boards other than the listed model.

- 1.11.1. Open a terminal window, type “sudo nano /etc/rc.local” (excluding quotation marks) and press Enter. This opens a text file (Figure 4A).

- 1.11.2. Use the keyboard’s arrow keys to move the cursor down to the space between “fi” and “exit 0” (Figure 4A).

- 1.11.3. Add the following lines as shown in Figure 4B, writing each on a new line:

sudo i2cset -y 1 0x70 0x00 0xa5 &

sudo i2cset -y 1 0x70 0x09 0x0f &

sudo i2cset -y 1 0x70 0x01 0x32 &

sudo i2cset -y 1 0x70 0x03 0x32 &

sudo i2cset -y 1 0x70 0x06 0x32 &

sudo i2cset -y 1 0x70 0x08 0x32 &

sudo python /home/pi/Pi_video_camera_Clemensson_2019.py &

- 1.11.4. Save the changes by pressing Ctrl + X, then Y, and then Enter.
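The six i2cset lines in step 1.11.3 write register/value pairs to the IR LED module at I2C address 0x70 on bus 1. As a sketch for checking those lines, the Python snippet below regenerates them from a table and decodes the bitmask 0xa5 written to register 0x00. It assumes, as the command list itself suggests, that bit n of register 0x00 switches on the channel whose brightness register is n + 1, so the set bits should match the four registers (0x01, 0x03, 0x06, 0x08) that each receive 0x32 (decimal 50). The names `IR_SETUP`, `i2cset_lines` and `enabled_channels` are hypothetical, and the module's official documentation19 remains the authority on its register map.

```python
# Register/value pairs copied from the rc.local lines in step 1.11.3.
# IR_SETUP and the helper names below are hypothetical illustrations.
I2C_BUS = 1
LED_MODULE_ADDR = 0x70
IR_SETUP = [
    (0x00, 0xA5),  # bitmask selecting which LED channels are switched on
    (0x09, 0x0F),  # register 0x09, written once (not decoded here)
    (0x01, 0x32),  # brightness registers, each set to 0x32 (= 50)
    (0x03, 0x32),
    (0x06, 0x32),
    (0x08, 0x32),
]


def i2cset_lines(pairs=IR_SETUP, bus=I2C_BUS, addr=LED_MODULE_ADDR):
    """Rebuild the rc.local command lines from (register, value) pairs."""
    return [
        f"sudo i2cset -y {bus} 0x{addr:02x} 0x{reg:02x} 0x{val:02x} &"
        for reg, val in pairs
    ]


def enabled_channels(mask):
    """Return the 1-based channel numbers whose bit is set in `mask`."""
    return [bit + 1 for bit in range(8) if (mask >> bit) & 1]


if __name__ == "__main__":
    for line in i2cset_lines():
        print(line)
    print(enabled_channels(0xA5))  # [1, 3, 6, 8]
```

Comparing the output of `i2cset_lines()` with the contents of /etc/rc.local is a quick way to catch a mistyped register or value.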

- 1.12. Solder together the necessary components as indicated in Figure 5A and described below.

- 1.12.1. For each of the two colored LEDs, attach a resistor and a female jumper cable to one leg, and a female jumper cable to the other (Figure 5A). Keep the cables as short as possible. Note which of the LED’s legs is the negative one (typically the shorter one), as this needs to be connected to a ground pin on the microcomputer’s general-purpose input/output (GPIO) header.

- 1.12.2. For each of the two button switches, attach a female jumper cable to each leg (Figure 5A). Make the cables long for one of the switches and short for the other.

- 1.12.3. Assemble the IR LED module by following the instructions in its official web resources19.

- 1.12.4. Cover the soldered joints with shrink tubing to limit the risk of short-circuiting the components.

- 1.13. Switch off the microcomputer and connect the switches and LEDs to its GPIO pins as indicated in Figure 5B and described below.

CAUTION: Wiring the components to the wrong GPIO pins could damage them and/or the microcomputer when the camera is switched on.

- 1.13.1. Connect one LED so that its negative end connects to pin #14 and its positive end to pin #12. This LED will shine when the microcomputer has booted and the camera is ready to be used.

- 1.13.2. Connect the button switch with long cables so that one cable connects to pin #9 and the other to pin #11. This button is used to start and stop video recordings.

NOTE: The script that controls the camera has been written so that this button is unresponsive for a few seconds just after a video recording is started or stopped.

- 1.13.3. Connect the other LED so that its negative end connects to pin #20 and its positive end to pin #13. This LED will shine while the camera is recording a video.

- 1.13.4. Connect the button switch with short cables so that one cable connects to pin #37 and the other to pin #39. This switch is used to switch off the camera.

- 1.13.5. Connect the IR LED module as described in its official web resources19.
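The pin numbers in step 1.13 read most naturally as physical positions on the microcomputer’s 40-pin header: under that assumption every LED’s negative lead, and one leg of each button, lands on a ground pin. The hypothetical sketch below encodes the wiring that way and translates each component into the Broadcom (BCM) GPIO number that most Raspberry Pi Python libraries expect. It is an illustration for checking the wiring against Figure 5B, not a copy of the pin assignments inside Supplementary File 1.

```python
# Partial map of the Raspberry Pi 40-pin header: physical pin -> signal.
# Only the pins used in step 1.13 are listed.
HEADER = {
    9: "GND", 11: "GPIO17", 12: "GPIO18", 13: "GPIO27",
    14: "GND", 20: "GND", 37: "GPIO26", 39: "GND",
}

# Hypothetical description of the wiring in step 1.13 (physical pin numbers).
WIRING = {
    "ready_led": {"negative": 14, "positive": 12},
    "record_button": {"a": 9, "b": 11},
    "recording_led": {"negative": 20, "positive": 13},
    "shutdown_button": {"a": 37, "b": 39},
}


def bcm_pin(component):
    """Return the BCM number of the non-ground pin a component is wired to."""
    signals = [HEADER[pin] for pin in WIRING[component].values()]
    gpio = [s for s in signals if s.startswith("GPIO")]
    if len(gpio) != 1 or signals.count("GND") != 1:
        raise ValueError(f"{component}: expected one GPIO pin and one GND pin")
    return int(gpio[0][len("GPIO"):])


def check_led_polarity(component):
    """For an LED entry: negative lead on ground, positive lead on a GPIO pin."""
    pins = WIRING[component]
    return HEADER[pins["negative"]] == "GND" and HEADER[pins["positive"]].startswith("GPIO")


if __name__ == "__main__":
    for name in WIRING:
        print(name, "-> BCM", bcm_pin(name))
```

For example, gpiozero uses BCM numbering by default, so in that library the ready LED would be addressed as GPIO18 and the start/stop button as GPIO17.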

2. Designing the operant conditioning protocol of interest

NOTE: To use DeepLabCut for tracking the protocol progression in videos recorded from operant chambers, the behavioral protocols need to be structured in specific ways, as explained below.

- 2.1. Set the protocol to use the chamber’s house light, or another strong light signal, as an indicator of a specific step in the protocol (such as the start of individual trials or of the test session) (Figure 6A). This signal is referred to as the “protocol step indicator” in the remainder of this protocol. Its presence allows protocol progression to be tracked in the recorded videos.

- 2.2. Set the protocol to record all responses of interest with individual timestamps relative to when the protocol step indicator becomes active.

3. Recording videos of animals performing the behavioral test of interest

- 3.1. Place the camera on top of the operant chamber so that it records a top view of the area inside (Figure 7).

NOTE: This is particularly suitable for capturing an animal’s general position and posture inside the chamber. Avoid placing the camera’s indicator lights and the IR LED module close to the camera lens.

- 3.2. Start the camera by connecting it to an electrical outlet via the power supply cable.

NOTE: Before first use, it is beneficial to set the focus of the camera using the small tool that accompanies the camera module.

- 3.3. Use the button connected in step 1.13.2 to start and stop video recordings.

- 3.4. Switch off the camera as follows.

- 3.4.1. Push and hold the button connected in step 1.13.4 until the LED connected in step 1.13.1 switches off. This initiates the camera’s shutdown process.

- 3.4.2. Wait until the green LED visible on top of the microcomputer (Figure 1) has stopped blinking.

- 3.4.3. Remove the camera’s power supply.

CAUTION: Unplugging the power supply while the microcomputer is still running can corrupt the data on the micro SD card.

- 3.5. Connect the camera to a monitor, keyboard, mouse and USB storage device, and retrieve the video files from its desktop.

NOTE: The files are named according to the date and time when the video recording was started. However, the microcomputer does not have an internal clock and only updates its time setting when connected to the internet, so file names may not reflect the actual recording time if the camera has been used offline.

- 3.6. Convert the recorded videos from .h264 to .mp4, as the latter works well with DeepLabCut and most media players.

NOTE: There are multiple ways to achieve this. One is described in Supplementary File 2.
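As one alternative, and not necessarily the method described in Supplementary File 2, the raw H.264 stream can be wrapped in an MP4 container with ffmpeg without re-encoding. Because a raw .h264 file carries no reliable timing information, the sketch passes the camera’s frame rate (30 fps) explicitly as an input option. It assumes ffmpeg is installed and on the PATH; the function names `h264_to_mp4_command` and `convert_folder` are hypothetical.

```python
import subprocess
from pathlib import Path


def h264_to_mp4_command(src, fps=30):
    """Build an ffmpeg command that remuxes a raw .h264 file into .mp4."""
    src = Path(src)
    dst = src.with_suffix(".mp4")
    return [
        "ffmpeg",
        "-framerate", str(fps),  # input frame rate; raw H.264 has no timestamps
        "-i", str(src),
        "-c", "copy",            # copy the video stream without re-encoding
        str(dst),
    ]


def convert_folder(folder, fps=30):
    """Convert every .h264 file in `folder` (requires ffmpeg on the PATH)."""
    for src in sorted(Path(folder).glob("*.h264")):
        subprocess.run(h264_to_mp4_command(src, fps), check=True)
```

Remuxing with `-c copy` avoids re-encoding, so it is fast and leaves image quality unchanged.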

4. Analyzing videos with DeepLabCut

NOTE: DeepLabCut is a software package that allows users to define any object of interest in a set of video frames, and subsequently use these to train a neural network to track the objects’ positions in full-length videos15,16. This section gives a rough outline of how to use DeepLabCut to track the status of the protocol step indicator and the position of a rat’s head. Installation and use of DeepLabCut are well described in other published protocols15,16. Each step can be done through specific Python commands or through DeepLabCut’s graphical user interface, as described elsewhere15,16.

- 4.1. Create and configure a new DeepLabCut project by following the steps outlined in the published DeepLabCut protocol16.

- 4.2. Use DeepLabCut’s frame extraction function to extract 700‒900 video frames from one or more of the videos recorded in section 3.

NOTE: If the animals differ considerably in fur pigmentation or other visual characteristics, it is advisable to split the 700‒900 extracted video frames across videos of different animals. This way, one trained network can be used to track different individuals.

- 4.2.1. Make sure to include video frames that display both the active (Figure 8A) and inactive (Figure 8B) state of the protocol step indicator.

- 4.2.2. Make sure to include video frames that cover the range of positions, postures and head movements that the rat may show during the test. These should include frames where the rat is standing still in different areas of the chamber with its head pointing in different directions, as well as frames where the rat is actively moving, entering nose-poke openings and entering the pellet trough.

- 4.3. Use DeepLabCut’s Labeling Toolbox to manually mark the position of the rat’s head in each video frame extracted in step 4.2. Use the mouse cursor to place a “head” label in a central position between the rat’s ears (Figure 8A,B). In addition, mark the position of the chamber’s house light (or other protocol step indicator) in each video frame where it is actively shining (Figure 8A). Leave the house light unlabeled in frames where it is inactive (Figure 8B).

- 4.4. Use DeepLabCut’s “create training data set” and “train network” functions to create a training data set from the video frames labeled in step 4.3 and start training a neural network. Make sure to select “resnet_101” as the network type.

- 4.5. Stop training the network when the training loss has plateaued below 0.01. This may take up to 500,000 training iterations.

NOTE: With a GPU machine with approximately 8 GB of memory and a training set of about 900 video frames (resolution: 1640 x 1232 pixels), the training process has been found to take approximately 72 h.

- 4.6. Use DeepLabCut’s video analysis function to analyze the videos gathered in section 3, using the neural network trained in step 4.4. This provides a .csv file listing the tracked positions of the rat’s head and the protocol step indicator in each video frame of the analyzed videos. In addition, it creates marked-up video files in which the tracked positions are displayed visually (Videos 1-8).

- 4.7. Evaluate the accuracy of the tracking as follows.

- 4.7.1. Use DeepLabCut’s built-in evaluate function to obtain an automated evaluation of the network’s tracking accuracy. This is based on the video frames labeled in step 4.3 and describes how far, on average, the position tracked by the network is from the manually placed label.

- 4.7.2. Select one or more brief video sequences (about 100‒200 video frames each) in the marked-up videos obtained in step 4.6. Go through the sequences frame by frame, and count the frames in which the labels correctly indicate the positions of the rat’s head, tail, etc., and the frames in which the labels are placed in erroneous positions or not shown.

- 4.7.3. If the label of a body part or object is frequently lost or misplaced, identify the situations where tracking fails. Extract and label additional frames from these occasions by repeating steps 4.2 and 4.3. Then retrain the network and reanalyze the videos by repeating steps 4.4-4.7. Ultimately, a tracking accuracy (correctly labeled frames as a percentage of the frames checked in step 4.7.2) of >90% should be achieved.
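Steps 4.1-4.7 map onto a handful of functions in DeepLabCut’s Python API. The outline below is written against the DeepLabCut 2.x interface described in the published protocol16; names and defaults may differ in other versions, so it is a sketch rather than a tested pipeline, and the project name, experimenter and body-part names are placeholders. Two helpers are added for illustration and are not DeepLabCut functions: `frames_per_video` spreads roughly 800 frames (the middle of the 700‒900 range) over several videos, since DeepLabCut extracts `numframes2pick` frames per video as set in the project’s config.yaml, and `loss_has_plateaued` is a hypothetical rule of thumb for the stopping criterion in step 4.5, applied to a list of logged training losses.

```python
import math


def frames_per_video(n_videos, target_total=800):
    """Frames to extract per video so the total is about target_total."""
    return math.ceil(target_total / n_videos)


def loss_has_plateaued(losses, threshold=0.01, window=5, tolerance=0.1):
    """Hypothetical stopping rule for step 4.5: the last `window` logged losses
    are all below `threshold` and their spread is under `tolerance` x their mean."""
    recent = losses[-window:]
    if len(recent) < window or max(recent) >= threshold:
        return False
    mean = sum(recent) / window
    return max(recent) - min(recent) <= tolerance * mean


def create_project(videos, working_directory="."):
    """Step 4.1 (DeepLabCut 2.x API). Returns the path to config.yaml."""
    import deeplabcut  # imported lazily so the helpers above run without it

    return deeplabcut.create_new_project(
        "operant-tracking", "experimenter", videos,  # placeholder names
        working_directory=working_directory, copy_videos=False,
    )


def extract_and_label(config):
    """Steps 4.2-4.3. Run after editing config.yaml by hand: bodyparts set to
    [head, houselight] and numframes2pick set to frames_per_video(n_videos)."""
    import deeplabcut

    deeplabcut.extract_frames(config, mode="automatic", algo="kmeans", userfeedback=False)
    deeplabcut.label_frames(config)  # opens the labeling GUI


def train_and_analyze(config, videos):
    """Steps 4.4, 4.6 and 4.7.1, run once all labels have been saved."""
    import deeplabcut

    deeplabcut.create_training_dataset(config, net_type="resnet_101")
    # maxiters is only an upper bound on the number of training iterations.
    deeplabcut.train_network(config, maxiters=500000)
    deeplabcut.analyze_videos(config, videos, videotype=".mp4", save_as_csv=True)
    deeplabcut.create_labeled_video(config, videos, videotype=".mp4")
    deeplabcut.evaluate_network(config, plotting=True)
```

The functions are split at the points where manual work is needed (editing config.yaml and labeling frames), so each stage can be rerun on its own when frames are added in step 4.7.3.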

5. Obtaining coordinates for points of interest in the operant chambers

- 5.1. Use DeepLabCut as described in step 4.3 to manually mark points of interest in the operant chamber (such as nose-poke openings, levers, etc.) in a single video frame (Figure 8C). These are chosen according to study-specific interests, although the position of the protocol step indicator should always be included.

- 5.2. Retrieve the coordinates of the marked points of interest from the .csv file that DeepLabCut automatically stores under “labeled-data” in the project folder.
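DeepLabCut’s .csv files (for manual labels, typically named CollectedData_<scorer>.csv) start with three header rows whose first cells read scorer, bodyparts and coords, followed by one row per labeled image or video frame. The sketch below parses that layout with Python’s standard csv module and averages each labeled point, which for a single labeled frame simply returns its coordinates. Because the number of leading index columns may vary between DeepLabCut versions, the parser counts them from the coords row rather than hard-coding it. The function names are hypothetical; pandas’ `read_csv` with a multi-row header is a common alternative.

```python
import csv
import io


def parse_dlc_csv(text):
    """Parse DeepLabCut-style CSV text into (index, {bodypart: {coord: value}}) rows.

    Assumes three header rows (scorer, bodyparts, coords); the leading index
    columns are those before the first 'x' in the coords row. Empty cells
    (unlabeled points) become None.
    """
    rows = list(csv.reader(io.StringIO(text)))
    header = {row[0]: row for row in rows[:3]}
    bodyparts, coords = header["bodyparts"], header["coords"]
    n_index = coords.index("x")
    frames = []
    for row in rows[3:]:
        if not row:
            continue
        values = {}
        for col in range(n_index, len(row)):
            cell = row[col]
            value = float(cell) if cell.strip() else None
            values.setdefault(bodyparts[col], {})[coords[col]] = value
        frames.append((tuple(row[:n_index]), values))
    return frames


def reference_points(frames):
    """Mean (x, y) of every point that is labeled in at least one row."""
    sums = {}
    for _, values in frames:
        for part, c in values.items():
            if c.get("x") is not None and c.get("y") is not None:
                sx, sy, n = sums.get(part, (0.0, 0.0, 0))
                sums[part] = (sx + c["x"], sy + c["y"], n + 1)
    return {part: (sx / n, sy / n) for part, (sx, sy, n) in sums.items()}
```

The same parser also reads the per-frame .csv files from step 4.6, which have one index column (the frame number) and an extra likelihood value for each body part.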

6. Identifying video segments where the protocol step indicator is active

- 6.1. Load the .csv files obtained from the DeepLabCut video analysis in step 4.6 into a data management software of choice.

NOTE: Due to the amount and complexity of the data obtained from DeepLabCut and operant conditioning systems, data management is best done through automated analysis scripts. To get started with this, refer to entry-level guides available elsewhere20,21,22.

- 6.2. Identify the video segments in which the protocol step indicator is tracked within 60 pixels of the position obtained in section 5. These are the periods in which the protocol step indicator is active (Figure 6B).

NOTE: During video segments where the protocol step indicator is not shining, the marked-up video might suggest that DeepLabCut is not tracking it to any position. However, this is rarely the case; instead, it is typically tracked to multiple scattered locations.

- 6.3. Extract the exact starting point of each period in which the protocol step indicator is active (Figure 6C: 1).
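Steps 6.2 and 6.3 amount to a distance test per frame followed by finding where that test switches from false to true. A minimal sketch, assuming the tracked (x, y) of the protocol step indicator has already been taken from the DeepLabCut .csv as one coordinate pair per frame, and that the reference position comes from section 5. The optional `min_frames` argument, which ignores active runs shorter than a given number of frames, is an added assumption rather than part of the protocol; with its default of 1 the functions follow step 6.2 as written. All names are hypothetical.

```python
import math


def active_frames(track, reference, radius=60.0):
    """True for frames where the tracked point lies within `radius` px of `reference`."""
    rx, ry = reference
    return [
        x is not None and y is not None and math.hypot(x - rx, y - ry) <= radius
        for x, y in track
    ]


def active_periods(flags, min_frames=1):
    """Return (start, end) frame indices (end exclusive) of runs of True."""
    periods, start = [], None
    for i, flag in enumerate(flags + [False]):  # sentinel closes a final run
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            if i - start >= min_frames:
                periods.append((start, i))
            start = None
    return periods


def onsets(track, reference, radius=60.0, min_frames=1):
    """Step 6.3: first frame of each period where the indicator is active."""
    flags = active_frames(track, reference, radius)
    return [start for start, _ in active_periods(flags, min_frames)]
```

Because an inactive indicator is usually tracked to scattered positions far from the reference point, a single distance threshold is enough to separate the two states in most frames.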

7. Identifying video segments of interest

- 7.1. Consider the points at which the protocol step indicator becomes active (Figure 6C: 1) and the timestamps of responses recorded by the operant chambers (section 2, Figure 6C: 2).

- 7.2. Use this information to determine which video segments cover specific events of interest, such as inter-trial intervals, responses, reward retrievals, etc. (Figure 6C: 3, Figure 6D).

NOTE: For this, keep in mind that the camera described herein records videos at 30 fps.

- 7.3. Note the specific video frames that cover these events of interest.

- 7.4. (Optional) Edit video files of full test sessions to include only the specific segments of interest.

NOTE: There are multiple ways to achieve this. One is described in Supplementary Files 2 and 3. This greatly helps when storing large numbers of videos and can also make reviewing and presenting results more convenient.
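Because responses are timestamped relative to the moment the protocol step indicator switches on (step 2.2), converting a logged time t (in seconds) to a video frame only needs the onset frame from step 6.3 and the camera’s frame rate: frame = onset frame + round(t × 30). The sketch below applies that conversion and pads each event into a segment. The window lengths `before_s` and `after_s` are hypothetical choices, and the sketch assumes the n-th detected onset corresponds to the n-th trial in the operant log, which should be checked if the recording started after the session began.

```python
FPS = 30  # frame rate of the camera described in section 1


def event_frame(onset_frame, t_seconds, fps=FPS):
    """Video frame of an event logged t_seconds after the indicator onset."""
    return onset_frame + round(t_seconds * fps)


def event_segments(onset_frames, trial_events, before_s=1.0, after_s=2.0, fps=FPS):
    """Map per-trial event times to (label, first_frame, last_frame) segments.

    `trial_events` holds one dict per trial, e.g. {"response": 1.4}, with times
    in seconds relative to that trial's onset, in the same order as `onset_frames`.
    """
    if len(trial_events) > len(onset_frames):
        raise ValueError("more logged trials than detected indicator onsets")
    segments = []
    for onset, events in zip(onset_frames, trial_events):
        for label, t in events.items():
            centre = event_frame(onset, t, fps)
            first = max(0, centre - round(before_s * fps))
            segments.append((label, first, centre + round(after_s * fps)))
    return segments
```

Dividing the resulting frame numbers by 30 gives times in seconds, which is the form most video editors expect when cutting segments as in step 7.4.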

8. Analyzing the position and movements of an animal during specific video segments

- 8.1. Subset the full head-position tracking data obtained from DeepLabCut in step 4.6 to include only the video segments noted in section 7.

- 8.2. Calculate the position of the animal’s head relative to one or more of the reference points selected in section 5 (Figure 8C). This enables comparisons of tracking and position across different videos.

- 8.3. Perform relevant in-depth analyses of the animal’s position and movements.

NOTE: The specific analyses performed will be strongly study-specific. Some examples of parameters that can be analyzed are given below.

- 8.3.1. Visualize path traces by plotting all coordinates detected during a selected period within one graph.

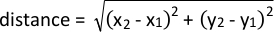

- 8.3.2. Analyze proximity to a given point of interest by using the following formula:

- 8.3.3. Analyze changes in speed during a movement by calculating the distance between tracked coordinates in consecutive frames and dividing it by 1/fps of the camera (i.e., the time between two frames).
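The calculations in steps 8.2 and 8.3 reduce to a few lines of arithmetic on the tracked head coordinates. The proximity formula referred to in step 8.3.2 is not reproduced in this version of the text; the sketch assumes plain Euclidean distance in pixels, d = sqrt((x - x_ref)^2 + (y - y_ref)^2). Speed follows step 8.3.3: the distance between consecutive frames divided by the frame interval 1/fps, i.e., multiplied by fps, giving pixels per second. The sketch assumes frames with failed tracking have already been removed or interpolated, does not convert pixels to centimeters (which, with a fisheye lens, would only be approximate), and uses hypothetical function names.

```python
import math


def relative_to(track, reference):
    """Step 8.2: express (x, y) head positions relative to a reference point."""
    rx, ry = reference
    return [(x - rx, y - ry) for x, y in track]


def distance_to(track, point):
    """Per-frame Euclidean distance (px) between the head and a point of interest."""
    px, py = point
    return [math.hypot(x - px, y - py) for x, y in track]


def speeds(track, fps=30):
    """Per-step speed (px/s): distance between consecutive frames / (1 / fps)."""
    return [
        math.hypot(x1 - x0, y1 - y0) * fps
        for (x0, y0), (x1, y1) in zip(track, track[1:])
    ]
```

A path trace (step 8.3.1) is then simply the same coordinates drawn as a line, for example with matplotlib’s `plot(xs, ys)`; calling `invert_yaxis()` on the axes keeps the plot in the same orientation as the video image.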

Results

Video camera performance

The representative results were gathered in operant conditioning chambers for rats with floor areas of 28.5 cm x 25.5 cm, and heights of 28.5 cm. With the fisheye lens attached, the camera captures the full floor area and large parts of the surrounding walls, when placed above the chamber (Figure 7A). As such, a good view can be obtained, even if the camera is placed off-center on the chamber’s top. This should hold ...

Discussion

This protocol describes how to build an inexpensive and flexible video camera that can be used to record videos from operant conditioning chambers and other behavioral test setups. It further demonstrates how to use DeepLabCut to track a strong light signal within these videos, and how that can be used to aid in identifying brief video segments of interest in video files that cover full test sessions. Finally, it describes how to use the tracking of a rat’s head to complement the analysis of behaviors during operan...

Disclosures

While materials and resources from the Raspberry Pi Foundation have been used and cited in this manuscript, the foundation was not actively involved in the preparation or use of equipment and data in this manuscript. The same is true for Pi-Supply. The authors have nothing to disclose.

Acknowledgements

This work was supported by grants from the Swedish Brain Foundation, the Swedish Parkinson Foundation, and the Swedish Government Funds for Clinical Research (M.A.C.), as well as the Wenner-Gren Foundations (M.A.C., E.K.H.C.), the Åhlén Foundation (M.A.C.) and the foundation Blanceflor Boncompagni Ludovisi, née Bildt (S.F.).

Materials

| Name | Company | Catalog Number | Comments |
| --- | --- | --- | --- |
| 32 GB micro SD card with New Out Of Box Software (NOOBS) preinstalled | The Pi Hut (https://thepihut.com) | 32GB | |
| 330-ohm resistor | The Pi Hut (https://thepihut.com) | 100287 | This catalog number is for a package of mixed resistors that includes 330-ohm resistors. |
| Camera module (Raspberry Pi NoIR camera v.2) | The Pi Hut (https://thepihut.com) | 100004 | |
| Camera ribbon cable (Raspberry Pi Zero camera cable stub) | The Pi Hut (https://thepihut.com) | MMP-1294 | Only needed if a Raspberry Pi Zero is used. If another Raspberry Pi board is used, a suitable camera ribbon cable accompanies the camera module. |
| Colored LEDs | The Pi Hut (https://thepihut.com) | ADA4203 | This catalog number is for a package of LEDs in mixed colors. Any color can be used. |
| Female-female jumper cables | The Pi Hut (https://thepihut.com) | ADA266 | |
| IR LED module (Bright Pi) | Pi Supply (https://uk.pi-supply.com) | PIS-0027 | |
| Microcomputer motherboard (Raspberry Pi Zero board with presoldered headers) | The Pi Hut (https://thepihut.com) | 102373 | Other Raspberry Pi boards can also be used, although the method for automatically starting the Python script only works with the Raspberry Pi Zero. If other models are used, the Python script needs to be started manually. |
| Push button switch | The Pi Hut (https://thepihut.com) | ADA367 | |
| Raspberry Pi power supply cable | The Pi Hut (https://thepihut.com) | 102032 | |
| Raspberry Pi Zero case | The Pi Hut (https://thepihut.com) | 102118 | |
| Raspberry Pi, Mod My Pi, camera stand with magnetic fisheye lens and magnetic metal ring attachment | The Pi Hut (https://thepihut.com) | MMP-0310-KIT | |

References

- Pritchett, K., Mulder, G. B. Operant conditioning. Contemporary Topics in Laboratory Animal Science. 43 (4), (2004).

- Clemensson, E. K. H., Novati, A., Clemensson, L. E., Riess, O., Nguyen, H. P. The BACHD rat model of Huntington disease shows slowed learning in a Go/No-Go-like test of visual discrimination. Behavioural Brain Research. 359, 116-126 (2019).

- Asinof, S. K., Paine, T. A. The 5-choice serial reaction time task: a task of attention and impulse control for rodents. Journal of Visualized Experiments. (90), e51574 (2014).

- Coulbourn Instruments. Graphic State: Graphic State 4 user's manual. Coulbourn Instruments, 12-17 (2013).

- Med Associates Inc. Med-PC IV: Med-PC IV programmer's manual. Med Associates Inc., 21-44 (2006).

- Clemensson, E. K. H., Clemensson, L. E., Riess, O., Nguyen, H. P. The BACHD rat model of Huntington disease shows signs of fronto-striatal dysfunction in two operant conditioning tests of short-term memory. PLoS One. 12 (1), (2017).

- Herremans, A. H. J., Hijzen, T. H., Welborn, P. F. E., Olivier, B., Slangen, J. L. Effect of infusion of cholinergic drugs into the prefrontal cortex area on delayed matching to position performance in the rat. Brain Research. 711 (1-2), 102-111 (1996).

- Chudasama, Y., Muir, J. L. A behavioral analysis of the delayed non-matching to position task: the effects of scopolamine, lesions of the fornix and of the prelimbic region on mediating behaviours by rats. Psychopharmacology. 134 (1), 73-82 (1997).

- Talpos, J. C., McTighe, S. M., Dias, R., Saksida, L. M., Bussey, T. J. Trial-unique, delayed nonmatching-to-location (TUNL): A novel, highly hippocampus-dependent automated touchscreen test of location memory and pattern separation. Neurobiology of Learning and Memory. 94 (3), 341 (2010).

- Rayburn-Reeves, R. M., Moore, M. K., Smith, T. E., Crafton, D. A., Marden, K. L. Spatial midsession reversal learning in rats: Effects of egocentric cue use and memory. Behavioural Processes. 152, 10-17 (2018).

- Gallo, A., Duchatelle, E., Elkhessaimi, A., Le Pape, G., Desportes, J. Topographic analysis of the rat's behavior in the Skinner box. Behavioural Processes. 33 (3), 318-328 (1995).

- Iversen, I. H. Response-initiated imaging of operant behavior using a digital camera. Journal of the Experimental Analysis of Behavior. 77 (3), 283-300 (2002).

- Med Associates Inc. Video monitor: Video monitor SOF-842 user's manual. Med Associates Inc., 26-30 (2004).

- Coulbourn Instruments. Available from: https://www.coulbourn.com/product_p/h39-16.htm (2020).

- Mathis, A., et al. DeepLabCut: markerless pose estimation of user-defined body parts with deep learning. Nature Neuroscience. 21 (9), 1281-1289 (2018).

- Nath, T., Mathis, A., Chen, A. C., Patel, A., Bethge, M., Mathis, M. W. Using DeepLabCut for 3D markerless pose estimation across species and behaviors. Nature Protocols. 14 (7), 2152-2176 (2019).

- Bari, A., Dalley, J. W., Robbins, T. W. The application of the 5-choice serial reaction time task for the assessment of visual attentional processes and impulse control in rats. Nature Protocols. 3 (5), 759-767 (2008).

- Raspberry Pi Foundation. Available from: https://thepi.io/how-to-install-raspbian-on-the-raspberry-pi/ (2020).

- Pi-Supply. Available from: https://learn.pi-supply.com/make/bright-pi-quickstart-faq/ (2018).

- Python. Available from: https://wiki.python.org/moin/BeginnersGuide/NonProgrammers (2020).

- MathWorks. Available from: https://mathworks.com/academia/highschool/ (2020).

- Cran.R-Project.org. Available from: https://cran.r-project.org/manuals.html (2020).

- Liu, Y., Tian, C., Huang, Y. Critical assessment of correction methods for fisheye lens distortion. The International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences. (2016).

- Pereira, T. D., et al. Fast animal pose estimation using deep neural networks. Nature Methods. 16 (1), 117-125 (2019).

- Graving, J. M., et al. DeepPoseKit, a software toolkit for fast and robust animal pose estimation using deep learning. eLife. 8, e47994 (2019).

- Geuther, B. Q., et al. Robust mouse tracking in complex environments using neural networks. Communications Biology. 2, 124 (2019).


Copyright © 2025 MyJoVE Corporation. All rights reserved