
Method Article

Eye Tracking During A Complex Aviation Task For Insights Into Information Processing


Summary

Eye tracking is a non-invasive method to probe information processing. This article describes how eye tracking can be used to study gaze behavior during a flight simulation emergency task in low-time pilots (i.e., <350 flight hours).

Abstract

Eye tracking has been used extensively as a proxy to gain insight into the cognitive, perceptual, and sensorimotor processes that underlie skill performance. Previous work has shown that traditional and advanced gaze metrics reliably demonstrate robust differences in pilot expertise, cognitive load, fatigue, and even situation awareness (SA).

This study describes the methodology for using a wearable eye tracker and a gaze mapping algorithm to capture naturalistic head and eye movements (i.e., gaze) in a high-fidelity motionless flight simulator. The method outlined in this paper describes area of interest (AOI)-based gaze analyses, which provide more context about where participants are looking, and dwell time, which indicates how efficiently they are processing the fixated information. The protocol illustrates the utility of a wearable eye tracker and computer vision algorithm for assessing changes in gaze behavior in response to an unexpected in-flight emergency.

Representative results demonstrated that gaze was significantly impacted when the emergency event was introduced. Specifically, attention allocation, gaze dispersion, and gaze sequence complexity significantly decreased and became highly concentrated on looking outside the front window and at the airspeed gauge during the emergency scenario (all p values < 0.05). The utility and limitations of employing a wearable eye tracker in a high-fidelity motionless flight simulation environment to understand the spatiotemporal characteristics of gaze behavior and its relation to information processing in the aviation domain are discussed.

Introduction

Humans predominantly interact with the world around them by first moving their eyes and head to focus their line of sight (i.e., gaze) toward a specific object or location of interest. This is particularly true in complex environments such as aircraft cockpits where pilots are faced with multiple competing stimuli. Gaze movements enable the collection of high-resolution visual information that allows humans to interact with their environment in a safe and flexible manner1, which is of paramount importance in aviation. Studies have shown that eye movements and gaze behavior provide insight into underlying perceptual, cognitive, and motor processes across various tasks1,2,3. Moreover, where we look has a direct influence on the planning and execution of upper limb movements3. Therefore, analyzing gaze behavior during aviation tasks provides an objective, non-invasive method that could reveal how eye movement patterns relate to various aspects of information processing and performance.

Several studies have demonstrated an association between gaze and task performance across various laboratory paradigms, as well as complex real-world tasks (e.g., operating an aircraft). For instance, task-relevant areas tend to be fixated more frequently and for longer total durations, suggesting that fixation location, frequency, and dwell time are proxies for the allocation of attention in neurocognitive and aviation tasks4,5,6. Highly successful performers and experts show significant fixation biases toward task-critical areas compared to less successful performers or novices4,7,8. Spatiotemporal aspects of gaze are captured through changes in dwell time patterns across various areas of interest (AOIs) or through measures of fixation distribution (e.g., Stationary Gaze Entropy: SGE). In laboratory-based paradigms, average fixation duration, scan path length, and gaze sequence complexity (i.e., Gaze Transition Entropy: GTE) tend to increase because more scanning and processing are required to solve problems and elaborate on more challenging task goals/solutions4,7.

Conversely, aviation studies have demonstrated that scan path length and gaze sequence complexity decrease with task complexity and cognitive load. This discrepancy highlights the fact that understanding the task components and the demands of the paradigm being employed is critical for the accurate interpretation of gaze metrics. Altogether, research to date supports that gaze measures provide meaningful, objective insight into task-specific information processing that underlies the differences in task difficulty, cognitive load, and task performance. With advances in eye tracking technology (i.e., portability, calibration, and cost), examining gaze behavior in 'the wild' is an emerging area of research with tangible applications toward advancing occupational training in the fields of medicine9,10,11 and aviation12,13,14.

The current work aims to further examine the utility of using gaze-based metrics to gain insight into information processing by specifically employing a wearable eye tracker during an emergency flight simulation task in low-time pilots. This study expands on previous work that used a head-stabilized eye tracker (i.e., EyeLink II) to examine differences in gaze behavior metrics as a function of flight difficulty (i.e., changes in weather conditions)5. The work presented in this manuscript also extends other work that described the methodological and analytical approaches for using eye tracking in a virtual reality system15. Our study used a higher-fidelity motionless simulator and reports additional analyses of eye movement data (i.e., entropy). This type of analysis has been reported in previous papers; however, a limitation of the current literature is the lack of standardization in reporting the analytical steps. For example, reporting how areas of interest are defined is critical because these definitions directly influence the resultant entropy values16.

To summarize, the current work examined traditional and dynamic gaze behavior metrics while task difficulty was manipulated via the introduction of an in-flight emergency scenario (i.e., unexpected total engine failure). It was expected that the introduction of an in-flight emergency scenario would provide insight into gaze behavior changes underlying information processing during more challenging task conditions. The study reported here is part of a larger study examining the utility of eye tracking in a flight simulator to inform competency-based pilot training. The results presented here have not been previously published.


Protocol

The following protocol can be applied to studies involving a wearable eye tracker and a flight simulator. The current study involves eye-tracking data recorded alongside complex aviation-related tasks in a flight simulator (see Table of Materials). The simulator was configured to be representative of a Cessna 172 and was used with the necessary instrument panel (steam gauge configuration), an avionics/GPS system, an audio/lights panel, a breaker panel, and a Flight Control Unit (FCU) (see Figure 1). The flight simulator device used in this study is certifiable for training purposes and is used by the local flight school to train the skill sets required to respond to various emergency scenarios, such as engine failure, in a low-risk environment. All participants in this study were licensed pilots; therefore, they had previously experienced the simulated engine failure scenario during their training. This study was approved by the University of Waterloo's Office of Research Ethics (43564; Date: Nov 17, 2021). All participants (N = 24; 14 males, 10 females; mean age = 22 years; flight hours range: 51-280 h) provided written informed consent.

Figure 1: Flight simulator environment. An illustration of the flight simulator environment. The participant’s point of view of the cockpit replicated that of a pilot flying a Cessna 172, preset for a downwind-to-base-to-final approach to Waterloo International Airport, Breslau, Ontario, CA. The orange boxes represent the ten main areas of interest used in the gaze analyses. These include the (1) airspeed, (2) attitude, (3) altimeter, (4) turn coordinator, (5) heading, (6) vertical speed, and (7) power indicators, as well as the (8) front, (9) left, and (10) right windows. This figure was modified from Ayala et al.5.

1. Participant screening and informed consent

- Screen the participant via a self-report questionnaire based on inclusion/exclusion criteria2,5: possession of at least a Private Pilot License (PPL), normal or corrected-to-normal vision, and no previous diagnosis of a neuropsychiatric/neurological disorder or learning disability.

- Inform the participant about the study objectives and procedures through a detailed briefing handled by the experimenter and the supervising flight instructor/simulator technician. Review the risks outlined in the institution's ethics review board-approved consent document. Answer any questions about the potential risks. Obtain written informed consent before beginning any study procedures.

2. Hardware/software requirements and start-up

- Flight simulator (typically completed by the simulator technician)

- Turn the simulator and projector screens on. If one of the projectors does not turn on at the same time as the others, restart the simulator.

- On the Instruction Screen, press the Presets tab and verify that the required Position and/or Weather presets are available. If needed, create a new type of preset; consult the technician for help.

- Collection laptop

- Turn on the laptop and log in.

- When prompted, either select a preexisting profile or make one if testing a new participant. Alternatively, select the Guest option to overwrite its last calibration.

- To create a new profile, scroll to the end of the profile list and click Add.

- Set the profile ID to the participant ID. This profile ID will be used to tag the folder, which holds eye tracking data after a recording is complete.

- Glasses calibration

NOTE: The glasses must stay connected to the laptop to record. Calibration with the box only needs to be completed once at the start of data collection.

- Open the eye tracker case and take out the glasses.

- Connect the USB to micro-USB cable from the laptop to the glasses. If prompted on the laptop, update the firmware.

- Locate the black calibration box inside the eye tracker case.

- On the collection laptop, in the eye tracking Hub, choose Tools | Device Calibration.

- Place the glasses inside the box and press Start on the popup window to begin calibration.

- Remove the glasses from the box once calibration is complete.

- Nosepiece fit

- Select the nosepiece.

- Instruct the participant to sit in the cockpit and put on the glasses.

- In eye tracking Hub, navigate to File | Settings | Nose Wizard.

- Check the Adjust fit of your glasses box on the left side of the screen. If the fit is Excellent, proceed to the next step. Otherwise, click the box.

- Tell the participant to follow the fit recommendation instructions shown onscreen: set the nosepiece, adjust the glasses to sit comfortably, and look straight ahead at the laptop.

- If required, swap out the nosepiece. Pinch the nosepiece in its middle, slide it out from the glasses, and then slide another one in. Continue to test the different nosepieces until the one that fits the participant the best is identified.

- Air Traffic Control (ATC) calls

NOTE: If the study requires ATC calls, have the participant bring their own headset or use the lab headset. Complete eyeball calibration only after the participant puts on the headset, because the headset can shift the glasses on the head and reduce calibration accuracy.

- Check that the headset is plugged into the jack on the left underside of the instrument panel.

- Instruct the participant to put the headset on. Ask them not to touch it or take it off until the recording is finished.

NOTE: Recalibration is required each time the headset (and, therefore, the glasses) is moved.

- Do a radio check.

- Eyeball calibration

NOTE: Whenever the participant shifts the glasses on their head, eyeball calibration must be repeated. Ask the participant not to touch the glasses until their trials are over.

- In eye tracking Hub, navigate to the parameter box at the left of the screen.

- Check the calibration mode and choose fixed gaze or fixed head accordingly.

- Check that the calibration points are a 5 x 5 grid, for 25 points total.

- Check the validation mode and make sure it matches the calibration mode.

- Examine the eye tracking outputs and verify that everything that needs to be recorded for the study is checked using the tick boxes.

- Click File | Settings | Advanced and check that the sampling rate is 250 Hz.

- Click the Calibrate your eye tracking box on the screen. The calibration instructions vary with the calibration mode. To follow the current study, use the fixed gaze calibration mode: instruct the participant to move their head until the onscreen box overlaps and aligns with the black square. Then, ask the participant to focus their gaze on the crosshair in the black square and press the space bar.

- Press the Validate your setup box. The instructions are the same as for calibration. Check that the validation MAE (mean absolute error) is <1°. If it is not, repeat the calibration and validation steps.

- Press Save Calibration to save the calibration to the profile each time calibration and validation are completed.

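The validation criterion above (MAE <1°) is the average angular difference between the validation targets and the gaze directions estimated by the eye tracker. The eye tracking Hub computes and reports this value itself; the Python sketch below only illustrates the arithmetic, assuming gaze and target directions are available as 3D direction vectors. The vector format and all function names are hypothetical and are not part of the Hub software.

```python
import math
from typing import Sequence, Tuple

# Hypothetical input format: 3D direction vectors (they need not be unit length).
Vec3 = Tuple[float, float, float]


def angular_error_deg(gaze: Vec3, target: Vec3) -> float:
    """Angle in degrees between a gaze direction and a target direction."""
    # atan2(|cross|, dot) stays accurate for the small angles expected here.
    cx = gaze[1] * target[2] - gaze[2] * target[1]
    cy = gaze[2] * target[0] - gaze[0] * target[2]
    cz = gaze[0] * target[1] - gaze[1] * target[0]
    dot = sum(g * t for g, t in zip(gaze, target))
    return math.degrees(math.atan2(math.sqrt(cx * cx + cy * cy + cz * cz), dot))


def validation_mae(gaze: Sequence[Vec3], targets: Sequence[Vec3]) -> float:
    """Mean absolute angular error (degrees) across the validation points."""
    if len(gaze) != len(targets) or not gaze:
        raise ValueError("need one gaze sample per validation target")
    errors = [angular_error_deg(g, t) for g, t in zip(gaze, targets)]
    return sum(errors) / len(errors)


def passes_validation(gaze: Sequence[Vec3], targets: Sequence[Vec3],
                      threshold_deg: float = 1.0) -> bool:
    """True when the MAE is below the protocol's 1 degree criterion."""
    return validation_mae(gaze, targets) < threshold_deg


# Toy example: one target is hit exactly, the other is missed by 0.5 degrees.
half_deg = math.radians(0.5)
gaze_dirs = [(0.0, 0.0, 1.0), (math.sin(half_deg), 0.0, math.cos(half_deg))]
target_dirs = [(0.0, 0.0, 1.0), (0.0, 0.0, 1.0)]
print(round(validation_mae(gaze_dirs, target_dirs), 3))  # 0.25
print(passes_validation(gaze_dirs, target_dirs))  # True
```

If the MAE reported by the Hub is 1° or more, the protocol calls for repeating calibration and validation rather than adjusting the data afterward.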

- iPad usage

NOTE: The iPad is located to the left of the instrument panel (see Figure 1). It is typically used for questionnaires after each flight.

- Turn the iPad on and make sure it is connected to the Internet.

- Open a window in Safari and enter the link for the study questionnaire.

3. Data collection

NOTE: Repeat these steps for each trial. It is recommended that the laptop is placed on the bench outside the cockpit.

- On the flight simulator computer, in the instruction screen, press Presets, and then choose the desired Position preset to be simulated. Press the Apply button and watch the screens surrounding the simulator to verify that the change happens.

- Repeat the previous step to apply the Weather preset.

- Give the participant any specific instructions about the trial or their flight path. This includes telling them to change any settings on the instrument panel before they begin.

- In the instruction screen, press the orange STOPPED button to start data collection. The color will change to green, and the text will say FLYING. Be sure to give a verbal cue to the participant so that they know they can start flying the aircraft. The recommended cue is "3, 2, 1, you have controls" as the orange stop button is pressed.

- On the collection laptop, press Start Recording so that the eye tracker data are synced with the flight simulator data.

- When the participant has completed their circuit and landed, wait for the aircraft to stop moving.

NOTE: It is important to wait because, during postprocessing, data are truncated when the groundspeed settles at 0. This provides a consistent endpoint for all trials.

- In the instruction screen, press the green FLYING button. The color will return to orange and the text will say STOPPED. Give a verbal cue during this step when data collection is about to end. The recommended cue is "3, 2, 1, stop".

- Instruct the participant to complete the posttrial questionnaire(s) on the iPad. Refresh the page for the next trial.

NOTE: The current study used the Situation Awareness Rating Technique (SART) self-rating questionnaire as the only posttrial questionnaire17.

4. Data processing and analysis

- Flight simulator data

NOTE: The .csv file copied from the flight simulator contains more than 1,000 parameters that can be controlled in the simulator. The main performance measures of interest are listed and described in Table 1.

- For each participant, calculate the success rate using Eq (1) by taking the percentage across the task conditions. Failed trials are identified by predetermined criteria programmed within the simulator that terminate the trial automatically on touchdown based on plane orientation and vertical speed. Carry out posttrial verification to ensure that these criteria align with actual aircraft limitations (i.e., Cessna 172 landing gear damage/crash is evident at vertical speeds > 700 feet/min [fpm] upon touchdown).

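$$\text{Success rate (\%)} = \frac{\text{Number of successful landing trials}}{\text{Total number of trials}} \times 100 \qquad (1)$$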

NOTE: Lower success rate values indicate worse outcomes because they reflect fewer successful landing attempts.

- For each trial, calculate completion time from the timestamp at which the plane stopped on the runway (i.e., GroundSpeed = 0 knots).

NOTE: A shorter completion time does not always equate to better performance. Consider how the task conditions (e.g., additional winds, emergency scenarios) are expected to affect completion time.

- For each trial, determine landing hardness from the aircraft vertical speed (fpm) at the moment the aircraft first touches down on the runway. Take this value at the timestamp of the first change in AircraftOnGround status from 0 (in air) to 1 (on ground).

NOTE: Values from -700 fpm to 0 fpm are considered safe, with values closer to 0 representing softer (i.e., better) landings. Negative values represent downward vertical speed; positive values represent upward vertical speed.

- For each trial, calculate the landing error (°) as the difference between the touchdown coordinates and the reference point on the runway (center of the 500 ft markers; see Figure 2B), using Eq (2).

$$\text{Difference} = \sqrt{(\Delta\,\text{Latitude})^2 + (\Delta\,\text{Longitude})^2} \qquad (2)$$

NOTE: Values below 1° are considered normal5,15. Larger values indicate touchdown points farther from the landing zone.

- Calculate the means across all participants for each performance outcome variable and each task condition. Report these values.

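The flight performance calculations above, including the truncation of each trial where groundspeed settles at 0, can be sketched in Python as follows. This is a minimal illustration, not the study's processing code: GroundSpeed and AircraftOnGround are the simulator parameters named in this protocol, whereas the Time, VerticalSpeed, Latitude, and Longitude keys, the row-of-dicts format, and all function names are assumptions. Reading the exported .csv with `csv.DictReader` and converting the values to numbers would produce rows in this form.

```python
import math
from typing import Dict, List

# Hypothetical sample format: one dict per row of the simulator .csv export.
# "GroundSpeed" (knots) and "AircraftOnGround" (0/1) are named in the protocol;
# "Time" (s), "VerticalSpeed" (fpm), "Latitude" and "Longitude" (degrees) are
# assumed column names that may differ in the real export.
Row = Dict[str, float]


def success_rate(landed_safely: List[bool]) -> float:
    """Eq (1): percentage of trials that ended in a successful landing."""
    return 100.0 * sum(landed_safely) / len(landed_safely)


def touchdown_index(rows: List[Row]) -> int:
    """Index of the first 0 -> 1 change in AircraftOnGround (initial touchdown)."""
    for i in range(1, len(rows)):
        if rows[i - 1]["AircraftOnGround"] == 0 and rows[i]["AircraftOnGround"] == 1:
            return i
    raise ValueError("no touchdown found in this trial")


def truncate_at_stop(rows: List[Row], stop_knots: float = 0.0) -> List[Row]:
    """Keep samples up to and including the first post-touchdown stop."""
    for i in range(touchdown_index(rows), len(rows)):
        if rows[i]["GroundSpeed"] <= stop_knots:
            return rows[: i + 1]
    return rows  # the aircraft never came to a full stop


def completion_time(rows: List[Row]) -> float:
    """Seconds from the first recorded sample to GroundSpeed reaching 0 knots."""
    trimmed = truncate_at_stop(rows)
    return trimmed[-1]["Time"] - trimmed[0]["Time"]


def landing_hardness(rows: List[Row]) -> float:
    """Vertical speed (fpm) at initial touchdown; -700 to 0 fpm is considered safe."""
    return rows[touchdown_index(rows)]["VerticalSpeed"]


def landing_error(rows: List[Row], ref_lat: float, ref_lon: float) -> float:
    """Eq (2): difference in degrees between touchdown and the runway reference point."""
    touchdown = rows[touchdown_index(rows)]
    return math.hypot(touchdown["Latitude"] - ref_lat, touchdown["Longitude"] - ref_lon)


# Toy trial with made-up values (reference point placed at 0, 0 for simplicity).
example_trial: List[Row] = [
    {"Time": 0.0, "AircraftOnGround": 0, "GroundSpeed": 65.0, "VerticalSpeed": -400.0,
     "Latitude": 0.0, "Longitude": 0.0},
    {"Time": 0.5, "AircraftOnGround": 1, "GroundSpeed": 60.0, "VerticalSpeed": -300.0,
     "Latitude": 0.0003, "Longitude": 0.0004},
    {"Time": 9.5, "AircraftOnGround": 1, "GroundSpeed": 0.0, "VerticalSpeed": 0.0,
     "Latitude": 0.0020, "Longitude": 0.0020},
    {"Time": 10.0, "AircraftOnGround": 1, "GroundSpeed": 0.0, "VerticalSpeed": 0.0,
     "Latitude": 0.0020, "Longitude": 0.0020},
]
print(completion_time(example_trial))           # 9.5
print(landing_hardness(example_trial))          # -300.0
print(success_rate([True, True, False, True]))  # 75.0
```

Because the simulator terminates crashed trials automatically, the success flags passed to `success_rate` would come from that outcome (and the posttrial verification) rather than being recomputed from the samples.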

- Situation awareness data

- For each trial, calculate the SA score based on the self-reported SART scores across the 10 dimensions of SA17.

- Use the SART questionnaire17 to determine the participants' subjective responses regarding the overall task difficulty, as well as their impression of how much attentional resources they had available and spent during task performance.

- Using a 7-point Likert scale, ask the participants to rate their perceived experience on probing questions, including the complexity of the situation, division of attention, spare mental capacity, and information quantity and quality.

- Combine these scales into larger dimensions of attentional demands (Demand), attentional supply (Supply), and situation understanding (Understanding).

- Use these ratings to calculate a measure of SA based on Eq (3):

SA = Understanding - (Demand-Supply) (3)

NOTE: High scores on the scales that are combined into the Understanding dimension suggest that the participant has a good understanding of the task at hand. Similarly, high Supply scores suggest that the participant has substantial attentional resources to devote to a given task. In contrast, a high Demand score suggests that the task requires substantial attentional resources to complete. These scores are best interpreted when compared across conditions (e.g., easy vs. difficult conditions) rather than as stand-alone measures.

- Once data collection is completed, calculate the means across all participants for each task condition (i.e., basic, emergency). Report these values.

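The SART scoring above can be sketched in Python as follows. The grouping of the 10 dimensions into Demand (instability, complexity, and variability of the situation), Supply (arousal, concentration of attention, division of attention, and spare mental capacity), and Understanding (information quantity, information quality, and familiarity with the situation) follows the usual description of SART17; the dictionary keys and function names are hypothetical labels for the questionnaire items.

```python
from statistics import mean
from typing import Dict, List

# Usual SART grouping of the 10 dimensions; the key names are hypothetical labels
# for the questionnaire items, not fields exported by any particular survey tool.
DEMAND = ("instability", "complexity", "variability")
SUPPLY = ("arousal", "concentration", "division_of_attention", "spare_capacity")
UNDERSTANDING = ("information_quantity", "information_quality", "familiarity")


def sart_sa(ratings: Dict[str, int]) -> int:
    """Eq (3): SA = Understanding - (Demand - Supply), from 1-7 Likert ratings."""
    for item in DEMAND + SUPPLY + UNDERSTANDING:
        if not 1 <= ratings[item] <= 7:
            raise ValueError(f"{item} must be rated on the 1-7 scale")
    demand = sum(ratings[k] for k in DEMAND)
    supply = sum(ratings[k] for k in SUPPLY)
    understanding = sum(ratings[k] for k in UNDERSTANDING)
    return understanding - (demand - supply)


def condition_means(scores: Dict[str, List[float]]) -> Dict[str, float]:
    """Mean SA across participants for each task condition (e.g., basic, emergency)."""
    return {condition: mean(values) for condition, values in scores.items()}


# Toy example: every item rated at the scale midpoint.
neutral = {item: 4 for item in DEMAND + SUPPLY + UNDERSTANDING}
print(sart_sa(neutral))  # 12 - (12 - 16) = 16
```

As the note above cautions, the resulting scores are most informative when compared between the basic and emergency conditions rather than read as absolute values.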

- Eye tracking data

- Use the eye tracking batch script (see Table of Materials) to manually define the AOIs used for gaze mapping. The script opens a new window for key frame selection. Scroll through the video and choose a frame that clearly shows all the key AOIs to be analyzed (Figure 3).

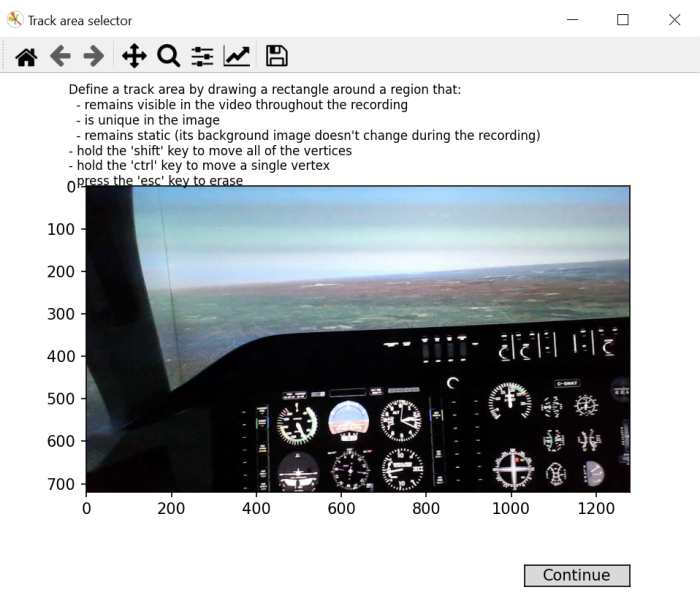

- Following the onscreen instructions, draw a rectangle over a region of the frame that is unique and remains visible and stable throughout the whole video.

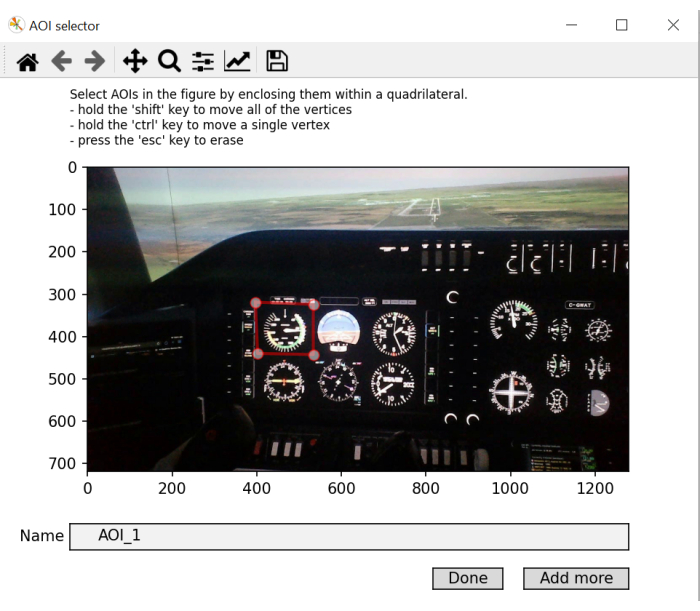

NOTE: The purpose of this step is to generate an "in-screen" coordinate frame that can be used across the whole recording, because head movements change the location of objects in the video over the course of the recording.

- Draw a rectangle for each AOI in the picture, one at a time, and name each one accordingly. Click Add more to add a new AOI and press Done after the last one. If the gaze coordinates during a given fixation land within an object's space as defined in the "in-screen" coordinate frame, that fixation is labeled with the respective AOI label.

NOTE: The purpose of this step is to generate a library of object coordinates that are then used as references when comparing gaze coordinates to in-screen coordinates.

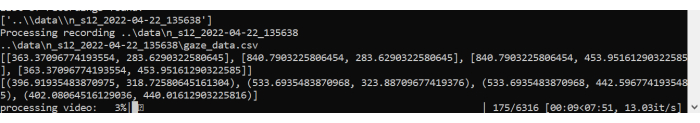

There are typically 10 AOIs, but the number depends on how the flight simulator and its instrument panel are configured. In line with previous work5,18, the current study uses the following AOIs: Airspeed, Attitude, Altimeter, Turn Coordinator, Heading, Vertical Speed, Power, Front Window, Left Window, and Right Window (see Figure 1).

- Let the script process the AOIs and generate fixation data. It generates a plot showing the saccades and fixations over the video (Figure 6).

- Two new files will be created: fixations.csv and aoi_parameters.yaml. The batch processor will complete the postprocessing of gaze data for each trial and each participant.
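The AOI labeling rule in the steps above (a fixation takes an AOI's label when its gaze coordinates fall inside that AOI's rectangle in the "in-screen" reference frame) can be sketched in Python as follows. This is a hypothetical illustration, not the AdHawk batch script: it assumes gaze coordinates have already been mapped into the reference frame, that AOIs are axis-aligned rectangles, and that overlapping AOIs are resolved by taking the first match. The class, function names, and coordinates are illustrative only.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class AOI:
    """Axis-aligned AOI rectangle in "in-screen" reference-frame pixel coordinates."""
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        # Edges are treated as inside the AOI.
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def label_fixation(x: float, y: float, aois: Sequence[AOI]) -> Optional[str]:
    """Return the first AOI whose rectangle contains the fixation, else None."""
    for aoi in aois:
        if aoi.contains(x, y):
            return aoi.name
    return None


def label_fixations(points: Sequence[Tuple[float, float]],
                    aois: Sequence[AOI]) -> List[Optional[str]]:
    """Label every fixation; None marks fixations outside all AOIs."""
    return [label_fixation(x, y, aois) for x, y in points]


# Toy AOI library with made-up coordinates.
aois = [AOI("Airspeed", 100, 400, 180, 480), AOI("Attitude", 200, 400, 280, 480)]
print(label_fixations([(150, 450), (240, 420), (190, 450)], aois))
# ['Airspeed', 'Attitude', None]
```

Fixations labeled None fall outside every AOI; whether they are dropped or kept as an "other" category is an analysis choice that affects the entropy values.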

NOTE: The main eye tracking measures of interest are listed in Table 2 and are calculated for each AOI for each trial.

- For each trial, calculate the traditional gaze metrics4,5 for each AOI from the data in the fixations.csv file.

NOTE: Here, we focus on dwell time (%), which is calculated by dividing the sum of fixation durations on a particular AOI by the sum of all fixation durations and multiplying the quotient by 100 to obtain the percentage of time spent in that AOI. Dwell times have no inherent negative/positive interpretation; they indicate where attention is predominantly allocated. Longer average fixation durations are indicative of increased processing demands.

- For each trial, calculate the blink rate using Eq (4):

Blink rate = Total blinks/completion time (4)

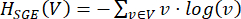

NOTE: Previous work has shown that blink rate is inversely related to cognitive load2,6,13,19,20.

- For each trial, calculate the SGE using Eq (5)21:

$$\text{SGE} = -\sum_{i=1}^{V} v_i \log_2 v_i \qquad (5)$$

where $v_i$ is the probability of viewing the ith AOI and $V$ is the number of AOIs.

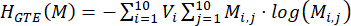

NOTE: Higher SGE values are associated with larger fixation dispersion, whereas lower values indicate a more focalized allocation of fixations22.

- For each trial, calculate the GTE using Eq (6)23:

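$$\text{GTE} = -\sum_{i=1}^{V} v_i \sum_{j=1}^{V} m_{ij} \log_2 m_{ij} \qquad (6)$$

where $v_i$ is the probability of viewing the ith AOI, $m_{ij}$ is the probability of viewing the jth AOI given that the ith AOI was viewed previously, and $V$ is the number of AOIs.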

NOTE: Higher GTE values are associated with more unpredictable, complex visual scan paths, whereas lower GTE values indicate more predictable, routine visual scan paths.

- Calculate the means across all participants for each eye tracking output variable (and AOI when indicated) and each task condition. Report these values.
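The per-trial gaze metrics above (dwell time, average fixation duration, blink rate, SGE, and GTE) can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the batch script's output format: each fixation is represented as a hypothetical (AOI label, duration in ms) tuple in temporal order, and the function names are illustrative. Two estimation choices are also assumptions: the AOI viewing probabilities for SGE are estimated from the proportion of fixations on each AOI (duration-weighted proportions are an alternative), and for GTE every consecutive pair of fixations counts as a transition, including refixations within the same AOI, with each source AOI weighted by its share of all transitions. Other studies make different choices (e.g., merging consecutive fixations on the same AOI), which changes the resulting values.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# Hypothetical in-memory form of one trial's fixations: (AOI label, duration in ms),
# in temporal order. The real fixations.csv columns may be named differently.
Fixation = Tuple[str, float]


def dwell_time_percent(fixations: List[Fixation]) -> Dict[str, float]:
    """Sum of fixation durations per AOI as a percentage of all fixation time."""
    total = sum(duration for _, duration in fixations)
    per_aoi: Dict[str, float] = defaultdict(float)
    for aoi, duration in fixations:
        per_aoi[aoi] += duration
    return {aoi: 100.0 * d / total for aoi, d in per_aoi.items()}


def average_fixation_duration(fixations: List[Fixation]) -> Dict[str, float]:
    """Mean duration (ms) of the fixations that landed on each AOI."""
    durations: Dict[str, List[float]] = defaultdict(list)
    for aoi, duration in fixations:
        durations[aoi].append(duration)
    return {aoi: sum(d) / len(d) for aoi, d in durations.items()}


def blink_rate(total_blinks: int, completion_time_s: float) -> float:
    """Eq (4): blinks per second over the trial."""
    return total_blinks / completion_time_s


def stationary_gaze_entropy(fixations: List[Fixation]) -> float:
    """Eq (5): Shannon entropy (bits) of the proportion of fixations on each AOI."""
    counts = Counter(aoi for aoi, _ in fixations)
    n = sum(counts.values())
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def gaze_transition_entropy(fixations: List[Fixation]) -> float:
    """Eq (6): entropy (bits) of AOI-to-AOI transitions, weighted by source AOI."""
    sequence = [aoi for aoi, _ in fixations]
    transitions: Dict[str, Counter] = defaultdict(Counter)
    for src, dst in zip(sequence, sequence[1:]):
        transitions[src][dst] += 1
    n_transitions = len(sequence) - 1
    entropy = 0.0
    for row in transitions.values():
        row_total = sum(row.values())
        v_i = row_total / n_transitions  # share of transitions leaving this AOI
        h_row = -sum((c / row_total) * math.log2(c / row_total) for c in row.values())
        entropy += v_i * h_row
    return entropy


# Toy trial with made-up fixations.
trial = [("Front", 300.0), ("Airspeed", 100.0), ("Front", 500.0), ("Altimeter", 100.0)]
print(dwell_time_percent(trial))       # {'Front': 80.0, 'Airspeed': 10.0, 'Altimeter': 10.0}
print(stationary_gaze_entropy(trial))  # 1.5
```

A strictly alternating scan between two AOIs gives a GTE of 0 bits, which matches the note above that lower GTE values reflect more predictable, routine scan paths.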

| Term | Definition |
| --- | --- |
| Success (%) | Percentage of successful landing trials |
| Completion time (s) | Duration from the start of the landing scenario to the plane coming to a complete stop on the runway |
| Landing hardness (fpm) | Rate of descent at the point of touchdown |
| Landing error (°) | Difference between the center of the plane and the center of the 500 ft runway marker at the point of touchdown |

Table 1: Simulator performance outcome variables. Aircraft performance-dependent variables and their definitions.

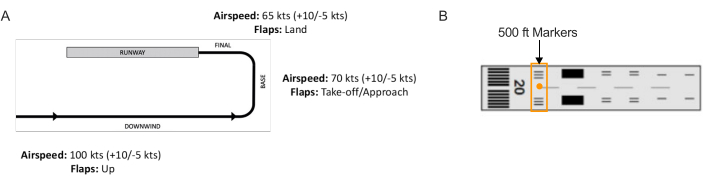

Figure 2: Landing scenario flight path. Schematic of (A) the landing circuit completed in all trials and (B) the runway with the 500 ft markers that were used as the reference point for the landing zone (i.e., center orange circle).

Figure 3: Area of Interest mapping. An illustration of the batch script demonstrating a window for frame selection. The selection of an optimal frame involves choosing a video frame that includes most or all areas of interest to be mapped.

Figure 4: Generating Area of Interest mapping “in-screen” coordinates. An illustration of the batch script demonstrating a window for “in-screen” coordinates selection. This step involves the selection of a square/rectangular region that remains visible throughout the recording, is unique to the image, and remains static.

Figure 5: Identifying Area of Interest to be mapped. An illustration of the batch script window that allows for the selection and labelling of areas of interest. Abbreviation: AOIs = areas of interest.

Figure 6: Batch script processing. An illustration of the batch script processing the video and gaze mapping the fixations made throughout the trial.

| Term | Definition |
| --- | --- |
| Dwell time (%) | Percentage of the sum of all fixation durations accumulated over one AOI relative to the sum of fixation durations accumulated over all AOIs |
| Average fixation duration (ms) | Average duration of a fixation over one AOI from entry to exit |
| Blink rate (blinks/s) | Number of blinks per second |
| SGE (bits) | Fixation dispersion |
| GTE (bits) | Scanning sequence complexity |
| Number of Bouts | Number of cognitive tunneling events (>10 s) |
| Total Bout Time (s) | Total time of cognitive tunneling events |

Table 2: Eye tracking outcome variables. Gaze behavior-dependent variables and their definitions.


Results

The impact of task demands on flight performance

The data were analyzed based on successful landing trials across basic and emergency conditions. All measures were subjected to a paired-samples t-test (within-subject factor: task condition [basic, emergency]). All t-tests were performed with an alpha level of 0.05. Four participants crashed during the emergency scenario trial and were not included in the main analyses because the sparse data does not allow meaningful conc...
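The within-subject comparison described above can be illustrated with a paired-samples t statistic computed from each participant's difference between conditions. The sketch below uses only the Python standard library; the function name and toy data are hypothetical and do not reproduce the study's results. In practice, `scipy.stats.ttest_rel(emergency, basic)` returns the same statistic together with a two-sided p-value, which would then be compared against the alpha level of 0.05.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_t(condition_a: Sequence[float], condition_b: Sequence[float]) -> Tuple[float, int]:
    """Paired-samples t statistic and degrees of freedom for within-subject data."""
    if len(condition_a) != len(condition_b) or len(condition_a) < 2:
        raise ValueError("need at least two participants with both conditions")
    diffs = [a - b for a, b in zip(condition_a, condition_b)]
    se = stdev(diffs) / math.sqrt(len(diffs))  # stdev uses the n - 1 denominator
    return mean(diffs) / se, len(diffs) - 1


# Toy data: one value per participant in each condition (made up).
basic = [1.0, 2.0, 3.0, 4.0]
emergency = [2.0, 4.0, 5.0, 7.0]
t, df = paired_t(emergency, basic)
print(round(t, 3), df)  # 4.899 3
```

Pairing by participant removes between-pilot differences from the error term, which is why a within-subject test fits a design in which every pilot flies both conditions.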


Discussion

The eye tracking method described here enables the assessment of information processing in a flight simulator environment via a wearable eye tracker. Assessing the spatial and temporal characteristics of gaze behavior provides insight into human information processing, which has been studied extensively using highly controlled laboratory paradigms4,7,28. Harnessing recent advances in technology allows the generalization of eye t...


Disclosures

No competing financial interests exist.

Acknowledgements

This work is supported in part by the Canadian Graduate Scholarship (CGS) from the Natural Sciences and Engineering Research Council (NSERC) of Canada, and the Exploration Grant (00753) from the New Frontiers in Research Fund. Any opinions, findings, conclusions, or recommendations expressed in this material are of the author(s) and do not necessarily reflect those of the sponsors.


Materials

| Name | Company | Catalog Number | Comments |
| --- | --- | --- | --- |
| flight simulator | ALSIM | AL-250 | fixed fully immersive flight simulation training device |
| laptop | Hp | Lenovo | eye tracking data collection laptop; requirements: Windows 10 and Python 3.0 |
| portable eye-tracker | AdHawk | MindLink | eye tracking glasses (250 Hz, <2° gaze error, front-facing camera); eye tracking batch script is made available with AdHawk device purchase |

References

- de Brouwer, A. J., Flanagan, J. R., Spering, M. Functional use of eye movements for an acting system. Trends Cogn Sci. 25 (3), 252-263 (2021).

- Ayala, N., Kearns, S., Cao, S., Irving, E., Niechwiej-Szwedo, E. Investigating the role of flight phase and task difficulty on low-time pilot performance, gaze dynamics and subjective situation awareness during simulated flight. J Eye Mov Res. 17 (1), (2024).

- Land, M. F., Hayhoe, M. In what ways do eye movements contribute to everyday activities. Vision Res. 41 (25-26), 3559-3565 (2001).

- Ayala, N., Zafar, A., Niechwiej-Szwedo, E. Gaze behavior: a window into distinct cognitive processes revealed by the Tower of London test. Vision Res. 199, 108072 (2022).

- Ayala, N. The effects of task difficulty on gaze behavior during landing with visual flight rules in low-time pilots. J Eye Mov Res. 16, 10 (2023).

- Glaholt, M. G. Eye tracking in the cockpit: a review of the relationships between eye movements and the aviator's cognitive state. (2014).

- Hodgson, T. L., Bajwa, A., Owen, A. M., Kennard, C. The strategic control of gaze direction in the Tower-of-London task. J Cognitive Neurosci. 12 (5), 894-907 (2000).

- van De Merwe, K., Van Dijk, H., Zon, R. Eye movements as an indicator of situation awareness in a flight simulator experiment. Int J Aviat Psychol. 22 (1), 78-95 (2012).

- Kok, E. M., Jarodzka, H. Before your very eyes: The value and limitations of eye tracking in medical education. Med Educ. 51 (1), 114-122 (2017).

- Di Stasi, L. L., et al. Gaze entropy reflects surgical task load. Surg Endosc. 30, 5034-5043 (2016).

- Laubrock, J., Krutz, A., Nübel, J., Spethmann, S. Gaze patterns reflect and predict expertise in dynamic echocardiographic imaging. J Med Imag. 10 (S1), S11906-S11906 (2023).

- Brams, S., et al. Does effective gaze behavior lead to enhanced performance in a complex error-detection cockpit task. PLoS One. 13 (11), e0207439 (2018).

- Peißl, S., Wickens, C. D., Baruah, R. Eye-tracking measures in aviation: A selective literature review. Int J Aero Psych. 28 (3-4), 98-112 (2018).

- Ziv, G. Gaze behavior and visual attention: A review of eye tracking studies in aviation. Int J Aviat Psychol. 26 (3-4), 75-104 (2016).

- Ke, L., et al. Evaluating flight performance and eye movement patterns using virtual reality flight simulator. J Vis Exp. (195), e65170 (2023).

- Krejtz, K., et al. Gaze transition entropy. ACM Transactions on Applied Perception. 13 (1), 1-20 (2015).

- Taylor, R. M., Selcon, S. J. Cognitive quality and situational awareness with advanced aircraft attitude displays. Proceedings of the Human Factors Society Annual Meeting. 34 (1), 26-30 (1990).

- Ayala, N., et al. Does fiducial marker visibility impact task performance and information processing in novice and low-time pilots. Computers & Graphics. 199, 103889 (2024).

- Recarte, M. Á, Pérez, E., Conchillo, Á, Nunes, L. M. Mental workload and visual impairment: Differences between pupil, blink, and subjective rating. Spanish J Psych. 11 (2), 374-385 (2008).

- Zheng, B., et al. Workload assessment of surgeons: correlation between NASA TLX and blinks. Surg Endosc. 26, 2746-2750 (2012).

- Shannon, C. E. A mathematical theory of communication. The Bell System Technical Journal. 27 (3), 379-423 (1948).

- Shiferaw, B., Downey, L., Crewther, D. A review of gaze entropy as a measure of visual scanning efficiency. Neurosci Biobehav R. 96, 353-366 (2019).

- Ciuperca, G., Girardin, V. Estimation of the entropy rate of a countable Markov chain. Commun Stat-Theory and Methods. 36 (14), 2543-2557 (2007).

- Federal Aviation Administration. Airplane flying handbook. FAA-H-8083-3C. Federal Aviation Administration, Washington, DC (2021).

- Brown, D. L., Vitense, H. S., Wetzel, P. A., Anderson, G. M. Instrument scan strategies of F-117A Pilots. Aviat, Space, Envir Med. 73 (10), 1007-1013 (2002).

- Lu, T., Lou, Z., Shao, F., Li, Y., You, X. Attention and entropy in simulated flight with varying cognitive loads. Aerosp Medicine Hum Perf. 91 (6), 489-495 (2020).

- Dehais, F., Peysakhovich, V., Scannella, S., Fongue, J., Gateau, T. "Automation surprise" in aviation: Real-time solutions. Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems. 2525-2534 (2015).

- Kowler, E. Eye movements: The past 25 years. J Vis Res. 51 (13), 1457-1483 (2011).

- Zafar, A., et al. Investigation of camera-free eye-tracking glasses compared to a video-based system. Sensors. 23 (18), 7753 (2023).

- Leube, A., Rifai, K. Sampling rate influences saccade detection in mobile eye tracking of a reading task. J Eye Mov Res. 10 (3), (2017).

- Diaz-Piedra, C., et al. The effects of flight complexity on gaze entropy: An experimental study with fighter pilots. Appl Ergon. 77, 92-99 (2019).

- Shiferaw, B. A., et al. Stationary gaze entropy predicts lane departure events in sleep-deprived drivers. Sci Rep. 8 (1), 1-10 (2018).

- Parker, A. J., Kirkby, J. A., Slattery, T. J. Undersweep fixations during reading in adults and children. J Exp Child Psychol. 192, 104788 (2020).

- Ayala, N., Kearns, S., Irving, E., Cao, S., Niechwiej-Szwedo, E. The effects of a dual task on gaze behavior examined during a simulated flight in low-time pilots. Front Psychol. 15, 1439401 (2024).

- Ayala, N., Heath, M. Executive dysfunction after a sport-related concussion is independent of task-based symptom burden. J Neurotraum. 37 (23), 2558-2568 (2020).

- Huddy, V. C., et al. Gaze strategies during planning in first-episode psychosis. J Abnorm Psychol. 116 (3), 589 (2007).

- Irving, E. L., Steinbach, M. J., Lillakas, L., Babu, R. J., Hutchings, N. Horizontal saccade dynamics across the human life span. Invest Ophthalmol Vis Sci. 47 (6), 2478-2484 (2006).

- Yep, R., et al. Interleaved pro/anti-saccade behavior across the lifespan. Front Aging Neurosci. 14, 842549 (2022).

- Manoel, E. D. J., Connolly, K. J. Variability and the development of skilled actions. Int J Psychophys. 19 (2), 129-147 (1995).



Copyright © 2025 MyJoVE Corporation. All rights reserved