Method Article

Quantification of Visual Feature Selectivity of the Optokinetic Reflex in Mice

Summary

Here, we describe a standard protocol for quantifying the optokinetic reflex. It combines virtual drum stimulation and video-oculography, and thus allows precise evaluation of the feature selectivity of the behavior and its adaptive plasticity.

Abstract

The optokinetic reflex (OKR) is an essential innate eye movement that is triggered by the global motion of the visual environment and serves to stabilize retinal images. Due to its importance and robustness, the OKR has been used to study visual-motor learning and to evaluate the visual functions of mice with different genetic backgrounds, ages, and drug treatments. Here, we introduce a procedure for evaluating OKR responses of head-fixed mice with high accuracy. Head fixation rules out the contribution of vestibular stimulation to eye movements, making it possible to measure eye movements triggered only by visual motion. The OKR is elicited by a virtual drum system, in which a vertical grating presented on three computer monitors drifts horizontally in an oscillatory manner or unidirectionally at a constant velocity. With this virtual reality system, we can systematically change visual parameters such as spatial frequency, temporal/oscillation frequency, contrast, luminance, and the direction of gratings, and quantify tuning curves of visual feature selectivity. High-speed infrared video-oculography ensures accurate measurement of the trajectory of eye movements. The eyes of individual mice are calibrated so that OKRs can be compared between animals of different ages, genders, and genetic backgrounds. The quantitative power of this technique allows it to detect changes in the OKR when this behavior plastically adapts due to aging, sensory experience, or motor learning, which makes it a valuable addition to the repertoire of tools used to investigate the plasticity of ocular behaviors.

Introduction

In response to visual stimuli in the environment, our eyes move to shift our gaze, stabilize retinal images, track moving targets, or align the foveae of the two eyes with targets located at different distances from the observer, all of which are vital to proper vision [1,2]. Oculomotor behaviors have been widely used as attractive models of sensorimotor integration to understand neural circuits in health and disease, at least partly because of the simplicity of the oculomotor system [3]. Controlled by three pairs of extraocular muscles, the eye rotates in the socket primarily around three corresponding axes: elevation and depression around the transverse axis, adduction and abduction around the vertical axis, and intorsion and extorsion around the anteroposterior axis [1,2]. Such a simple system allows researchers to evaluate the oculomotor behaviors of mice easily and accurately in a lab environment.

One prime oculomotor behavior is the optokinetic reflex (OKR). This involuntary eye movement is triggered by slow drifts or slips of images on the retina and serves to stabilize retinal images as an animal's head or its surroundings move [2,4]. The OKR, as a behavioral paradigm, is interesting to researchers for several reasons. First, it can be stimulated reliably and quantified accurately [5,6]. Second, the procedures for quantifying this behavior are relatively simple and standardized and can be applied to evaluate the visual functions of a large cohort of animals [7]. Third, this innate behavior is highly plastic [5,8,9]. Its amplitude can be potentiated when repetitive retinal slips occur for a long time [5,8,9], or when its working partner, the vestibulo-ocular reflex (VOR), another mechanism of stabilizing retinal images that is triggered by vestibular input [2], is impaired [5]. These experimental paradigms of OKR potentiation empower researchers to unveil the circuit basis underlying oculomotor learning.

Two non-invasive methods have primarily been used to evaluate the OKR in previous studies: (1) video-oculography combined with a physical drum [7,10,11,12,13] or (2) arbitrary determination of head turns combined with a virtual drum [6,14,15,16]. Although their applications have led to fruitful discoveries in understanding the molecular and circuit mechanisms of oculomotor plasticity, each of these two methods has drawbacks that limit its power in quantitatively examining the properties of the OKR. First, physical drums, with printed patterns of black and white stripes or dots, do not allow easy and quick changes of visual patterns, which largely restricts the measurement of the dependence of the OKR on certain visual features, such as spatial frequency, direction, and contrast of moving gratings [8,17]. Instead, tests of the selectivity of the OKR to these visual features can benefit from computerized visual stimulation, in which visual features can be conveniently modified from trial to trial. In this way, researchers can systematically examine the OKR behavior in the multi-dimensional visual parameter space. Moreover, the second method of the OKR assay reports only the thresholds of visual parameters that trigger discernible OKRs, but not the amplitudes of eye or head movements [6,14,15,16]. This lack of quantitative power prevents analyzing the shape of tuning curves and the preferred visual features, or detecting subtle differences between individual mice in normal and pathological conditions. To overcome the above limitations, video-oculography and computerized virtual visual stimulation have been combined to assay the OKR behavior in recent studies [5,17,18,19,20]. However, these previously published studies did not provide enough technical details or step-by-step instructions, and consequently it is still challenging for researchers to establish such an OKR test for their own research.

Here, we present a protocol to precisely quantify the visual feature selectivity of OKR behavior under photopic or scotopic conditions with the combination of video-oculography and computerized virtual visual stimulation. Mice are head-fixed to avoid the eye movement evoked by vestibular stimulation. A high-speed camera is used to record the ocular movements from mice viewing moving gratings with changing visual parameters. The physical size of the eyeballs of individual mice is calibrated to ensure the accuracy of deriving the angle of eye movements [21]. This quantitative method allows comparing OKR behavior between animals of different ages or genetic backgrounds, or monitoring its change caused by pharmacological treatments or visual-motor learning.

Protocol

All experimental procedures performed in this study were approved by the Biological Sciences Local Animal Care Committee, in accordance with guidelines established by the University of Toronto Animal Care Committee and the Canadian Council on Animal Care.

1. Implantation of a head bar on top of the skull

NOTE: To avoid the contribution of VOR behavior to the eye movements, the head of the mouse is immobilized during the OKR test. Therefore, a head bar is surgically implanted on top of the skull.

- Anesthetize a mouse (2-5-month-old female and male C57BL/6) with a mixture of 4% isoflurane (v/v) and O2 in a gas chamber. Transfer the mouse to a customized surgery platform and reduce the concentration of isoflurane to 1.5%-2%. Monitor the depth of anesthesia by checking the toe-pinch response and the respiration rate throughout the surgery.

- Place a heating pad underneath the animal's body to maintain its body temperature. Apply a layer of lubricant eye ointment to both eyes to protect them from drying. Cover the eyes with aluminum foil to protect them from light illumination.

- Subcutaneously inject carprofen at a dose of 20 mg/kg to reduce pain. After wetting the fur with chlorhexidine gluconate skin cleaner, shave the fur on top of the skull. Disinfect the exposed scalp with 70% isopropyl alcohol and chlorhexidine alcohol twice.

- Inject bupivacaine (8 mg/kg) subcutaneously at the site of incision, then remove the scalp (~1 cm2) with scissors to expose the dorsal surface of the skull, including the posterior frontal bone, parietal bone, and interparietal bone.

- Apply several drops of 1% lidocaine and 1:100,000 epinephrine on the exposed skull to reduce local pain and bleeding. Scrape the skull with a Meyhoefer curette to remove the fascia and clean it with phosphate-buffered saline (PBS).

NOTE: The temporalis muscle is separated from the skull to increase the surface area for a head bar to attach.

- Dry the skull by gently blowing compressed air toward the skull surface until the moisture is gone and the bone turns whitish. Apply a thin layer of superglue on the exposed surface of the skull, including the edge of the cut scalp, followed by a layer of acrylic resin.

NOTE: The surface of the skull needs to be free of blood or water before the application of superglue.

- Place a stainless-steel head bar (see Figure 1A) along the midline on top of the skull. Apply more acrylic resin, starting from the edge of the head bar until the base of the head bar is completely embedded in the acrylic resin. Apply acrylic resin two or three times to build up the thickness.

- Wait for about 15 min until the acrylic resin hardens. Subcutaneously inject 1 mL of lactated Ringer's solution. Then, return the mouse to a cage placed on a heating pad until the animal is fully mobile.

- Allow the mouse to recover in the home cage for at least 5 days after surgery. Once the animal is in good shape, fix its head with the head bar in the OKR setup for 15-30 min to familiarize it with the head fixation and the experimental environment. Repeat the familiarization once a day for at least 3 days.

2. Setup of the virtual drum and video-oculography

- Mount three monitors orthogonally to each other to form a square enclosure that covers ~270° of the azimuth and 63° of the elevation in the visual space (Figure 1B left).

- With a discrete graphics card, merge the three monitors into a single display to ensure synchronization across all the monitors.

- Calibrate the luminance of monitors as described below.

- Turn on the computer to which the monitors are connected and wait for 15 min. The warm-up is essential to have stable luminance.

- Systematically change the brightness setting on the monitor from 0 to 100 by steps of 25.

- For each brightness value, measure the luminance of the monitors under various pixel values (0-255, steps of 15) with a luminance meter.

- Fit the relationship between luminance and brightness for pixel value 255 with linear regression and estimate the brightness value that gives rise to 160 cd/m2.

- For each pixel value used in the luminance measurement (step 2.3.3), estimate the luminance for the brightness value derived in step 2.3.4 based on linear regression. Use the power function $\mathrm{lum} = A \cdot \mathrm{pixel}^{\gamma}$ to fit the relationship between the new set of luminance values (under the brightness value derived in 2.3.4) and their corresponding pixel values to derive the gamma factor γ and the coefficient A. These will be used to generate sinusoidal gratings of desired luminance values.

- Set the brightness of all three monitors to the values derived in step 2.3.4 to ensure their luminance values are the same for the same pixel value.
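The gamma fit in the calibration steps above can be sketched as follows. This is a minimal illustration, not the authors' calibration code: it assumes the power law holds without a black-level offset, fits it by linear regression in log-log space, and uses synthetic measurements. The function names `fit_gamma` and `pixel_for_luminance` are hypothetical.

```python
import numpy as np

def fit_gamma(pixel_values, luminances):
    """Fit lum = A * pixel**gamma by linear regression in log-log space."""
    pixel_values = np.asarray(pixel_values, dtype=float)
    luminances = np.asarray(luminances, dtype=float)
    valid = (pixel_values > 0) & (luminances > 0)  # log is undefined at 0
    gamma, log_a = np.polyfit(np.log(pixel_values[valid]),
                              np.log(luminances[valid]), 1)
    return np.exp(log_a), gamma

def pixel_for_luminance(target_lum, a, gamma):
    """Invert the fitted power law to get the pixel value for a target luminance."""
    pixel = (np.asarray(target_lum, dtype=float) / a) ** (1.0 / gamma)
    return np.clip(np.rint(pixel), 0, 255).astype(int)

# Synthetic example (assumed values): gamma 2.2, 160 cd/m2 at pixel value 255,
# sampled at pixel values 0-255 in steps of 15 as in the protocol.
pixels = np.arange(0, 256, 15)
true_gamma = 2.2
true_a = 160.0 / 255.0 ** true_gamma
measured = true_a * pixels ** true_gamma
a_fit, gamma_fit = fit_gamma(pixels, measured)

# Pixel values for one period of a sinusoidal grating
# (assumed mean luminance 40 cd/m2 and 90% contrast).
phase = np.linspace(0.0, 2.0 * np.pi, 9)
grating_lum = 40.0 * (1.0 + 0.9 * np.sin(phase))
grating_pixels = pixel_for_luminance(grating_lum, a_fit, gamma_fit)
```

Fitting in log space weights the dim end of the range more heavily than a direct nonlinear fit would. If the monitor has a measurable black level, a model with an additive offset may fit better.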

- Generate a virtual drum, which is used to stimulate OKR behavior, with the visual stimulation toolkit, as described below.

- Present a vertical sinusoidal grating on the monitors and adjust the period (spacing between stripes) along the azimuth to ensure the projection of the grating on the eye has constant spatial frequency (drum grating; Figure 1B middle and right).

- Ensure the animal head-fixed at the center of the enclosure so that it sees the grating has a constant spatial frequency across the surface of the virtual drum.

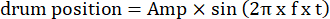

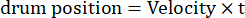

- Modify the parameters of moving grating, such as the oscillatory amplitude, spatial frequency, temporal/oscillation frequency, direction, contrast, etc., in the visual stimulation codes. Use two types of visual motion: (1) the grating drifts clockwise or counterclockwise in an oscillatory manner following a sinusoidal function:

Here, Amp is the amplitude of drum trajectory, f is the oscillation frequency, and t is the time (oscillation amplitude: 5°; grating spatial frequency: 0.04-0.45 cpd; oscillation frequency: 0.1-0.8 Hz, corresponding to a peak velocity of the stimulus of 3.14-25.12 °/s [drum velocity = Amp x 2π x f x cos (2π x f x t); contrast: 80%-100%; mean luminance: 35-45 cd/m2; (2) the grating drifts unidirectionally at a constant velocity:

(Spatial frequency: 0.04-0.64 cpd; temporal frequency: 0.25-1 Hz; drum velocity = temporal frequency/spatial frequency.)
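The stimulus kinematics described above can be sketched numerically. The velocity expression and the relation drum velocity = temporal frequency/spatial frequency come from the text. The position function Amp × sin(2π × f × t) is an assumption here, taken as the integral of that velocity with zero offset. The azimuth mapping is likewise an assumed flat-screen geometry in which a screen point x mm from the monitor center, viewed from distance d, lies at azimuth arctan(x/d). All function names are hypothetical.

```python
import numpy as np

def oscillating_drum(t, amp_deg=5.0, freq_hz=0.4):
    """Drum position (deg) and velocity (deg/s) for sinusoidal motion."""
    phase = 2.0 * np.pi * freq_hz * np.asarray(t, dtype=float)
    position = amp_deg * np.sin(phase)  # assumed form, see lead-in
    velocity = amp_deg * 2.0 * np.pi * freq_hz * np.cos(phase)
    return position, velocity

def peak_velocity(amp_deg, freq_hz):
    """Peak drum velocity (deg/s) of the oscillatory stimulus."""
    return amp_deg * 2.0 * np.pi * freq_hz

def constant_drum_velocity(temporal_freq_hz, spatial_freq_cpd):
    """Drum velocity (deg/s) = temporal frequency / spatial frequency."""
    return temporal_freq_hz / spatial_freq_cpd

def azimuth_of_screen_point(x_mm, viewing_distance_mm):
    """Azimuth (deg) of a point on a flat monitor, seen from the enclosure center."""
    return np.degrees(np.arctan2(x_mm, viewing_distance_mm))

def drum_grating_luminance(azimuth_deg, drum_position_deg, sf_cpd,
                           mean_lum, contrast):
    """Luminance of a grating with constant spatial frequency in visual angle."""
    phase = 2.0 * np.pi * sf_cpd * (np.asarray(azimuth_deg) - drum_position_deg)
    return mean_lum * (1.0 + contrast * np.sin(phase))

# Peak velocities at the two ends of the stated oscillation frequency range.
v_low = peak_velocity(5.0, 0.1)
v_high = peak_velocity(5.0, 0.8)
```

Computing the grating phase from the azimuth rather than from the pixel column is what keeps the spatial frequency constant in visual angle: stripes toward the edge of a flat monitor are drawn wider on the screen.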

- Set up the video-oculography as described below.

- To avoid blocking the animal's visual field, place an infrared (IR) mirror 60° from the midline to form an image of the right eye.

- Place an IR camera on the right side behind the mouse (Figure 1C left) to capture an image of the right eye.

- Mount the high-speed IR camera on a camera arm that allows the camera to rotate by ± 10° around the image of the right eye (Figure 1C right).

- Use a photodiode attached to one of the monitors to provide an electrical signal to synchronize the timing of video-oculography and visual stimulation.

- Place four IR light emitting diodes (LEDs) supported by gooseneck arms around the right eye to provide IR illumination of the eye.

- Place two IR LEDs on the camera to provide corneal reflection (CR) references: one is fixed above the camera (X-CR), while the other is on the left side of the camera (Y-CR; Figure 1D).

- Measure the optical magnification of the video-oculography system with a calibration slide.

NOTE: The reference CRs are used to cancel out the translational eye movements when the eye angle is calculated based on the rotational eye movements.
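One way to use the photodiode signal for synchronization is to detect its rising edges and convert them to video frame indices. The sketch below is only an illustration of that idea. How the photodiode signal is digitized is not described in the protocol, so the sampling rate, the midpoint threshold, the common time base with the camera, and the function names are all assumptions.

```python
import numpy as np

def stimulus_onsets(photodiode, sample_rate_hz, threshold=None):
    """Return the times (s) at which the photodiode signal rises above threshold."""
    signal = np.asarray(photodiode, dtype=float)
    if threshold is None:
        # Assumed default: midpoint between the darkest and brightest reading.
        threshold = 0.5 * (signal.min() + signal.max())
    above = signal > threshold
    rising = np.flatnonzero(~above[:-1] & above[1:]) + 1
    return rising / sample_rate_hz

def onset_frames(onset_times_s, frame_rate_hz):
    """Convert onset times to the nearest video frame index."""
    return np.rint(np.asarray(onset_times_s) * frame_rate_hz).astype(int)

# Synthetic example: a 1 kHz photodiode trace with one bright period.
trace = np.zeros(1000)
trace[300:600] = 1.0
onsets = stimulus_onsets(trace, sample_rate_hz=1000.0)
frames = onset_frames(onsets, frame_rate_hz=200.0)
```

This assumes the photodiode trace and the video share a common start time. If they do not, the offset between the two clocks has to be measured separately.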

- Fix the animal's head at the center of the enclosure formed by the monitors, as described below.

- Fix the animal's head with the head plate to the center of the rig and make it face forward. Adjust the tilt of the head so that the left and right eyes are leveled, and the nasal and temporal corners of the eyes are aligned horizontally (Figure 1E).

- Move the animal's head horizontally by coarse adjustment provided by the head-fixation apparatus and fine adjustment provided by a 2D translational stage, and vertically through the head-fixation apparatus and a post/post holder pair, until the animal's right eye appears in the live video of the camera. Before the calibration and measurement of eye movements, overlay the image of the animal's right eye reflected by the IR (hot) mirror with the pivot point of the camera arm (see details in step 3.4 below).

- Build a customized enclosure around the OKR rig to block room light (Figure 1F).

3. Calibration of eye movements

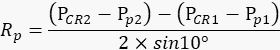

NOTE: Rotational eye movements are calculated based on movements of the pupil and the radius of the orbit of the pupillary movements (Rp, the distance from the center of the pupil to the center of the eyeball). For each individual mouse, this radius is measured experimentally21.

- Fix the animal's head at the center of the enclosure formed by the three monitors, as described in step 2.6.1.

- Turn the camera on and adjust the four LEDs surrounding the right eye to achieve uniform IR illumination.

- Under visual guidance, adjust the position of the right eye until it appears at the center of the video, as described in step 2.6.2.

- Align the virtual image of the right eye with the pivot point of the camera arm, as described below.

- Manually rotate the camera arm to the left extreme end (-10°). Manually move the animal's right eye position on the horizontal plane perpendicular to the optical axis with fine adjustment of the 2D translational stage (Figure 1C, green arrow), until the X-CR is at the horizontal center of the image.

- Manually rotate the camera arm to the other end (+10°). If the X-CR runs away from the center of the image, move the right eye along the optical axis with fine adjustment until the X-CR comes to the center (Figure 1C, blue arrow).

- Repeat steps 3.4.1-3.4.2 a few times until the X-CR stays at the center when the camera arm swings leftward and rightward. If the right eye moves in the middle of one repetition, restart the adjustment process.

- Measure the vertical distance between the Y-CR and X-CR after locking the camera arm at the central position. Turn the Y-CR LED on and record its position on the video, and then switch to the X-CR LED and record its position.

NOTE: The vertical distance between the Y-CR and X-CR will be used to derive the position of the Y-CR during the measurement of eye movements in which only the X-CR LED is turned on. - Measure the radius of pupil rotation Rp, as described below.

- Rotate the camera arm to the left end (-10°) and record the positions of the pupil (Pp1) and X-CR (PCR1) on the video.

- Then, rotate the camera arm to the right end (+10°) and record the positions of the pupil (Pp2) and X-CR (PCR2) on the video. Repeat this step multiple times.

NOTE: The animal's right eye needs to remain stationary during each repetition so that the amount of pupil movements in the movie accurately reflects the degree of swinging the camera arm. - Based on the values recorded above, calculate the radius of pupil rotation Rp (Figure 2A) with the following formula:

NOTE: The distance between the corneal reflection and pupil center in the physical space is calculated based on their distance in the movie:

PCR - Pp = number of pixels in the movie × pixel size of camera chip × magnification
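The calibration arithmetic can be illustrated with a short sketch. It is one plausible geometric formulation, not necessarily the exact formula used by the authors. It assumes that the pupil-to-CR offset seen by the camera equals Rp × sin(angle between the camera axis and the pupil axis), and that the eye points roughly along the central camera axis during calibration. Swinging the camera from -10° to +10° then changes the offset by 2 × Rp × sin(10°). The function names are hypothetical, and the scale factor follows the conversion expression given in the text.

```python
import math

def pixels_to_mm(n_pixels, pixel_size_mm, magnification_factor):
    """Physical distance, following the text: pixels x pixel size x magnification."""
    return n_pixels * pixel_size_mm * magnification_factor

def pupil_rotation_radius(p_cr1, p_p1, p_cr2, p_p2, swing_deg=20.0):
    """Estimate Rp from the pupil-to-CR offsets at the two camera positions.

    Assumption: offset = Rp * sin(angle) and the eye is near the central axis,
    so the change in offset across the full swing is 2 * Rp * sin(swing / 2).
    """
    delta = (p_cr1 - p_p1) - (p_cr2 - p_p2)
    return abs(delta) / (2.0 * math.sin(math.radians(swing_deg / 2.0)))

# Synthetic check: an eye with Rp = 1.0 mm viewed from -10 deg and +10 deg.
true_rp = 1.0
offset_left = true_rp * math.sin(math.radians(-10.0))   # PCR1 - Pp1
offset_right = true_rp * math.sin(math.radians(10.0))   # PCR2 - Pp2
rp_estimate = pupil_rotation_radius(0.0, -offset_left, 0.0, -offset_right)
```

Averaging the estimate over the repeated swings in step 3.6.2 reduces the effect of small eye movements between the two camera positions.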

- Develop the relationship between Rp and pupil diameter, as described below. Rp changes when the pupil dilates or constricts; approximately, its value is inversely related to the pupil size (Figure 2B top).

- Change the luminance of the monitors systematically from 0 to 160 cd/m2 to regulate the pupil size.

- For each luminance value, repeat step 3.6 eight to ten times and record the diameter of the pupil.

- Apply linear regression to the relationship between Rp and pupil diameter based on the values measured above to derive the slope and intercept (Figure 2B bottom).

NOTE: The outliers caused by occasional eye movements are removed before the linear fitting. For repetitive measurements in multiple sessions, the calibration needs to be done only once for one animal, unless its eye grows bigger during the experiment.
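A minimal sketch of the regression between Rp and pupil diameter is given below. The protocol does not state how outliers are identified, so the rule used here (drop points whose residual from a first-pass fit exceeds three residual standard deviations, then refit) is an assumption. The function names and the synthetic data are hypothetical.

```python
import numpy as np

def fit_rp_vs_pupil(pupil_diameter, rp, n_sd=3.0):
    """Linear fit of Rp against pupil diameter with one pass of outlier rejection."""
    x = np.asarray(pupil_diameter, dtype=float)
    y = np.asarray(rp, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)
    residual = y - (slope * x + intercept)
    sd = residual.std()
    # Assumed rule: keep points within n_sd residual standard deviations.
    keep = np.abs(residual) <= n_sd * sd if sd > 0 else np.ones(len(x), dtype=bool)
    slope, intercept = np.polyfit(x[keep], y[keep], 1)
    return slope, intercept, keep

def rp_from_pupil(pupil_diameter, slope, intercept):
    """Predict Rp for a given pupil diameter from the fitted line."""
    return slope * np.asarray(pupil_diameter, dtype=float) + intercept

# Synthetic data (assumed values, mm): Rp falls as the pupil dilates,
# with one measurement corrupted by an eye movement.
diam = np.linspace(0.5, 2.0, 20)
rp_meas = 1.2 - 0.1 * diam
rp_meas[7] += 0.5
slope, intercept, keep = fit_rp_vs_pupil(diam, rp_meas)
```

The fitted slope and intercept are what the eye tracking step needs in order to look up Rp for the pupil size measured in each frame.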

4. Record eye movements of the OKR

- Head-fix a mouse in the rig following steps 3.1-3.4. Skip this step if the recording occurs right after the calibration is done. Lock the camera arm at the central position.

- Set up the monitors and the animal for scotopic OKR as described below. Skip this step for photopic OKR.

- Cover the screen of each monitor with a customized filter, which is made of five layers of 1.2 neutral density (ND) film. Make sure no light leaks out through the gap between the filter and the monitor.

- Turn the room light off. The following steps are done with the aid of IR goggles.

- Apply one drop of pilocarpine solution (2% in saline) to the right eye and wait 15 min. Ensure the drop stays on the eye and is not wiped away by the mouse. If the solution is wiped away by the animal, apply another drop of pilocarpine solution. This shrinks the pupil to a proper size for eye tracking under the scotopic condition.

NOTE: Under the scotopic condition, the pupil dilates substantially so that its edge is partially hidden behind the eyelid. This affects the precision of estimating the pupil center by video-oculography. Pharmacologically shrinking the pupil of the right eye diminishes its visual input, and thus the visual stimuli are presented to the left eye.

- Rinse the right eye with saline to thoroughly wash away the pilocarpine solution. Pull down the curtain to completely seal the enclosure, which prevents stray light from interfering with the scotopic vision.

- Give the animal 5 min to fully adapt to the scotopic environment before starting the OKR test.
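As a rough check of the light level reached by the filter stack, the attenuation can be worked out as below. This assumes that the optical densities of stacked ND films add (inter-reflections ignored), that each layer has its nominal density of 1.2, and that the unfiltered screen has the 35-45 cd/m2 mean luminance quoted for the grating. None of these are measured values.

```latex
\begin{align*}
\mathrm{OD}_{\text{total}} &= 5 \times 1.2 = 6.0 \\
T &= 10^{-\mathrm{OD}_{\text{total}}} = 10^{-6} \\
L_{\text{filtered}} &\approx T \cdot L_{\text{screen}}
  = 10^{-6} \times (35\text{--}45)\ \mathrm{cd/m^2}
  = (3.5\text{--}4.5) \times 10^{-5}\ \mathrm{cd/m^2}
\end{align*}
```

The actual luminance behind the filter is best confirmed by measurement, since film density varies with wavelength and from batch to batch.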

- Run the visual stimulation software and the eye tracking software. For photopic OKR measurement, ensure the drum grating oscillates horizontally with a sinusoidal trajectory; for scotopic OKR measurement, ensure the drum grating drifts at a constant velocity from left to right, which is the temporo-nasal direction in reference to the left eye.

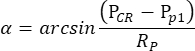

NOTE: When the pupil of the right eye, but not of the left eye, is shrunk by pilocarpine under the scotopic condition, the OKR elicited by oscillatory drum stimulation is highly asymmetric. Thus, for scotopic OKR measurement, the left eye is stimulated while the movement of the right eye is monitored. - The eye tracking software automatically measures the pupil size, CR position, and pupil position for each frame, and computes the angle of eye position based on the following formula (Figure 2C):

Here, PCR is the CR position, Pp is the pupil position, and Rp is the radius of pupil rotation. The distance between corneal reflection and pupil center in the physical space is calculated based on their distance in the movie:

PCR - Pp = number of pixels in the movie x pixel size of camera chip x magnification

Rp of the corresponding pupil size is derived based on the linear regression model in step 3.7.3 (Figure 2B bottom).
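The per-frame computation can be sketched as follows. This assumes the commonly used geometric relation eye angle = arcsin((PCR - Pp)/Rp), with Rp looked up from the linear model of step 3.7.3. The formula actually implemented in the tracking software may differ, for example in sign convention. The function name and the example numbers are hypothetical.

```python
import numpy as np

def eye_angle_deg(p_cr_mm, p_p_mm, pupil_diameter_mm, slope, intercept):
    """Eye angle (deg) from CR and pupil positions, assuming angle = arcsin(d / Rp)."""
    rp = slope * np.asarray(pupil_diameter_mm, dtype=float) + intercept
    ratio = (np.asarray(p_cr_mm, dtype=float) - np.asarray(p_p_mm, dtype=float)) / rp
    # Clip to the valid arcsin domain to guard against measurement noise.
    return np.degrees(np.arcsin(np.clip(ratio, -1.0, 1.0)))

# Example with assumed calibration values: Rp = 1.2 - 0.1 * diameter (mm).
angles = eye_angle_deg(p_cr_mm=np.array([0.0, 0.0, 0.0]),
                       p_p_mm=np.array([0.0, -0.55, 0.55]),
                       pupil_diameter_mm=np.array([1.0, 1.0, 1.0]),
                       slope=-0.1, intercept=1.2)
```

With these numbers Rp is 1.1 mm, so an offset of 0.55 mm corresponds to an eye angle of 30°.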

5. Analysis of eye movements of the OKR with the eye analysis software

- Process the eye traces using a median filter (filter window = 0.05 s) to remove high frequency noise (Figure 3A middle).

- Remove the saccades or nystagmus as described below.

- Estimate the eye velocity by calculating the first order derivative of eye movements (Figure 3A bottom). Identify the saccades or nystagmus by applying a velocity threshold of 50 °/s (Figure 3A bottom).

- Replace the saccades or nystagmus by extrapolating the eye positions during these fast eye movements from the segment before the saccades or nystagmus based on linear regression (Figure 3B).

- Compute the amplitude of OKR eye movements by fast Fourier transform (Goertzel algorithm) if the drum grating oscillates (Figure 3C), or compute the average velocity of eye movements during the visual stimulation if the drum grating moves at a constant velocity in one direction (Figure 3B bottom).

NOTE: The amplitude of oscillatory eye movements derived from Fourier transform is similar to the amplitude derived from the fitting of the eye trajectory with a sinusoidal function (Figure 3D).

- Calculate the OKR gain. For oscillatory drum motion, OKR gain is defined as the ratio of the amplitude of eye movements to the amplitude of drum movements (Figure 3C right). For unidirectional drum motion, OKR gain is defined as the ratio of eye velocity to drum grating velocity (Figure 3B bottom).
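The analysis steps in this section can be sketched in Python with NumPy. This is an illustrative re-implementation under stated assumptions, not the code in the supplementary files. The filter window (0.05 s), the velocity threshold (50 °/s), and the Goertzel algorithm come from the text. The 0.1 s fitting window, the shifting of later samples to keep the trace continuous after each fast phase, and all function names are assumptions.

```python
import numpy as np

def median_filter(trace, fs_hz, window_s=0.05):
    """Sliding median over about window_s seconds (window forced to odd length)."""
    k = max(int(round(window_s * fs_hz)), 1) | 1
    padded = np.pad(np.asarray(trace, dtype=float), k // 2, mode="edge")
    return np.median(np.lib.stride_tricks.sliding_window_view(padded, k), axis=-1)

def remove_fast_phases(trace, fs_hz, vel_threshold=50.0, fit_s=0.1):
    """Replace supra-threshold segments by linear extrapolation of preceding data.

    Assumption (not stated in the protocol): samples after each fast phase are
    shifted so that the trace stays continuous.
    """
    cleaned = np.asarray(trace, dtype=float).copy()
    velocity = np.gradient(cleaned) * fs_hz        # first derivative, deg/s
    fast = np.abs(velocity) > vel_threshold
    n_fit = max(int(round(fit_s * fs_hz)), 2)
    i, n = 0, len(cleaned)
    while i < n:
        if not fast[i]:
            i += 1
            continue
        j = i
        while j < n and fast[j]:
            j += 1                                 # fast segment is [i, j)
        start = max(i - n_fit, 0)
        if i - start >= 2:
            slope, intercept = np.polyfit(np.arange(start, i), cleaned[start:i], 1)
        else:                                      # no history: hold the value
            slope, intercept = 0.0, cleaned[max(i - 1, 0)]
        cleaned[i:j] = slope * np.arange(i, j) + intercept
        if j < n:
            cleaned[j:] += (slope * j + intercept) - cleaned[j]
        i = j
    return cleaned

def goertzel_amplitude(trace, fs_hz, freq_hz):
    """Amplitude of the freq_hz component of the trace (Goertzel recursion)."""
    x = np.asarray(trace, dtype=float)
    x = x - x.mean()
    coeff = 2.0 * np.cos(2.0 * np.pi * freq_hz / fs_hz)
    s_prev, s_prev2 = 0.0, 0.0
    for sample in x:
        s_prev, s_prev2 = sample + coeff * s_prev - s_prev2, s_prev
    power = s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2
    return 2.0 * np.sqrt(max(power, 0.0)) / len(x)

def mean_velocity(trace, fs_hz):
    """Average eye velocity (deg/s) as the slope of a line fitted to the trace."""
    t = np.arange(len(trace)) / fs_hz
    return np.polyfit(t, np.asarray(trace, dtype=float), 1)[0]

def okr_gain(eye_value, drum_value):
    """Ratio of eye amplitude (or velocity) to drum amplitude (or velocity)."""
    return eye_value / drum_value

# Synthetic example: 0.4 Hz eye oscillation of 3.5 deg with one 4 deg saccade,
# sampled at an assumed 200 frames per second; drum amplitude 5 deg.
fs = 200.0
t = np.arange(2000) / fs
eye = 3.5 * np.sin(2.0 * np.pi * 0.4 * t)
eye[1000:] += 4.0
slow = remove_fast_phases(median_filter(eye, fs), fs)
gain = okr_gain(goertzel_amplitude(slow, fs, 0.4), 5.0)
```

The amplitude estimate is most accurate when the analyzed segment spans a whole number of stimulus cycles, as in the example (4 cycles in 10 s).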

Results

With the procedure detailed above, we evaluated the dependence of the OKR on several visual features. The example traces shown here were derived using the analysis codes provided in Supplementary Coding File 1, and the raw file of the example traces can be found in Supplementary Coding File 2. When the drum grating drifted in a sinusoidal trajectory (0.4 Hz), the animal's eye automatically followed the movement of the grating in a similar oscillatory manner (Figure 3B

Discussion

The OKR behavioral assay presented here provides several advantages. First, computer-generated visual stimulation solves the intrinsic issues of physical drums. Whereas physical drums do not support the systematic examination of spatial frequency, direction, or contrast tuning [8], the virtual drum allows these visual parameters to be changed on a trial-by-trial basis, thus facilitating a systematic and quantitative analysis of the feature selectivity of the OKR beha...

Disclosures

The authors declare no competing interests.

Acknowledgements

We are thankful to Yingtian He for sharing data of direction tuning. This work was supported by grants from the Canadian Foundation of Innovation and Ontario Research Fund (CFI/ORF project no. 37597), NSERC (RGPIN-2019-06479), CIHR (Project Grant 437007), and Connaught New Researcher Awards.

Materials

| Name | Company | Catalog Number | Comments |
| --- | --- | --- | --- |
| 2D translational stage | Thorlabs | XYT1 | |
| Acrylic resin | Lang Dental | B1356 | For fixing headplate on skull and protecting skull |
| Bupivacaine | STERIMAX | ST-BX223 | Bupivacaine Injection BP 0.5%. Local anesthesia |
| Carprofen | RIMADYL | 8507-14-1 | Analgesia |
| Compressed air | Dust-Off | | |
| Eye ointment | Alcon | Systane | For maintaining moisture of eyes |
| Graphic card | NVIDIA | GeForce GTX 1650 or Quadro P620 | For generating single screen among three monitors |
| Heating pad | Kent Scientific | HTP-1500 | For maintaining body temperature |
| High-speed infrared (IR) camera | Teledyne Dalsa | G3-GM12-M0640 | For recording eye rotation |
| IR LED | Digikey | PDI-E803-ND | For CR reference and the illumination of the eye |
| IR mirror | Edmund optics | 64-471 | For reflecting image of eye |
| Isoflurane | FRESENIUS KABI | CP0406V2 | |
| Labview | National instruments | version 2014 | Eye tracking |
| Lactated ringer | BAXTER | JB2324 | Water and energy supply |
| Lidocaine and epinephrine mix | Dentsply Sirona | 82215-1 | XYLOCAINE. Local anesthesia |
| Luminance Meter | Konica Minolta | LS-150 | For calibration of monitors |
| Matlab | MathWorks | version xxx | Analysis of eye movements |
| Meyhoefer Curette | World Precision Instruments | 501773 | For scraping skull and removing fascia |
| Microscope calibration slide | Amscope | MR095 | To measure the magnification of video-oculography |
| Monitors | Acer | B247W | Visual stimulation |
| Neutral density filter | Lee filters | 299 | To generate scotopic visual stimulation |
| Night vision goggle | Alpha optics | AO-3277 | For scotopic OKR |
| Photodiode | Digikey | TSL254-R-LF-ND | To synchronize visual stimulation and video-oculography |
| Pilocarpine hydrochloride | Sigma-Aldrich | P6503 | |
| Post | Thorlabs | TR1.5 | |
| Post holder | Thorlabs | PH1 | |
| PsychoPy | open source software | version xxx | Visual stimulation toolkit |
| Scissor | RWD | S12003-09 | For skin removal |
| Superglue | Krazy Glue | Type: All purpose | For adhering headplate on the skull |

References

1. Gerhard, D. Neuroscience. 5th Edition. Yale Journal of Biology and Medicine. (2013).

2. Distler, C., Hoffmann, K. P. The Oxford Handbook of Eye Movement. 65-83 (2011).

3. Sereno, A. B., Bolding, M. S. Executive Functions: Eye Movements and Human Neurological Disorders. (2017).

4. Giolli, R. A., Blanks, R. H. I., Lui, F. The accessory optic system: basic organization with an update on connectivity, neurochemistry, and function. Progress in Brain Research. 151, 407-440 (2006).

5. Liu, B. H., Huberman, A. D., Scanziani, M. Cortico-fugal output from visual cortex promotes plasticity of innate motor behaviour. Nature. 538 (7625), 383-387 (2016).

6. Prusky, G. T., Alam, N. M., Beekman, S., Douglas, R. M. Rapid quantification of adult and developing mouse spatial vision using a virtual optomotor system. Investigative Ophthalmology & Visual Science. 45 (12), 4611-4616 (2004).

7. Stahl, J. S., van Alphen, A. M., De Zeeuw, C. I. A comparison of video and magnetic search coil recordings of mouse eye movements. Journal of Neuroscience Methods. 99 (1-2), 101-110 (2000).

8. Faulstich, B. M., Onori, K. A., du Lac, S. Comparison of plasticity and development of mouse optokinetic and vestibulo-ocular reflexes suggests differential gain control mechanisms. Vision Research. 44 (28), 3419-3427 (2004).

9. Katoh, A., Kitazawa, H., Itohara, S., Nagao, S. Dynamic characteristics and adaptability of mouse vestibulo-ocular and optokinetic response eye movements and the role of the flocculo-olivary system revealed by chemical lesions. Proceedings of the National Academy of Sciences. 95 (13), 7705-7710 (1998).

10. Cahill, H., Nathans, J. The optokinetic reflex as a tool for quantitative analyses of nervous system function in mice: application to genetic and drug-induced variation. PLoS One. 3 (4), 2055 (2008).

11. Cameron, D. J., et al. The optokinetic response as a quantitative measure of visual acuity in zebrafish. Journal of Visualized Experiments. (80), 50832 (2013).

12. de Jeu, M., De Zeeuw, C. I. Video-oculography in mice. Journal of Visualized Experiments. (65), e3971 (2012).

13. Kodama, T., du Lac, S. Adaptive acceleration of visually evoked smooth eye movements in mice. The Journal of Neuroscience. 36 (25), 6836-6849 (2016).

14. Doering, C. J., et al. Modified Ca(v)1.4 expression in the Cacna1f(nob2) mouse due to alternative splicing of an ETn inserted in exon 2. PLoS One. 3 (7), e2538 (2008).

15. Shi, C., et al. Optimization of optomotor response-based visual function assessment in mice. Scientific Reports. 8 (1), 9708 (2018).

16. Waldner, D. M., et al. Transgenic expression of Cacna1f rescues vision and retinal morphology in a mouse model of congenital stationary night blindness 2A (CSNB2A). Translational Vision Science & Technology. 9 (11), 19 (2020).

17. Tabata, H., Shimizu, N., Wada, Y., Miura, K., Kawano, K. Initiation of the optokinetic response (OKR) in mice. Journal of Vision. 10 (1), 1-17 (2010).

18. Al-Khindi, T., et al. The transcription factor Tbx5 regulates direction-selective retinal ganglion cell development and image stabilization. Current Biology. 32 (19), 4286-4298 (2022).

19. Harris, S. C., Dunn, F. A. Asymmetric retinal direction tuning predicts optokinetic eye movements across stimulus conditions. eLife. 12, e81780 (2023).

20. van Alphen, B., Winkelman, B. H., Frens, M. A. Three-dimensional optokinetic eye movements in the C57BL/6J mouse. Investigative Ophthalmology & Visual Science. 51 (1), 623-630 (2010).

21. Stahl, J. S. Calcium channelopathy mutants and their role in ocular motor research. Annals of the New York Academy of Sciences. 956, 64-74 (2002).

22. Endo, S., et al. Dual involvement of G-substrate in motor learning revealed by gene deletion. Proceedings of the National Academy of Sciences. 106 (9), 3525-3530 (2009).

23. Thomas, B. B., Seiler, M. J., Sadda, S. R., Coffey, P. J., Aramant, R. B. Optokinetic test to evaluate visual acuity of each eye independently. Journal of Neuroscience Methods. 138 (1-2), 7-13 (2004).

24. Burroughs, S. L., Kaja, S., Koulen, P. Quantification of deficits in spatial visual function of mouse models for glaucoma. Investigative Ophthalmology & Visual Science. 52 (6), 3654-3659 (2011).

25. Wakita, R., et al. Differential regulations of vestibulo-ocular reflex and optokinetic response by β- and α2-adrenergic receptors in the cerebellar flocculus. Scientific Reports. 7 (1), 3944 (2017).

26. Dehmelt, F. A., et al. Spherical arena reveals optokinetic response tuning to stimulus location, size, and frequency across entire visual field of larval zebrafish. eLife. 10, e63355 (2021).

27. Magnusson, M., Pyykko, I., Jantti, V. Effect of alertness and visual attention on optokinetic nystagmus in humans. American Journal of Otolaryngology. 6 (6), 419-425 (1985).

28. Collins, W. E., Schroeder, D. J., Elam, G. W. Effects of D-amphetamine and of secobarbital on optokinetic and rotation-induced nystagmus. Aviation, Space, and Environmental Medicine. 46 (4), 357-364 (1975).

29. Reimer, J., et al. Pupil fluctuations track fast switching of cortical states during quiet wakefulness. Neuron. 84 (2), 355-362 (2014).

30. Sakatani, T., Isa, T. PC-based high-speed video-oculography for measuring rapid eye movements in mice. Neuroscience Research. 49 (1), 123-131 (2004).

31. Sakatani, T., Isa, T. Quantitative analysis of spontaneous saccade-like rapid eye movements in C57BL/6 mice. Neuroscience Research. 58 (3), 324-331 (2007).

32. Vinck, M., Batista-Brito, R., Knoblich, U., Cardin, J. A. Arousal and locomotion make distinct contributions to cortical activity patterns and visual encoding. Neuron. 86 (3), 740-754 (2015).

33. Bradley, M. M., Miccoli, L., Escrig, M. A., Lang, P. J. The pupil as a measure of emotional arousal and autonomic activation. Psychophysiology. 45 (4), 602-607 (2008).

34. Hess, E. H., Polt, J. M. Pupil size as related to interest value of visual stimuli. Science. 132 (3423), 349-350 (1960).

35. Di Stasi, L. L., Catena, A., Canas, J. J., Macknik, S. L., Martinez-Conde, S. Saccadic velocity as an arousal index in naturalistic tasks. Neuroscience and Biobehavioral Reviews. 37 (5), 968-975 (2013).

Copyright © 2025 MyJoVE Corporation. All rights reserved